Introduction

Change Data Capture (CDC) tools help organizations track and move database changes in near real time. In simple terms, when data is inserted, updated, or deleted in a source system, a CDC tool captures that change and sends it to another system such as a data warehouse, data lake, analytics platform, search engine, or operational application.
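As a rough illustration of that flow, here is a minimal, tool-agnostic Python sketch. The `SourceTable` class and `apply_changes` function are hypothetical. The source appends every write to an in-memory change log, which is loosely similar to trigger-based CDC (log-based tools read the database's transaction log instead). A replicator then applies those events to a target in order.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class ChangeEvent:
    op: str                          # "insert", "update", or "delete"
    key: int                         # primary key of the affected row
    row: Optional[Dict[str, Any]]    # new row image; None for deletes


@dataclass
class SourceTable:
    """Hypothetical source table that records every write in a change log."""
    rows: Dict[int, Dict[str, Any]] = field(default_factory=dict)
    change_log: List[ChangeEvent] = field(default_factory=list)

    def insert(self, key: int, row: Dict[str, Any]) -> None:
        self.rows[key] = row
        self.change_log.append(ChangeEvent("insert", key, dict(row)))

    def update(self, key: int, row: Dict[str, Any]) -> None:
        self.rows[key] = row
        self.change_log.append(ChangeEvent("update", key, dict(row)))

    def delete(self, key: int) -> None:
        del self.rows[key]
        self.change_log.append(ChangeEvent("delete", key, None))


def apply_changes(target: Dict[int, Dict[str, Any]], events: List[ChangeEvent]) -> None:
    """Replay change events, in order, against a target table."""
    for event in events:
        if event.op == "delete":
            target.pop(event.key, None)
        else:  # inserts and updates both overwrite the row image
            target[event.key] = event.row


source = SourceTable()
source.insert(1, {"name": "Ada", "tier": "free"})
source.insert(2, {"name": "Lin", "tier": "free"})
source.update(1, {"name": "Ada", "tier": "pro"})
source.delete(2)

target: Dict[int, Dict[str, Any]] = {}
apply_changes(target, source.change_log)
print(target)  # {1: {'name': 'Ada', 'tier': 'pro'}}
```

Because the target replays the log in order, it ends up matching the source without ever copying the full table.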

CDC matters because businesses now need faster reporting, cleaner data pipelines, real-time customer insights, and reliable system synchronization. Instead of repeatedly running heavy batch jobs that reload entire tables, CDC moves only the changed data, which saves time, reduces load on source systems, and improves data freshness.
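One simple way to move only changed rows is query-based CDC. You track a high-watermark timestamp and read only the rows modified since the previous run. The sketch below assumes a hypothetical `updated_at` column and in-memory rows. This approach cannot see hard deletes, which is one reason log-based CDC tools read the transaction log instead.

```python
from datetime import datetime

# Hypothetical source rows with a last-modified timestamp column.
SOURCE_ROWS = [
    {"id": 1, "status": "paid", "updated_at": datetime(2024, 1, 1, 9, 0)},
    {"id": 2, "status": "paid", "updated_at": datetime(2024, 1, 1, 10, 0)},
    {"id": 3, "status": "shipped", "updated_at": datetime(2024, 1, 2, 8, 0)},
    {"id": 4, "status": "refunded", "updated_at": datetime(2024, 1, 2, 11, 30)},
    {"id": 5, "status": "paid", "updated_at": datetime(2024, 1, 1, 12, 0)},
]


def full_reload(rows):
    """Batch approach: copy every row on every run."""
    return list(rows)


def incremental_since(rows, watermark):
    """Copy only rows modified after the last watermark; return them and the new watermark."""
    changed = [r for r in rows if r["updated_at"] > watermark]
    new_watermark = max((r["updated_at"] for r in changed), default=watermark)
    return changed, new_watermark


last_run = datetime(2024, 1, 1, 23, 59)
changed, next_watermark = incremental_since(SOURCE_ROWS, last_run)
print(len(full_reload(SOURCE_ROWS)), "rows via full reload")  # 5 rows via full reload
print(len(changed), "rows via incremental extraction")        # 2 rows via incremental extraction
```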

Best for: Data engineers, platform teams, analytics teams, cloud migration teams, enterprises, SaaS companies, fintech, retail, healthcare, and any business that needs fresh and reliable data movement.

Not ideal for: Very small teams with simple reporting needs, one-time CSV exports, or organizations where basic scheduled ETL is enough.

Key Trends in Change Data Capture (CDC) Tools

- Real-time data pipelines are becoming standard as companies move away from slow batch-based reporting.

- Cloud-native CDC adoption is increasing because more businesses are moving databases and analytics platforms to cloud services.

- Event streaming integration is growing, especially with Kafka-based architectures and operational data platforms.

- AI-assisted monitoring is becoming more useful for detecting pipeline failures, schema issues, latency spikes, and unusual data behavior.

- Security expectations are higher, with more focus on encryption, RBAC, audit logs, SSO, and compliance readiness.

- Hybrid deployment is still important because many enterprises run both on-premises databases and cloud platforms.

- Open-source CDC is gaining adoption among developer teams that want flexibility and control.

- Low-code CDC platforms are popular with data teams that want faster setup and less engineering overhead.

- Schema evolution handling is now critical because modern applications change quickly.

- Pricing transparency remains a challenge, especially for high-volume CDC workloads.
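The hypothetical Python helper below illustrates what schema evolution handling involves. It covers only the simplest case, an additive change. When a change event contains a column the target does not have, it adds the column, backfills existing rows with `None` (NULL), and then writes the row. Real tools also need policies for renamed columns, type changes, and dropped columns.

```python
def apply_with_schema_evolution(target_columns, target_rows, key, event_row):
    """Write event_row into target_rows, adding any new columns first.

    target_columns: ordered list of column names in the target table.
    target_rows: dict mapping primary key -> row dict.
    Returns the list of columns that were added.
    """
    added = [col for col in event_row if col not in target_columns]
    for col in added:
        target_columns.append(col)          # roughly an ALTER TABLE ... ADD COLUMN
        for row in target_rows.values():
            row.setdefault(col, None)       # backfill existing rows with NULL
    # Columns missing from the event are written as None rather than raising an error.
    target_rows[key] = {col: event_row.get(col) for col in target_columns}
    return added


columns = ["id", "email"]
rows = {1: {"id": 1, "email": "a@example.com"}}
added = apply_with_schema_evolution(
    columns, rows, 2, {"id": 2, "email": "b@example.com", "country": "DE"}
)
print(added)    # ['country']
print(rows[1])  # {'id': 1, 'email': 'a@example.com', 'country': None}
```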

How We Selected These Tools

- Selected tools with strong market recognition in CDC, replication, streaming, and data movement.

- Included a mix of enterprise, cloud-native, open-source, and developer-focused options.

- Considered database source support, target support, latency, and reliability.

- Looked at usability for different buyer segments such as SMBs, mid-market teams, and large enterprises.

- Considered ecosystem strength, including Kafka, cloud warehouses, databases, APIs, and data lakes.

- Prioritized tools that support modern data architectures and real-time data pipelines.

- Included tools with strong documentation, community, or enterprise support where applicable.

- Avoided unsupported or unclear products where CDC capability is not central.

- Used “N/A” or “Not publicly stated” where ratings, certifications, or compliance details are uncertain.

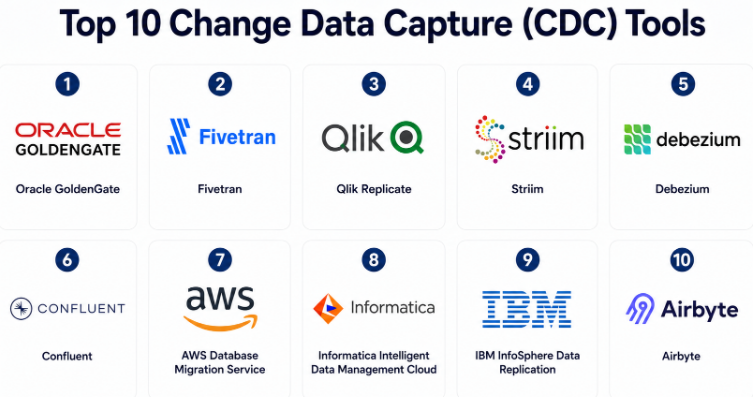

Top 10 Change Data Capture (CDC) Tools

#1 — Oracle GoldenGate

Short description:

Oracle GoldenGate is an enterprise-grade data replication and CDC platform used by large organizations that need high reliability, low latency, and strong database replication capabilities. It is especially strong for Oracle database environments, but it also supports broader heterogeneous data movement scenarios. The tool is commonly used for real-time replication, cloud migration, high availability, analytics pipelines, and database modernization. It is best suited for enterprises with complex database estates and mission-critical workloads. It may require experienced administrators for planning, setup, and long-term management.

Key Features

- Real-time data replication and CDC

- Strong support for Oracle database environments

- Heterogeneous database replication support

- High availability and disaster recovery use cases

- Supports cloud migration and modernization projects

- Low-latency data movement capabilities

- Enterprise-grade monitoring and management options

Pros

- Strong choice for complex enterprise replication.

- Mature platform with deep database-focused capabilities.

- Useful for mission-critical and high-volume environments.

Cons

- Can be complex for smaller teams.

- Licensing and implementation may require careful planning.

- Best value is often seen in larger enterprise environments.

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Supports enterprise security patterns such as encryption, access control, and auditing depending on deployment. Specific compliance certifications vary by environment and service configuration.

Integrations & Ecosystem

Oracle GoldenGate fits well into enterprise database and cloud ecosystems. It is especially useful where Oracle systems are central, but it can also support broader data replication architectures.

- Oracle Database

- Non-Oracle databases

- Cloud data platforms

- Data warehouses

- Data lakes

- Enterprise monitoring systems

Support & Community

Oracle provides enterprise documentation, product support, training resources, and professional services. Community knowledge is strong in Oracle-heavy environments, but implementation usually benefits from experienced database engineers.

#2 — Fivetran

Short description:

Fivetran is a cloud-based data movement platform known for managed connectors and automated data pipelines. It supports CDC for several database sources and is often used to replicate operational data into cloud data warehouses. Fivetran is popular among analytics teams that want less infrastructure management and faster pipeline setup. It works well for companies that prefer a managed approach over building and maintaining custom CDC pipelines. It is especially useful for data warehouse, BI, and modern analytics workflows.

Key Features

- Managed data connectors

- Database replication with CDC support

- Automated schema handling

- Cloud warehouse-focused architecture

- Monitoring and pipeline health visibility

- Minimal infrastructure management

- Broad SaaS and database connector ecosystem

Pros

- Easy to adopt for analytics teams.

- Reduces custom pipeline maintenance.

- Strong connector ecosystem.

Cons

- Pricing can increase with data volume.

- Less control than self-managed CDC frameworks.

- May not fit highly customized replication needs.

Platforms / Deployment

Cloud

Security & Compliance

Security features commonly include encryption, access controls, and enterprise authentication options. Specific compliance details may vary by plan and region.

Integrations & Ecosystem

Fivetran integrates strongly with modern cloud data stacks and analytics platforms. It is commonly used to move data into warehouses for reporting and transformation.

- Snowflake

- BigQuery

- Databricks

- Redshift

- PostgreSQL

- MySQL

- Salesforce and SaaS tools

Support & Community

Fivetran offers documentation, onboarding resources, support plans, and customer success options. The platform is widely discussed in modern data engineering communities.

#3 — Qlik Replicate

Short description:

Qlik Replicate is a data replication and CDC platform designed for real-time data movement across databases, warehouses, data lakes, and cloud environments. It is often used by enterprises that need reliable replication without heavy manual coding. The tool supports a wide range of sources and targets, making it suitable for hybrid and multi-cloud data strategies. It is useful for analytics modernization, cloud migration, operational reporting, and data lake ingestion. It fits teams that need enterprise CDC capabilities with broad platform coverage.

Key Features

- Real-time database replication

- CDC-based data movement

- Broad source and target coverage

- Data lake and warehouse ingestion

- Cloud migration support

- Automation for schema and metadata changes

- Monitoring and management capabilities

Pros

- Strong enterprise CDC and replication coverage.

- Good fit for hybrid data environments.

- Useful for migration and analytics modernization.

Cons

- May require enterprise-level budget.

- Setup can be more involved than lightweight tools.

- Advanced use cases may need skilled data engineers.

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Enterprise security capabilities may include encryption, user access controls, and audit-related features depending on deployment. Compliance specifics are not publicly stated here.

Integrations & Ecosystem

Qlik Replicate supports many enterprise data systems and is often used where businesses need to move data from legacy systems to modern cloud platforms.

- Oracle

- SQL Server

- PostgreSQL

- Snowflake

- Databricks

- AWS, Azure, and Google Cloud data services

Support & Community

Qlik provides enterprise support, documentation, training, and partner resources. Community strength is good among data integration and analytics modernization users.

#4 — Striim

Short description:

Striim is a real-time data integration and streaming platform that supports CDC, event processing, and cloud data movement. It is designed for teams that need low-latency pipelines and operational intelligence. Striim is useful for streaming database changes into cloud warehouses, data lakes, Kafka, and analytics systems. It can support use cases such as fraud detection, real-time dashboards, migration, and event-driven applications. It is a strong fit for organizations that want CDC plus streaming analytics in one platform.

Key Features

- Real-time CDC pipelines

- Streaming data integration

- In-flight processing and enrichment

- Kafka and cloud platform support

- Monitoring and pipeline observability

- Schema handling capabilities

- Real-time analytics use cases
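In-flight processing means transforming change events between capture and delivery. Striim provides its own pipeline tooling for this. The plain-Python generator below is not Striim's API. It is only a tool-neutral sketch of the idea. It assumes hypothetical order events and a hypothetical store-to-region lookup, adds a `region` field, and flags large orders for review, as a fraud-detection pipeline might.

```python
REGION_BY_STORE = {"S1": "north", "S2": "south"}  # hypothetical reference data


def enrich_in_flight(events, region_by_store, review_threshold):
    """Yield change events with enrichment applied to the new row image."""
    for event in events:
        row = event.get("after")
        if row is None:              # deletes carry no new row image; pass through
            yield event
            continue
        enriched = dict(row)         # copy so the original event is not mutated
        enriched["region"] = region_by_store.get(row["store_id"], "unknown")
        enriched["needs_review"] = row["amount"] >= review_threshold
        yield {**event, "after": enriched}


events = [
    {"op": "insert", "key": 10, "after": {"store_id": "S1", "amount": 40.0}},
    {"op": "insert", "key": 11, "after": {"store_id": "S9", "amount": 2500.0}},
    {"op": "delete", "key": 10, "after": None},
]
enriched_events = list(enrich_in_flight(events, REGION_BY_STORE, review_threshold=1000.0))
```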

Pros

- Strong real-time streaming focus.

- Useful for operational and analytical workloads.

- Good for low-latency data movement.

Cons

- May be more advanced than simple ETL needs.

- Requires planning for streaming architecture.

- Pricing and deployment details may vary.

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Supports enterprise security patterns such as encryption, authentication, access controls, and monitoring. Specific compliance certifications are not publicly stated here.

Integrations & Ecosystem

Striim works across databases, messaging systems, and cloud analytics platforms. It is often selected when CDC pipelines need real-time transformation or streaming logic.

- Kafka

- Oracle

- SQL Server

- PostgreSQL

- Snowflake

- BigQuery

- Databricks

Support & Community

Striim provides enterprise documentation, technical support, onboarding assistance, and solution guidance. Community presence is more enterprise-focused than open-source-first.

#5 — Debezium

Short description:

Debezium is a popular open-source CDC platform commonly used with Apache Kafka and Kafka Connect. It captures row-level changes from databases and streams them as events. Debezium is a strong option for developer teams building event-driven systems, microservices, audit trails, and real-time data pipelines. It is flexible and powerful, but it usually needs engineering skill to deploy, monitor, and operate properly. It is best for teams that want control, extensibility, and open-source CDC capability.
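Each Debezium change event carries the row state before and after the change, plus an operation code: `c` for create, `u` for update, `d` for delete, and `r` for a snapshot read. The sketch below assumes messages serialized with Kafka Connect's JSON converter and maps them to a simple upsert or delete instruction. The `to_row_change` helper and its return values are hypothetical.

```python
import json


def to_row_change(message_value):
    """Map a Debezium-style JSON change event to an (action, row) pair."""
    if message_value is None:
        # By default Debezium follows a delete with a tombstone (null value)
        # so Kafka log compaction can drop the key.
        return ("tombstone", None)
    envelope = json.loads(message_value)
    # With schemas enabled the event sits under "payload"; otherwise it is top-level.
    payload = envelope.get("payload", envelope)
    op = payload["op"]
    if op in ("c", "u", "r"):   # create, update, snapshot read
        return ("upsert", payload["after"])
    if op == "d":               # delete: "after" is null, "before" holds the old row or key
        return ("delete", payload["before"])
    if op == "t":               # truncate, emitted by some connectors
        return ("truncate", None)
    raise ValueError(f"unsupported op: {op!r}")


message = json.dumps({
    "payload": {
        "before": {"id": 7, "email": "old@example.com"},
        "after": {"id": 7, "email": "new@example.com"},
        "op": "u",
        "ts_ms": 1700000000000,
    }
})
print(to_row_change(message))  # ('upsert', {'id': 7, 'email': 'new@example.com'})
```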

Key Features

- Open-source CDC framework

- Kafka Connect-based architecture

- Database change event streaming

- Supports common relational databases

- Useful for event-driven microservices

- Schema change capture support

- Strong developer ecosystem

Pros

- Flexible and developer-friendly.

- Strong open-source community.

- Good fit for Kafka-based architectures.

Cons

- Requires engineering effort to operate.

- Monitoring and scaling need careful setup.

- Not a low-code managed platform by default.

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

Security depends heavily on the deployment environment, Kafka setup, network controls, and database configuration. Compliance details are not publicly stated.

Integrations & Ecosystem

Debezium is deeply connected to Kafka-based data architectures. It works well when teams already use Kafka, Kafka Connect, and event-driven design patterns.

- Apache Kafka

- Kafka Connect

- PostgreSQL

- MySQL

- SQL Server

- MongoDB

- Red Hat ecosystem
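Debezium connectors are typically registered by posting a JSON configuration to the Kafka Connect REST API. The Python sketch below builds such a request for the PostgreSQL connector, using property names from the Debezium 2.x documentation. The host, database, credentials, table names, and the `localhost:8083` Connect endpoint are placeholder assumptions. The request is only constructed, not sent.

```python
import json
import urllib.request


def debezium_postgres_connector(name, host, dbname, user, password, tables, topic_prefix):
    """Build a Kafka Connect registration payload for a Debezium PostgreSQL connector."""
    return {
        "name": name,
        "config": {
            "connector.class": "io.debezium.connector.postgresql.PostgresConnector",
            "tasks.max": "1",
            "database.hostname": host,
            "database.port": "5432",
            "database.user": user,
            "database.password": password,
            "database.dbname": dbname,
            "plugin.name": "pgoutput",      # logical decoding plugin built into PostgreSQL 10+
            "topic.prefix": topic_prefix,   # Debezium 2.x; 1.x used database.server.name
            "table.include.list": ",".join(tables),
        },
    }


payload = debezium_postgres_connector(
    name="orders-cdc", host="postgres.internal", dbname="shop",
    user="debezium", password="change-me",  # placeholders only
    tables=["public.orders", "public.customers"], topic_prefix="shop",
)

request = urllib.request.Request(
    "http://localhost:8083/connectors",     # assumed Kafka Connect REST endpoint
    data=json.dumps(payload).encode("utf-8"),
    headers={"Content-Type": "application/json"},
    method="POST",
)
# urllib.request.urlopen(request)  # uncomment to submit to a running Connect cluster
```

With these settings, changes to `public.orders` would be published to a topic named `shop.public.orders`, following the `<topic.prefix>.<schema>.<table>` pattern.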

Support & Community

Debezium has strong open-source documentation and community support. Enterprise support may be available through vendors and managed platforms depending on deployment choice.

#6 — Confluent

Short description:

Confluent is a streaming data platform built around Apache Kafka. While it is broader than CDC alone, it is widely used for CDC pipelines through connectors and event streaming patterns. Confluent helps teams capture database changes, stream events, process data, and connect operational systems in real time. It is suitable for companies building event-driven applications, real-time analytics, and scalable streaming platforms. It is especially valuable where Kafka is a strategic part of the architecture.

Key Features

- Managed and self-managed Kafka options

- CDC connector ecosystem

- Stream processing capabilities

- Schema Registry support

- Enterprise-grade monitoring and governance

- Cloud and hybrid deployment patterns

- Strong event-driven architecture support

Pros

- Excellent for Kafka-centered CDC use cases.

- Strong ecosystem for streaming data.

- Suitable for large-scale event-driven systems.

Cons

- Broader platform than CDC alone.

- Kafka expertise is often needed.

- Can be complex for small teams.

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Supports enterprise security features such as encryption, access controls, RBAC, audit-related capabilities, and authentication options. Specific compliance details may vary by product edition and deployment.

Integrations & Ecosystem

Confluent has a large connector and streaming ecosystem. It is useful when CDC data must feed multiple downstream consumers in real time.

- Kafka connectors

- Databases

- Cloud warehouses

- Data lakes

- Stream processing tools

- Monitoring and governance systems
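Kafka-based platforms suit CDC fan-out largely because each consumer group tracks its own position (offset) in the same ordered log. A warehouse loader and a search indexer can therefore read the same change stream independently. The class below is a deliberately simplified in-memory model of that idea. It is not the Kafka or Confluent client API.

```python
class ChangeLog:
    """Append-only log where each consumer group keeps its own read offset."""

    def __init__(self):
        self.events = []
        self.offsets = {}  # consumer group -> index of the next event to read

    def append(self, event):
        self.events.append(event)

    def poll(self, group, max_events=100):
        start = self.offsets.get(group, 0)
        batch = self.events[start:start + max_events]
        # Simplification: the offset advances as soon as the batch is handed out.
        # Real consumers usually commit only after processing succeeds.
        self.offsets[group] = start + len(batch)
        return batch


log = ChangeLog()
for event in ["order 1 created", "order 1 paid", "order 2 created"]:
    log.append(event)

warehouse_batch = log.poll("warehouse-loader")           # all three events
search_batch = log.poll("search-indexer", max_events=2)  # first two events
log.append("order 2 paid")
print(log.poll("warehouse-loader"))  # ['order 2 paid']
print(log.poll("search-indexer"))    # ['order 2 created', 'order 2 paid']
```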

Support & Community

Confluent has strong documentation, training, enterprise support, and a large Kafka community. It is one of the strongest ecosystems for real-time streaming data architecture.

#7 — AWS Database Migration Service

Short description:

AWS Database Migration Service is a cloud service used for database migration, replication, and CDC-style ongoing data movement within AWS-centered environments. It is often used to migrate databases to AWS with minimal downtime or to keep source and target systems synchronized during the transition. It supports multiple database engines and can help teams move from on-premises to cloud or between cloud databases. It is a practical choice for AWS users who need managed migration and replication capability.
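A DMS task is defined by a migration type and a JSON table-mapping document that selects what to replicate. The sketch below builds both in Python. The `MigrationType` values (`full-load`, `cdc`, `full-load-and-cdc`) and the selection-rule fields follow the AWS DMS documentation. The schema, tables, task identifier, and ARN placeholders are assumptions. The boto3 call is left commented out because it needs real AWS resources.

```python
import json


def dms_table_mappings(schema, tables):
    """Build a DMS table-mapping JSON string that includes the given tables."""
    rules = [
        {
            "rule-type": "selection",
            "rule-id": str(i),
            "rule-name": f"include-{schema}-{table}",
            "object-locator": {"schema-name": schema, "table-name": table},
            "rule-action": "include",
        }
        for i, table in enumerate(tables, start=1)
    ]
    return json.dumps({"rules": rules})


task_kwargs = {
    "ReplicationTaskIdentifier": "sales-to-aurora",
    "SourceEndpointArn": "<source-endpoint-arn>",            # placeholder
    "TargetEndpointArn": "<target-endpoint-arn>",            # placeholder
    "ReplicationInstanceArn": "<replication-instance-arn>",  # placeholder
    "MigrationType": "full-load-and-cdc",  # copy existing rows, then keep replicating changes
    "TableMappings": dms_table_mappings("sales", ["orders", "customers"]),
}
# import boto3
# boto3.client("dms").create_replication_task(**task_kwargs)
```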

Key Features

- Database migration support

- Ongoing replication with CDC

- Supports multiple database engines

- Useful for cloud modernization

- Managed AWS service model

- Integration with AWS data services

- Supports homogeneous and heterogeneous migration scenarios

Pros

- Strong fit for AWS migration projects.

- Reduces need to build custom replication tooling.

- Useful for modernization and cloud adoption.

Cons

- Best fit is AWS-centered architecture.

- Advanced troubleshooting may need cloud expertise.

- Not always the best choice for non-AWS environments.

Platforms / Deployment

Cloud

Security & Compliance

Security depends on AWS configuration, IAM, encryption settings, networking, and audit setup. Compliance alignment depends on the broader AWS environment and customer configuration.

Integrations & Ecosystem

AWS Database Migration Service integrates well with AWS databases, storage, analytics, and monitoring services. It is commonly used during cloud migration programs.

- Amazon RDS

- Amazon Aurora

- Amazon Redshift

- Amazon S3

- CloudWatch

- On-premises databases

- AWS analytics services

Support & Community

AWS provides documentation, support plans, workshops, and partner resources. Community knowledge is broad because AWS DMS is commonly used in cloud migration projects.

#8 — Informatica Intelligent Data Management Cloud

Short description:

Informatica Intelligent Data Management Cloud is a broad enterprise data management platform that includes data integration, replication, governance, quality, and cloud data management capabilities. For CDC use cases, it is useful where organizations need controlled, governed, and scalable data movement across enterprise systems. It is often selected by large teams that need data integration plus governance and compliance workflows. It may be more than a simple CDC tool, but it fits complex enterprise data environments well.

Key Features

- Enterprise data integration capabilities

- CDC and replication support depending on configuration

- Data governance and quality features

- Cloud and hybrid data management

- Broad connector ecosystem

- Metadata and monitoring capabilities

- Enterprise workflow support

Pros

- Strong fit for governed enterprise data programs.

- Broad platform beyond CDC alone.

- Useful for complex integration landscapes.

Cons

- May be too broad for simple CDC needs.

- Implementation can require planning and expertise.

- Cost and packaging may vary by use case.

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

Enterprise security options may include role-based access, encryption, authentication controls, and governance capabilities. Specific compliance details vary by service and configuration.

Integrations & Ecosystem

Informatica has a broad enterprise ecosystem across databases, SaaS applications, cloud platforms, warehouses, and governance tools.

- Cloud data warehouses

- Enterprise databases

- SaaS applications

- Data lakes

- Governance platforms

- Data quality workflows

Support & Community

Informatica offers enterprise documentation, training, support, partner resources, and professional services. It is best suited for teams that value structured onboarding and enterprise support.

#9 — IBM InfoSphere Data Replication

Short description:

IBM InfoSphere Data Replication is an enterprise replication platform used for real-time data integration, database replication, and CDC-style workloads. It is commonly found in organizations with IBM systems, mainframe environments, and complex enterprise database landscapes. The tool is useful for high-value replication, operational reporting, business continuity, and hybrid integration scenarios. It is best suited for enterprises with mature data operations and specialized infrastructure needs.

Key Features

- Enterprise data replication

- CDC-based change movement

- Support for complex database environments

- Useful for operational reporting

- Supports business continuity scenarios

- Hybrid data integration support

- Enterprise management capabilities

Pros

- Strong fit for IBM-heavy enterprise environments.

- Useful for complex and mature data estates.

- Supports mission-critical replication needs.

Cons

- May not be simple for small teams.

- Specialized expertise may be required.

- Usually not the quickest option to set up for lightweight use cases.

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

Enterprise security capabilities may depend on environment, access controls, encryption setup, and IBM infrastructure configuration. Specific compliance details are not publicly stated here.

Integrations & Ecosystem

IBM InfoSphere Data Replication fits well into IBM and enterprise database ecosystems. It is often used where legacy and modern systems must work together.

- IBM Db2

- Mainframe environments

- Enterprise databases

- Data warehouses

- Hybrid infrastructure

- IBM data platforms

Support & Community

IBM provides enterprise documentation, support, consulting, and partner resources. Community knowledge is strongest in IBM enterprise environments and legacy modernization projects.

#10 — Airbyte

Short description:

Airbyte is an open-source data movement platform with a strong connector ecosystem and growing adoption among modern data teams. While it is broader than CDC alone, it supports database replication use cases and is often considered by teams that want flexibility, open-source control, and cloud or self-managed deployment options. Airbyte is useful for startups, SMBs, and data teams that need many connectors without building everything from scratch. It is a practical choice when teams want a balance between openness and managed convenience.

Key Features

- Open-source data integration platform

- Large connector ecosystem

- Database replication support

- Cloud and self-hosted options

- Custom connector development

- Modern data stack compatibility

- Useful for ELT workflows

Pros

- Flexible and open-source friendly.

- Good connector coverage for modern teams.

- Suitable for teams that want deployment choice.

Cons

- CDC depth may vary by connector.

- Some advanced enterprise needs may require careful validation.

- Self-hosted operation requires maintenance.

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Security depends on deployment model and configuration. Enterprise security features may vary by plan. Compliance details are not publicly stated here.

Integrations & Ecosystem

Airbyte integrates with many SaaS tools, databases, warehouses, and data lakes. It is especially useful for modern ELT pipelines.

- PostgreSQL

- MySQL

- Snowflake

- BigQuery

- Redshift

- Databricks

- SaaS applications

Support & Community

Airbyte has strong open-source community activity, documentation, and commercial support options. It is a good fit for teams that value transparency and extensibility.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Oracle GoldenGate | Large enterprise database replication | Cloud / Self-hosted / Hybrid | Hybrid | Mature enterprise replication | N/A |
| Fivetran | Managed analytics pipelines | Cloud | Cloud | Automated managed connectors | N/A |
| Qlik Replicate | Hybrid enterprise CDC | Cloud / Self-hosted / Hybrid | Hybrid | Broad source and target replication | N/A |
| Striim | Real-time streaming CDC | Cloud / Self-hosted / Hybrid | Hybrid | CDC plus streaming processing | N/A |
| Debezium | Developer-first open-source CDC | Linux / Self-hosted | Self-hosted / Hybrid | Kafka-based open-source CDC | N/A |
| Confluent | Kafka-based event streaming | Cloud / Self-hosted / Hybrid | Hybrid | Enterprise Kafka ecosystem | N/A |
| AWS Database Migration Service | AWS database migration | Cloud | Cloud | Managed AWS migration and replication | N/A |
| Informatica IDMC | Governed enterprise data management | Cloud / Hybrid | Hybrid | CDC with broader data governance | N/A |
| IBM InfoSphere Data Replication | IBM and mainframe-heavy enterprises | Self-hosted / Hybrid | Hybrid | Enterprise replication for complex estates | N/A |
| Airbyte | Open-source ELT and replication | Cloud / Self-hosted / Hybrid | Hybrid | Flexible connector ecosystem | N/A |

Evaluation & Scoring of Change Data Capture (CDC) Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| Oracle GoldenGate | 10 | 6 | 9 | 9 | 10 | 9 | 7 | 8.60 |
| Fivetran | 8 | 9 | 9 | 8 | 8 | 8 | 7 | 8.15 |
| Qlik Replicate | 9 | 7 | 9 | 8 | 9 | 8 | 7 | 8.20 |
| Striim | 9 | 7 | 8 | 8 | 9 | 8 | 7 | 8.05 |
| Debezium | 8 | 6 | 8 | 6 | 8 | 8 | 9 | 7.65 |
| Confluent | 9 | 6 | 9 | 9 | 9 | 9 | 7 | 8.25 |
| AWS Database Migration Service | 8 | 8 | 8 | 8 | 8 | 8 | 8 | 8.00 |
| Informatica IDMC | 8 | 7 | 9 | 9 | 8 | 9 | 7 | 8.05 |
| IBM InfoSphere Data Replication | 8 | 6 | 8 | 8 | 8 | 8 | 6 | 7.40 |
| Airbyte | 7 | 8 | 8 | 6 | 7 | 7 | 9 | 7.50 |

These scores are comparative, not absolute. A higher score does not mean the tool is always the best choice for every company. Enterprise tools may score higher in depth and security, while open-source tools may score better in flexibility and value. Buyers should validate performance, pricing, security, and connector support through a pilot before making a final decision.
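Each weighted total is the sum of the category scores multiplied by their weights (25%, 15%, 15%, 10%, 10%, 10%, 15%). The short Python sketch below recomputes the totals from the category scores. You can use it to re-rank the tools with different weights during your own evaluation. The weights are passed as a parameter so you can substitute your own priorities.

```python
# Category weights in percent, in table column order:
# Core, Ease, Integrations, Security, Performance, Support, Value
WEIGHTS = (25, 15, 15, 10, 10, 10, 15)

SCORES = {
    "Oracle GoldenGate": (10, 6, 9, 9, 10, 9, 7),
    "Fivetran": (8, 9, 9, 8, 8, 8, 7),
    "Qlik Replicate": (9, 7, 9, 8, 9, 8, 7),
    "Striim": (9, 7, 8, 8, 9, 8, 7),
    "Debezium": (8, 6, 8, 6, 8, 8, 9),
    "Confluent": (9, 6, 9, 9, 9, 9, 7),
    "AWS Database Migration Service": (8, 8, 8, 8, 8, 8, 8),
    "Informatica IDMC": (8, 7, 9, 9, 8, 9, 7),
    "IBM InfoSphere Data Replication": (8, 6, 8, 8, 8, 8, 6),
    "Airbyte": (7, 8, 8, 6, 7, 7, 9),
}


def weighted_total(scores, weights=WEIGHTS):
    """Weighted average on the 0-10 scale; weights are percentages summing to 100."""
    if sum(weights) != 100:
        raise ValueError("weights must sum to 100")
    return sum(s * w for s, w in zip(scores, weights)) / 100


for tool, scores in sorted(SCORES.items(), key=lambda kv: -weighted_total(kv[1])):
    print(f"{tool}: {weighted_total(scores):.2f}")
```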

Which Change Data Capture (CDC) Tool Is Right for You?

Solo / Freelancer

Solo users usually do not need a heavy enterprise CDC platform unless they are building a technical product or data-heavy SaaS project. Airbyte and Debezium are practical choices for technical users who want lower-cost options and more control. Fivetran may be suitable if the budget allows and the user wants managed pipelines with less setup effort.

SMB

Small and mid-sized businesses should focus on ease of use, predictable maintenance, and connector availability. Fivetran, Airbyte, and AWS Database Migration Service are good starting points depending on the cloud environment. If the business already uses AWS, AWS DMS is often practical for migration and replication. If the team needs many SaaS connectors, Fivetran or Airbyte may be easier.

Mid-Market

Mid-market teams often need a balance of performance, governance, and cost. Qlik Replicate, Striim, Confluent, Fivetran, and Airbyte can all fit depending on architecture. For analytics-focused teams, Fivetran is attractive. For event-driven teams, Confluent and Debezium are stronger. For hybrid database replication, Qlik Replicate and Striim deserve serious evaluation.

Enterprise

Enterprises should evaluate reliability, security, auditability, support, deployment flexibility, and high-volume performance. Oracle GoldenGate, Qlik Replicate, Informatica IDMC, IBM InfoSphere Data Replication, Striim, and Confluent are strong candidates. The right choice depends heavily on existing databases, cloud strategy, compliance requirements, and internal skills.

Budget vs Premium

Budget-conscious teams should consider Debezium and Airbyte, especially if they have engineering capacity. Premium buyers may prefer Oracle GoldenGate, Qlik Replicate, Informatica IDMC, Striim, or Confluent for stronger enterprise features, support, and governance. Fivetran sits in the managed cloud category where value depends on connector needs and data volume.

Feature Depth vs Ease of Use

For maximum CDC depth, Oracle GoldenGate, Qlik Replicate, Striim, and Confluent are strong. For ease of use, Fivetran, Airbyte Cloud, and AWS DMS are usually quicker to start with. Debezium offers strong flexibility but needs engineering ownership.

Integrations & Scalability

For broad enterprise integrations, Informatica IDMC, Qlik Replicate, Fivetran, and Confluent are strong options. For Kafka-based scalability, Debezium and Confluent are natural choices. For AWS-first scaling, AWS Database Migration Service is practical. For open connector flexibility, Airbyte is a good candidate.

Security & Compliance Needs

Security-heavy buyers should prioritize tools with RBAC, encryption, audit logs, SSO, strong support, and deployment control. Enterprise teams should validate security documents directly during procurement. Oracle GoldenGate, Confluent, Informatica IDMC, Qlik Replicate, and AWS DMS are commonly considered in security-sensitive environments, but final compliance validation should always be done by the buyer.

Frequently Asked Questions

1. What is a Change Data Capture tool?

A CDC tool captures database changes such as inserts, updates, and deletes. It then sends those changes to another system in near real time. This helps teams avoid heavy batch jobs and keep data fresh.

2. How is CDC different from traditional ETL?

Traditional ETL often moves data in scheduled batches. CDC focuses on capturing only changed data as it happens. This makes CDC better for real-time analytics, replication, and event-driven use cases.

3. Are CDC tools expensive?

Pricing varies widely. Some open-source tools have lower software cost but need engineering time. Managed and enterprise tools may cost more but reduce maintenance and provide stronger support.

4. How long does CDC implementation take?

Simple cloud-based pipelines may be set up quickly. Complex enterprise replication can take longer because teams must validate schema changes, security, latency, failover, and monitoring.
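Latency is usually validated as end-to-end replication lag: the time between a change committing at the source and being applied at the target. The sketch below assumes each hypothetical event record carries both timestamps. It flags any event that exceeds a chosen threshold. Real tools expose lag through their own metrics, which this sketch does not model.

```python
from datetime import datetime, timedelta


def lag_alerts(events, threshold):
    """Return (event_id, lag) pairs whose source-to-target lag exceeds threshold."""
    alerts = []
    for event in events:
        lag = event["applied_at"] - event["committed_at"]
        if lag > threshold:
            alerts.append((event["id"], lag))
    return alerts


base = datetime(2024, 5, 1, 12, 0, 0)
events = [
    {"id": 1, "committed_at": base, "applied_at": base + timedelta(seconds=2)},
    {"id": 2, "committed_at": base, "applied_at": base + timedelta(seconds=45)},
    {"id": 3, "committed_at": base, "applied_at": base + timedelta(seconds=5)},
]
print(lag_alerts(events, threshold=timedelta(seconds=30)))
```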

5. What are common CDC implementation mistakes?

Common mistakes include ignoring schema changes, underestimating data volume, skipping monitoring, not testing recovery, and failing to validate target data quality. Security and access planning are also often missed.
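One way to validate target data quality is a reconciliation check that compares source and target after a pipeline run. The sketch below assumes both sides can be loaded as hypothetical `{primary_key: row}` dictionaries. It then compares per-row SHA-256 fingerprints. On large tables, you would typically compare counts or checksums per partition instead of pulling every row.

```python
import hashlib
import json


def row_fingerprint(row):
    """Stable hash of a row, independent of column order."""
    canonical = json.dumps(row, sort_keys=True, default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare_tables(source, target):
    """Report keys missing from the target, extra in the target, and with mismatched content."""
    missing = sorted(source.keys() - target.keys())
    extra = sorted(target.keys() - source.keys())
    mismatched = sorted(
        key for key in source.keys() & target.keys()
        if row_fingerprint(source[key]) != row_fingerprint(target[key])
    )
    return {"missing": missing, "extra": extra, "mismatched": mismatched}


source = {1: {"id": 1, "total": 10}, 2: {"id": 2, "total": 20}, 3: {"id": 3, "total": 30}}
target = {1: {"total": 10, "id": 1}, 2: {"id": 2, "total": 25}, 4: {"id": 4, "total": 40}}
print(compare_tables(source, target))  # {'missing': [3], 'extra': [4], 'mismatched': [2]}
```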

6. Are CDC tools secure?

CDC tools can be secure when configured correctly. Buyers should check encryption, access controls, RBAC, audit logs, SSO, network security, and compliance documentation before production use.

7. Can CDC tools handle large-scale data?

Yes, many CDC tools are built for high-volume environments. However, scalability depends on source databases, network capacity, target systems, connector design, and pipeline architecture.

8. Do CDC tools support cloud data warehouses?

Most modern CDC tools support popular cloud warehouses and analytics platforms. Buyers should confirm exact source and target support before selecting a tool.

9. Is open-source CDC a good option?

Open-source CDC can be excellent for teams with engineering skills. Debezium and Airbyte are strong examples, but they require more ownership around deployment, monitoring, upgrades, and troubleshooting.

10. When should a company switch CDC tools?

A company should consider switching when the current tool has poor reliability, limited connectors, high cost, weak monitoring, poor schema handling, or does not fit the future cloud strategy.

Conclusion

Change Data Capture tools are now an important part of modern data architecture because businesses need faster, cleaner, and more reliable data movement. The best CDC tool depends on your current systems, team skills, budget, security needs, and future data strategy. Oracle GoldenGate, Qlik Replicate, Informatica IDMC, IBM InfoSphere Data Replication, and Striim are strong for enterprise and hybrid environments. Fivetran and AWS Database Migration Service are useful for managed cloud-focused workflows. Debezium, Confluent, and Airbyte are strong choices for engineering-led and modern data teams.