Introduction

LLM gateways and model routing platforms help teams route, monitor, secure, and control the cost of requests sent to large language models. Instead of connecting every application directly to one AI model provider, teams use a gateway layer to centralize model access, apply policies, track usage, manage costs, handle failover, and route prompts to the best model for each use case.

These platforms matter because modern AI applications often use multiple models across different providers. One workload may need a low-cost model for summarization, another may need a high-quality model for reasoning, while another may require an open-source model running in a private environment. Without a routing layer, teams struggle with cost visibility, provider lock-in, latency issues, inconsistent security controls, and scattered API management.

Common use cases include:

- Routing prompts across multiple LLM providers

- Reducing AI inference cost with cheaper models for simple tasks

- Adding fallback models when one provider fails

- Monitoring latency, token usage, and cost

- Applying prompt security and access policies

- Managing AI API keys centrally

- Supporting internal AI platforms for developers

- Comparing model quality across providers

- Enforcing governance for enterprise AI usage

- Supporting RAG, agent, chatbot, and automation systems

Buyers should evaluate:

- Supported model providers

- Routing rules and fallback logic

- Cost tracking and token analytics

- Latency monitoring

- Prompt logging and observability

- Security and access control

- Data privacy options

- Rate limiting and quota management

- SDK and API compatibility

- Deployment model and enterprise readiness

Best for: AI platform teams, MLOps teams, DevOps teams, product engineers, application developers, enterprises, startups, SaaS companies, data teams, and organizations building production AI applications.

Not ideal for: Small experiments, one-off prototypes, or teams using a single model provider with low traffic and no need for routing, governance, cost control, or observability.

Key Trends in LLM Gateways & Model Routing Platforms

- Multi-model AI architecture: Teams increasingly use different models for reasoning, coding, summarization, extraction, embeddings, and chat.

- Cost-aware routing: Gateways are helping teams send simple tasks to cheaper models and complex tasks to stronger models.

- Fallback and resilience: Production AI systems need automatic failover when a model provider is slow, unavailable, or rate-limited.

- LLM observability: Token usage, latency, errors, prompt quality, completion quality, and cost tracking are now essential.

- Prompt security: Enterprises need controls for sensitive data, prompt injection, jailbreak attempts, and unsafe outputs.

- Model evaluation loops: Routing decisions are increasingly connected with model evaluation, A/B testing, and quality scoring.

- Provider abstraction: Developers want one API layer that can work across multiple model providers.

- Private and open-source model support: Many organizations want routing across both hosted models and self-hosted models.

- Governance and compliance: AI teams need audit logs, access policies, retention controls, and user-level usage visibility.

- Agent and RAG support: Gateways are becoming important infrastructure for retrieval-augmented generation and AI agent workflows.

How We Selected These Tools

The platforms below were selected based on practical usefulness, technical relevance, adoption among AI developers, routing and gateway capability, observability features, provider support, and fit for production AI workflows.

The evaluation considered:

- Model provider support and flexibility

- Gateway, proxy, or routing capability

- Developer experience and API compatibility

- Cost tracking and token-level analytics

- Observability and logging

- Security, governance, and policy controls

- Deployment flexibility

- Support for open-source and commercial models

- Fit for startups, SMBs, mid-market teams, and enterprises

- Usefulness for production LLM applications

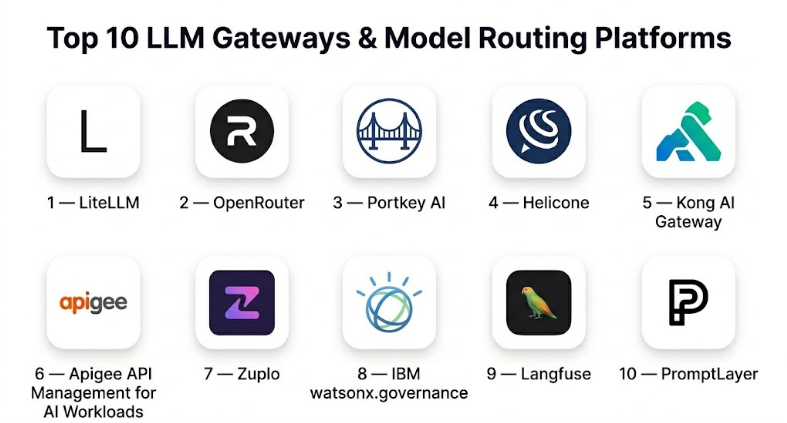

Top 10 LLM Gateways & Model Routing Platforms

#1 — LiteLLM

Short Description

LiteLLM is a widely used model gateway and proxy layer that helps developers call multiple LLM providers through a unified interface. It is especially useful for teams that want provider flexibility, model routing, logging, budgets, retries, and compatibility across different model APIs. LiteLLM is popular with developers building AI applications because it reduces the friction of switching models or supporting multiple providers. It is a strong choice for startups and platform teams that want practical multi-model routing without heavy enterprise complexity.
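The core idea of a unified interface is that application code calls one function and the gateway picks the provider. The sketch below is a hypothetical illustration, not LiteLLM's implementation: the adapter functions are stubs standing in for real provider SDK calls, the model names are placeholders, and the `provider/model` naming is modeled on the provider-prefixed model strings LiteLLM accepts.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

# Hypothetical provider adapters. A real gateway would call each provider's
# SDK or HTTP API here and normalize the response into a common shape.
def call_openai_stub(model: str, messages: List[Message]) -> str:
    return f"[openai:{model}] {messages[-1]['content']}"

def call_anthropic_stub(model: str, messages: List[Message]) -> str:
    return f"[anthropic:{model}] {messages[-1]['content']}"

ADAPTERS: Dict[str, Callable[[str, List[Message]], str]] = {
    "openai": call_openai_stub,
    "anthropic": call_anthropic_stub,
}

def completion(model: str, messages: List[Message]) -> str:
    """Dispatch a chat request to the adapter named by the 'provider/' prefix."""
    provider, sep, model_name = model.partition("/")
    if not sep or provider not in ADAPTERS:
        raise ValueError(f"unknown or missing provider prefix in {model!r}")
    return ADAPTERS[provider](model_name, messages)

if __name__ == "__main__":
    msgs = [{"role": "user", "content": "Summarize this ticket."}]
    print(completion("openai/small-model", msgs))
    print(completion("anthropic/large-model", msgs))
```

Switching providers then becomes a change to the model string rather than to application code, which is the main convenience a unified layer offers.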

Key Features

- Unified API layer for multiple LLM providers

- Model routing and fallback support

- Usage tracking and budget controls

- Retry and failure handling

- Logging and observability options

- Proxy deployment support

- Developer-friendly configuration

Pros

- Strong developer experience

- Useful for multi-provider routing

- Good fit for startups and platform teams

Cons

- Enterprise governance depends on setup

- Advanced security controls may require additional architecture

- Operational quality depends on deployment and configuration

Platforms / Deployment

- Containers / Python environments / Cloud environments / Kubernetes depending on setup

- Self-hosted / Cloud / Hybrid

Security & Compliance

Compliance: Varies / N/A. Security depends on deployment model, API key handling, authentication, logging configuration, network controls, and retention policies.

Integrations & Ecosystem

LiteLLM fits well into AI application stacks and developer workflows.

- Multiple LLM providers

- Self-hosted model endpoints

- API proxy workflows

- Budget and usage tracking

- Observability integrations depending on setup

- AI application frameworks

Support & Community

LiteLLM is widely adopted by developers building LLM applications and has an active community. Support comes from open-source resources or, where applicable, commercial options.

#2 — OpenRouter

Short Description

OpenRouter is a model routing platform that gives developers access to many different LLMs through a single, OpenAI-compatible API. It is useful for teams that want to compare models, route requests, reduce provider lock-in, and experiment with different commercial and open models. OpenRouter is especially helpful for developers who want quick model access without integrating each provider separately. It is a good fit for prototyping, evaluation, and multi-model application development.
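Because OpenRouter exposes an OpenAI-compatible chat completions endpoint, a request can be built with only the Python standard library. The sketch below constructs, but does not send, such a request. The API key and model ID are placeholders, and the endpoint URL should be checked against OpenRouter's current documentation before use.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

def build_chat_request(api_key: str, model: str, prompt: str,
                       base_url: str = OPENROUTER_URL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    body = {
        "model": model,  # OpenRouter model IDs are typically "provider/model"
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        base_url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

if __name__ == "__main__":
    req = build_chat_request("sk-or-placeholder", "openai/gpt-4o-mini",
                             "Compare these two answers.")
    print(req.get_method(), req.full_url)
    # Sending it requires a real key and network access:
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp)["choices"][0]["message"]["content"])
```

The `base_url` parameter is there because other gateways and some self-hosted model servers accept the same request shape; whether a particular server does should be confirmed in its own documentation.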

Key Features

- Unified access to multiple models

- Model comparison workflows

- Routing across supported providers

- OpenAI-compatible API

- Usage tracking

- Support for different model categories

- Useful for experimentation and production pilots

Pros

- Fast way to test many models

- Reduces integration overhead

- Good for model comparison and prototyping

Cons

- Dependency on a third-party routing layer

- Enterprise controls may not fit every regulated use case

- Data handling requirements should be reviewed carefully

Platforms / Deployment

- Web / API

- Cloud

Security & Compliance

Public documentation may not cover every enterprise compliance requirement. Teams should evaluate data handling, retention, access controls, and security posture before using it for sensitive workloads.

Integrations & Ecosystem

OpenRouter is useful for developer-facing multi-model access.

- Multiple LLM providers

- API-based applications

- Prototyping environments

- Model comparison workflows

- AI coding and chatbot applications

- Evaluation experiments

Support & Community

OpenRouter is well known among developers and is commonly discussed by AI builders testing multiple models. Support expectations should be validated before production use.

#3 — Portkey AI

Short Description

Portkey AI is an AI gateway and observability platform designed to help teams manage LLM applications with routing, logging, monitoring, caching, guardrails, and cost controls. It is useful for production AI teams that need more visibility and governance than direct provider API calls. Portkey focuses on making LLM usage manageable across applications, teams, providers, and environments. It is a strong option for companies building AI products at scale.
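Caching is one of the simpler gateway features to reason about. A minimal exact-match cache, assuming entries are keyed on a hash of the model name and the full message list, might look like the sketch below. It is a generic illustration, not Portkey's implementation, and `call_model` is a hypothetical stand-in for a provider call. The clock is injectable so expiry can be tested.

```python
import hashlib
import json
import time
from typing import Callable, Dict, List, Optional, Tuple

Message = Dict[str, str]

class ResponseCache:
    """Exact-match response cache with a per-entry time-to-live."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}

    @staticmethod
    def key(model: str, messages: List[Message]) -> str:
        # sort_keys makes the key independent of dict insertion order
        payload = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get(self, model: str, messages: List[Message]) -> Optional[str]:
        k = self.key(model, messages)
        entry = self._store.get(k)
        if entry is None:
            return None
        stored_at, value = entry
        if self.clock() - stored_at > self.ttl:
            del self._store[k]  # expired entry
            return None
        return value

    def put(self, model: str, messages: List[Message], value: str) -> None:
        self._store[self.key(model, messages)] = (self.clock(), value)

def cached_completion(cache: ResponseCache,
                      call_model: Callable[[str, List[Message]], str],
                      model: str, messages: List[Message]) -> str:
    """Return a cached response when available, otherwise call the model and store the result."""
    hit = cache.get(model, messages)
    if hit is not None:
        return hit
    result = call_model(model, messages)
    cache.put(model, messages, result)
    return result
```

Exact-match caching only pays off when identical prompts recur, and cached answers can go stale, which is why the TTL matters. Caching user-specific or non-deterministic outputs needs a deliberate policy.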

Key Features

- AI gateway for LLM applications

- Model routing and fallback support

- Prompt and response logging

- Cost and latency tracking

- Caching options

- Guardrails and policy workflows

- Team and workspace management

Pros

- Strong production AI observability

- Useful cost and latency visibility

- Good fit for multi-team AI application management

Cons

- May be more than small prototypes need

- Teams must plan logging and privacy settings carefully

- Pricing and advanced features may vary

Platforms / Deployment

- Web / API / Cloud environments

- Cloud / Hybrid options may vary

Security & Compliance

Security features may vary by plan and deployment. Teams should validate access controls, retention settings, encryption, auditability, and compliance needs before adoption.

Integrations & Ecosystem

Portkey AI fits modern LLM application operations.

- Multiple LLM providers

- Prompt logs

- Observability systems

- Guardrail workflows

- API gateway patterns

- Cost monitoring workflows

Support & Community

Portkey AI provides product resources and support options. It is relevant for teams moving from prototype AI apps to production-grade operations.

#4 — Helicone

Short Description

Helicone is an LLM observability and gateway-style platform that helps teams monitor requests, track costs, analyze latency, debug prompts, and understand production AI usage. It is useful for developers and teams that need visibility into LLM calls without building custom logging from scratch. Helicone is especially valuable for teams that want prompt analytics, user-level usage tracking, and operational insights into AI applications.
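The kind of per-request record an observability layer collects, and the per-user rollup built from it, can be sketched as follows. The field names, model names, and per-1,000-token prices are hypothetical; real prices come from each provider's pricing page, and this is not Helicone's data schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical USD prices per 1,000 tokens: (input, output)
PRICES_PER_1K: Dict[str, Tuple[float, float]] = {
    "small-model": (0.5, 1.5),
    "large-model": (5.0, 15.0),
}

@dataclass
class RequestLog:
    user_id: str
    model: str
    input_tokens: int
    output_tokens: int
    latency_ms: float

    def cost_usd(self) -> float:
        in_price, out_price = PRICES_PER_1K[self.model]
        return (self.input_tokens * in_price + self.output_tokens * out_price) / 1000

def usage_by_user(logs: List[RequestLog]) -> Dict[str, Dict[str, float]]:
    """Roll up request count, total tokens, cost, and mean latency per user."""
    totals: Dict[str, Dict[str, float]] = defaultdict(
        lambda: {"requests": 0, "tokens": 0, "cost_usd": 0.0, "latency_sum": 0.0})
    for log in logs:
        t = totals[log.user_id]
        t["requests"] += 1
        t["tokens"] += log.input_tokens + log.output_tokens
        t["cost_usd"] += log.cost_usd()
        t["latency_sum"] += log.latency_ms
    return {
        user: {
            "requests": t["requests"],
            "tokens": t["tokens"],
            "cost_usd": t["cost_usd"],
            "avg_latency_ms": t["latency_sum"] / t["requests"],
        }
        for user, t in totals.items()
    }
```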

Key Features

- LLM request logging

- Cost and token tracking

- Latency analytics

- Prompt debugging

- User-level usage insights

- Caching and gateway capabilities depending on setup

- Evaluation and monitoring workflows

Pros

- Strong LLM observability

- Useful for debugging production prompts

- Good for cost and usage tracking

Cons

- Routing depth may depend on setup and use case

- Sensitive data logging requires careful configuration

- May require integration planning for larger teams

Platforms / Deployment

- Web / API / Cloud environments

- Cloud / Self-hosted options may vary

Security & Compliance

Security depends on deployment configuration, logging settings, data retention, authentication, and workspace controls. Compliance: Varies / N/A.

Integrations & Ecosystem

Helicone fits AI observability and debugging workflows.

- LLM API monitoring

- Prompt analytics

- Cost dashboards

- User tracking

- Evaluation workflows

- Application logging pipelines

Support & Community

Helicone is known in the developer community and provides support resources. It is particularly useful for teams that want to set up practical LLM monitoring quickly.

#5 — Kong AI Gateway

Short Description

Kong AI Gateway extends API gateway patterns into AI and LLM traffic management. It is useful for organizations that already treat APIs as governed infrastructure and want to apply similar control to LLM usage. Kong AI Gateway can help teams manage authentication, routing, rate limiting, observability, security policies, and model provider access through a gateway layer. It is especially relevant for enterprises with established API platform practices.
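Per-consumer rate limiting is a standard gateway pattern. The sketch below shows a minimal token bucket; it is a generic illustration rather than Kong configuration, and the capacity and refill rate are arbitrary example values. The clock is injectable so the behavior can be tested deterministically.

```python
import time
from typing import Callable, Dict, Tuple

class TokenBucketLimiter:
    """Per-consumer token bucket: bursts up to `capacity`, refilled at `rate` per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        # consumer -> (tokens remaining, time of last update)
        self._buckets: Dict[str, Tuple[float, float]] = {}

    def allow(self, consumer: str, cost: float = 1.0) -> bool:
        """Spend `cost` tokens for this consumer if available; return whether the request is allowed."""
        now = self.clock()
        tokens, last = self._buckets.get(consumer, (self.capacity, now))
        # Refill according to elapsed time, never above capacity
        tokens = min(self.capacity, tokens + (now - last) * self.rate)
        if tokens >= cost:
            self._buckets[consumer] = (tokens - cost, now)
            return True
        self._buckets[consumer] = (tokens, now)
        return False
```

The `cost` argument lets the same bucket meter LLM tokens instead of requests, which is closer to how LLM traffic is usually budgeted.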

Key Features

- API gateway-style LLM traffic control

- Model provider routing

- Authentication and access policy support

- Rate limiting and quotas

- Observability and traffic management

- Plugin-based extensibility

- Enterprise gateway workflows

Pros

- Strong fit for API-first enterprises

- Useful governance and traffic control patterns

- Works well where gateway architecture is already standard

Cons

- May be too infrastructure-heavy for small teams

- Requires API gateway expertise

- LLM-specific depth depends on configuration and ecosystem

Platforms / Deployment

- Containers / Kubernetes / Cloud environments

- Self-hosted / Cloud / Hybrid

Security & Compliance

Security features depend on gateway configuration, enterprise plan, authentication setup, network controls, logging, and policy management. Compliance: Varies / N/A.

Integrations & Ecosystem

Kong AI Gateway fits enterprise API and AI infrastructure.

- API gateway workflows

- LLM providers

- Kubernetes

- Authentication systems

- Observability tools

- Enterprise traffic policies

Support & Community

Kong has strong API gateway recognition, documentation, and enterprise support options. Its AI gateway is especially relevant for platform and infrastructure teams.

#6 — Apigee API Management for AI Workloads

Short Description

Apigee can be used as an enterprise API management layer for AI and LLM workloads, especially in organizations that already use API management for governance, security, developer access, and analytics. While it is not a dedicated LLM gateway, it can help enterprises manage AI APIs, enforce policies, apply quotas, monitor usage, and expose model-backed services securely. It is best suited for large organizations with mature API governance needs.
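At its simplest, the policy side of exposing model-backed APIs is a lookup: which API key may call which model. Apigee handles this through its own API product and policy configuration; the sketch below only illustrates the underlying check in plain Python, and the product names, keys, and model names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet

@dataclass(frozen=True)
class ApiProduct:
    name: str
    allowed_models: FrozenSet[str]

@dataclass
class AccessPolicy:
    # Hypothetical mapping of issued API keys to the product they belong to
    key_to_product: Dict[str, ApiProduct] = field(default_factory=dict)

    def authorize(self, api_key: str, model: str) -> None:
        """Raise PermissionError unless the key exists and its product allows the model."""
        product = self.key_to_product.get(api_key)
        if product is None:
            raise PermissionError("unknown API key")
        if model not in product.allowed_models:
            raise PermissionError(f"product {product.name!r} may not call {model!r}")
```

In a managed API platform, a check like this sits alongside authentication, quotas, and analytics rather than replacing them.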

Key Features

- API management and gateway control

- Authentication and authorization policies

- Quotas and rate limits

- Analytics and monitoring

- Developer portal workflows

- Traffic management

- Enterprise governance patterns

Pros

- Strong enterprise API governance

- Useful for exposing AI services securely

- Good fit for organizations already using API management

Cons

- Not a dedicated LLM routing platform by default

- Requires enterprise API management expertise

- Model-specific observability may need additional tools

Platforms / Deployment

- Cloud / Hybrid depending on environment

- Managed / Hybrid

Security & Compliance

Security and compliance depend on enterprise configuration, identity policies, cloud setup, logging, retention, and governance controls. Compliance: Varies / N/A.

Integrations & Ecosystem

Apigee fits enterprise API and AI service exposure workflows.

- API products

- Developer portals

- Authentication systems

- Quotas and policies

- Cloud monitoring

- Enterprise integration workflows

Support & Community

Apigee has enterprise support resources and broad API management adoption. It is most relevant for organizations that want AI services managed through established API governance.

#7 — Zuplo

Short Description

Zuplo is an API gateway and developer-focused API management platform that can support AI API routing, access control, rate limiting, and edge-style API workflows. It is useful for teams that want a lightweight, developer-friendly gateway layer for APIs, including LLM-backed services. Zuplo is especially relevant for startups and engineering teams that want quick API policy control without the operational weight of traditional enterprise gateways.

Key Features

- API gateway workflows

- Request routing

- Rate limiting

- Authentication policies

- Developer-friendly configuration

- Edge-style API control

- Observability and logging features

Pros

- Developer-friendly gateway experience

- Useful for lightweight AI API control

- Good for startups and product teams

Cons

- Not exclusively focused on LLM routing

- Advanced AI observability may require additional tools

- Enterprise governance depth should be validated

Platforms / Deployment

- Web / API / Cloud environments

- Cloud / Edge-oriented depending on setup

Security & Compliance

Security depends on authentication configuration, gateway rules, logging, access control, and deployment setup. Compliance: Varies / N/A.

Integrations & Ecosystem

Zuplo fits modern API and AI application workflows.

- API routing

- Authentication systems

- Rate limits

- Developer portals depending on setup

- AI-backed API services

- Product API workflows

Support & Community

Zuplo provides documentation and developer resources. It is relevant for teams that want flexible API gateway control without heavy platform overhead.

#8 — IBM watsonx.governance

Short Description

IBM watsonx.governance is an AI governance platform that helps organizations manage risk, compliance, lifecycle visibility, and governance around AI models and generative AI systems. While it is not a model routing gateway, it is relevant for enterprise teams that need oversight, documentation, policies, and governance for AI deployments. It can support organizations trying to bring structure and accountability to enterprise AI usage.

Key Features

- AI governance workflows

- Model lifecycle documentation

- Risk and compliance support

- Governance dashboards

- Policy management

- AI use case tracking

- Enterprise AI oversight

Pros

- Strong enterprise governance focus

- Useful for regulated AI environments

- Helps formalize AI risk management

Cons

- Not a direct lightweight LLM routing proxy

- Best suited for enterprise governance teams

- May need other tools for real-time gateway routing

Platforms / Deployment

- Cloud / Enterprise environments depending on setup

- Managed / Hybrid options may vary

Security & Compliance

Designed for enterprise governance workflows, but specific compliance outcomes depend on configuration, organizational policies, deployment, and regulatory context.

Integrations & Ecosystem

watsonx.governance fits enterprise AI management workflows.

- AI governance programs

- Model lifecycle processes

- Risk documentation

- Compliance workflows

- Enterprise AI platforms

- Audit and oversight processes

Support & Community

IBM provides enterprise support and documentation. This platform is most relevant for larger organizations with formal AI governance requirements.

#9 — Langfuse

Short Description

Langfuse is an open-source LLM engineering and observability platform that helps teams trace, monitor, evaluate, and debug LLM applications. It is not a model routing gateway, but it is highly relevant in LLM platform stacks because routing decisions depend on observability, evaluations, traces, and feedback loops. Langfuse is useful for developers building chatbots, agents, RAG systems, and production AI applications that require transparency into prompt chains and model behavior.
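Tracing records each step of a request as a timed span nested under a parent. The standard-library sketch below shows the idea for a two-step RAG request; it is not Langfuse's SDK, and the span names and the placeholder retrieval and generation steps are hypothetical.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Iterator, List, Optional

@dataclass(eq=False)
class Span:
    name: str
    parent: Optional["Span"] = field(default=None, repr=False)
    start: float = 0.0
    end: float = 0.0
    children: List["Span"] = field(default_factory=list)

    @property
    def duration_ms(self) -> float:
        return (self.end - self.start) * 1000

class Tracer:
    def __init__(self) -> None:
        self.roots: List[Span] = []
        self._current: Optional[Span] = None

    @contextmanager
    def span(self, name: str) -> Iterator[Span]:
        """Open a span nested under whichever span is currently active."""
        s = Span(name=name, parent=self._current, start=time.perf_counter())
        if self._current is None:
            self.roots.append(s)
        else:
            self._current.children.append(s)
        self._current = s
        try:
            yield s
        finally:
            s.end = time.perf_counter()
            self._current = s.parent

def answer_question(tracer: Tracer, question: str) -> str:
    with tracer.span("rag-request"):
        with tracer.span("retrieve"):
            docs = ["doc-1", "doc-2"]  # placeholder for a vector search
        with tracer.span("generate"):
            # placeholder for an LLM call
            return f"answer to {question!r} using {len(docs)} docs"
```

Observability platforms attach inputs, outputs, token counts, and scores to spans like these, which is what makes it possible to see which step of a chain was slow or produced a bad result.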

Key Features

- LLM tracing

- Prompt and generation observability

- Evaluation workflows

- User feedback tracking

- Debugging for LLM applications

- Open-source deployment option

- Support for agent and RAG monitoring patterns

Pros

- Strong observability for LLM applications

- Useful for evaluation and debugging

- Open-source option supports flexibility

Cons

- Not primarily a routing gateway

- Routing must be handled by another layer or custom logic

- Requires careful logging configuration for sensitive data

Platforms / Deployment

- Web / Containers / Cloud environments

- Cloud / Self-hosted

Security & Compliance

Security depends on deployment, logging controls, authentication, data retention, access control, and hosting configuration. Compliance: Varies / N/A.

Integrations & Ecosystem

Langfuse fits LLM application engineering workflows.

- LLM application tracing

- RAG systems

- Agent workflows

- Evaluation pipelines

- Prompt management workflows

- Observability dashboards

Support & Community

Langfuse has open-source community adoption and developer-focused documentation. It is especially useful for teams serious about LLM observability and evaluation.

#10 — PromptLayer

Short Description

PromptLayer is a prompt management and observability platform for teams building LLM applications. It helps teams track prompt versions, monitor requests, inspect model outputs, and improve production prompt workflows. While it is not a full model router, it plays an important role in the LLM gateway ecosystem because prompt governance and observability are essential for production AI systems. It is useful for teams that want to manage prompt changes more carefully.
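Treating prompts as versioned artifacts is the core idea. The minimal in-memory registry below is hypothetical and is not PromptLayer's API; it shows how an application can either pin a specific prompt version or follow the latest one.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: int
    template: str

class PromptRegistry:
    """In-memory registry that keeps every published version of each named prompt."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[PromptVersion]] = {}

    def publish(self, name: str, template: str) -> PromptVersion:
        history = self._versions.setdefault(name, [])
        pv = PromptVersion(name, len(history) + 1, template)
        history.append(pv)
        return pv

    def get(self, name: str, version: Optional[int] = None) -> PromptVersion:
        """Return a pinned version, or the latest one when version is None."""
        history = self._versions.get(name)
        if not history:
            raise KeyError(f"no prompt named {name!r}")
        if version is None:
            return history[-1]
        if not 1 <= version <= len(history):
            raise KeyError(f"{name!r} has no version {version}")
        return history[version - 1]

    def render(self, name: str, version: Optional[int] = None, **values: str) -> str:
        return self.get(name, version).template.format(**values)
```

Pinning lets a production service stay on a known-good prompt while a new version is evaluated, which is the "prompt changes as production changes" discipline described below.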

Key Features

- Prompt tracking

- Prompt version management

- LLM request logging

- Output inspection

- Collaboration for prompt workflows

- Monitoring and debugging

- Evaluation support depending on setup

Pros

- Strong for prompt version control

- Useful for debugging prompt changes

- Good for product teams managing LLM behavior

Cons

- Not a complete model routing gateway by itself

- Sensitive prompt logging requires policy planning

- May need to be paired with gateway or observability tools

Platforms / Deployment

- Web / API

- Cloud

Security & Compliance

Security details may vary by plan. Teams should validate data retention, logging controls, access permissions, and compliance requirements before production use.

Integrations & Ecosystem

PromptLayer fits prompt engineering and LLM application workflows.

- Prompt versioning

- Request logging

- Output review

- Product experimentation

- Evaluation workflows

- LLM application development

Support & Community

PromptLayer provides documentation and product support resources. It is most useful for teams that treat prompt changes as production changes.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LiteLLM | Developer-friendly multi-model routing | Containers, Python environments, Kubernetes | Self-hosted / Cloud / Hybrid | Unified LLM API and proxy routing | N/A |
| OpenRouter | Fast access to many models | Web, API | Cloud | Multi-model access through one platform | N/A |
| Portkey AI | Production AI gateway and observability | Web, API, Cloud environments | Cloud / Hybrid | Routing, logging, cost, and guardrails | N/A |
| Helicone | LLM observability and usage tracking | Web, API, Cloud environments | Cloud / Self-hosted options may vary | Prompt, cost, and latency monitoring | N/A |
| Kong AI Gateway | Enterprise API gateway for AI traffic | Containers, Kubernetes, Cloud environments | Self-hosted / Cloud / Hybrid | Gateway policies for LLM traffic | N/A |
| Apigee API Management for AI Workloads | Enterprise AI API governance | Cloud, Hybrid environments | Managed / Hybrid | API governance and policy control | N/A |
| Zuplo | Developer-friendly API gateway for AI APIs | Web, API, Cloud environments | Cloud / Edge-oriented | Lightweight API control for AI services | N/A |
| IBM watsonx.governance | Enterprise AI governance | Cloud, Enterprise environments | Managed / Hybrid options may vary | AI risk and governance workflows | N/A |
| Langfuse | LLM tracing and evaluation | Web, Containers, Cloud environments | Cloud / Self-hosted | Open-source LLM observability | N/A |
| PromptLayer | Prompt tracking and prompt governance | Web, API | Cloud | Prompt versioning and request tracking | N/A |

Evaluation & Scoring of LLM Gateways and Model Routing Platforms

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| LiteLLM | 9 | 8 | 9 | 7 | 8 | 8 | 9 | 8.45 |
| OpenRouter | 8 | 9 | 8 | 7 | 8 | 7 | 8 | 7.95 |
| Portkey AI | 9 | 8 | 8 | 8 | 8 | 8 | 8 | 8.25 |
| Helicone | 8 | 8 | 8 | 7 | 8 | 8 | 8 | 7.90 |
| Kong AI Gateway | 8 | 6 | 9 | 9 | 8 | 9 | 7 | 7.90 |
| Apigee API Management for AI Workloads | 7 | 6 | 9 | 9 | 8 | 9 | 7 | 7.65 |
| Zuplo | 7 | 8 | 8 | 8 | 8 | 7 | 8 | 7.65 |
| IBM watsonx.governance | 7 | 6 | 8 | 9 | 7 | 9 | 7 | 7.40 |
| Langfuse | 8 | 8 | 8 | 7 | 8 | 8 | 9 | 8.05 |
| PromptLayer | 7 | 8 | 7 | 7 | 7 | 7 | 8 | 7.30 |

The scoring is comparative and should be used as a shortlist guide, not as a universal ranking. LiteLLM is strong for developer-friendly routing, Portkey AI is strong for production gateway and observability workflows, and Kong or Apigee may fit enterprises that already use API gateway governance. Langfuse and PromptLayer are stronger for observability and prompt management than pure model routing.
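Each weighted total is the sum of every column score multiplied by that column's weight. The short sketch below computes it in integer hundredths to avoid floating-point rounding; the two example rows are the LiteLLM and Kong AI Gateway scores from the table.

```python
from typing import Dict, List

# Column weights from the scoring table, in percent (they sum to 100)
WEIGHTS: Dict[str, int] = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}

def weighted_total_hundredths(scores: Dict[str, int]) -> int:
    """Return the weighted total on the 0-10 scale, multiplied by 100."""
    assert sum(WEIGHTS.values()) == 100
    return sum(scores[column] * weight for column, weight in WEIGHTS.items())

def format_total(hundredths: int) -> str:
    return f"{hundredths // 100}.{hundredths % 100:02d}"

ROWS: Dict[str, List[int]] = {
    # core, ease, integrations, security, performance, support, value
    "LiteLLM": [9, 8, 9, 7, 8, 8, 9],
    "Kong AI Gateway": [8, 6, 9, 9, 8, 9, 7],
}

if __name__ == "__main__":
    for tool, values in ROWS.items():
        scores = dict(zip(WEIGHTS, values))
        print(tool, format_total(weighted_total_hundredths(scores)))
```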

Which LLM Gateway or Model Routing Platform Is Right for You?

Solo / Freelancer

Solo developers and freelancers should start with lightweight tools that reduce integration complexity. LiteLLM is a strong choice when working with multiple model providers. OpenRouter can help quickly test different models without building many integrations.

If the main need is debugging prompts and tracking usage, Helicone, Langfuse, or PromptLayer may be more useful than a heavy gateway. Avoid overengineering while the app is still at the early prototype stage.

SMB

Small businesses building AI features should prioritize cost control, provider flexibility, and basic observability. LiteLLM, Portkey AI, Helicone, and OpenRouter are practical options depending on whether the team wants self-managed routing or a managed model access layer.

SMBs should focus on simple routing rules, fallback models, token usage dashboards, and safe API key management before adding complex governance workflows.

Mid-Market

Mid-market teams often need multiple environments, team-level cost tracking, fallback routing, monitoring, prompt versioning, and model comparison. Portkey AI, LiteLLM, Helicone, Langfuse, and Kong AI Gateway may be strong candidates depending on infrastructure maturity.

If the company already has an API platform team, Kong or Zuplo may fit well. If the AI team wants LLM-specific observability and evaluation, Langfuse and Helicone should be considered.

Enterprise

Enterprises should prioritize governance, security, identity integration, auditability, policy enforcement, cost controls, and data retention. Kong AI Gateway, Apigee, IBM watsonx.governance, Portkey AI, and self-hosted observability stacks may be relevant.

Enterprise buyers should not choose only by routing features. They should validate access control, logging rules, sensitive data handling, model provider risk, audit trails, approval workflows, and compliance alignment.

Budget vs Premium

Budget-focused teams can start with open-source or lightweight options such as LiteLLM and Langfuse, depending on the need. However, self-hosted tools still require engineering time, monitoring, upgrades, and secure deployment.

Premium platforms may be worth it when AI traffic grows, costs need governance, multiple teams use models, and compliance or observability becomes mandatory.

Feature Depth vs Ease of Use

For ease of use, OpenRouter, Helicone, PromptLayer, and Zuplo are more approachable for fast setup. They are useful when teams want quick integration and visibility.

For feature depth, LiteLLM, Portkey AI, Kong AI Gateway, Apigee, and enterprise governance platforms offer stronger control, routing, policy, and organizational management.

Integrations & Scalability

LiteLLM is strong when teams need broad model provider compatibility. Portkey AI is useful when routing, observability, cost, and policy controls are needed together. Kong and Apigee scale well when AI APIs must follow enterprise API governance patterns.

Scalability depends on request volume, provider limits, latency requirements, retry behavior, observability costs, logging volume, and internal access policies.

Security & Compliance Needs

Security-focused teams should evaluate API key handling, request logging, prompt retention, data masking, authentication, authorization, encryption, audit logs, and network isolation.

For sensitive workloads, avoid logging raw prompts unless retention and access policies are clear. The gateway should support secure routing without exposing confidential customer, employee, or business data unnecessarily.
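Masking obvious identifiers before a prompt reaches the log store is one way to apply this. The regular expressions below are a deliberately simple, hypothetical sketch that only catches email addresses and runs of ten or more digits; production redaction usually needs a dedicated PII detection approach tested against real data.

```python
import re
from typing import List, Tuple

# Simplified patterns: they will miss many real-world formats
REDACTION_RULES: List[Tuple[re.Pattern, str]] = [
    (re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"), "[EMAIL]"),
    (re.compile(r"\b(?:\d[ -]?){9,}\d\b"), "[NUMBER]"),
]

def redact(text: str) -> str:
    """Replace matches of each rule with a placeholder before the text is logged."""
    for pattern, placeholder in REDACTION_RULES:
        text = pattern.sub(placeholder, text)
    return text
```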

Frequently Asked Questions

1. What is an LLM gateway?

An LLM gateway is a centralized layer between applications and large language model providers. It helps route requests, manage API keys, monitor usage, apply policies, control costs, and improve reliability.

2. What is model routing?

Model routing means sending each AI request to the most suitable model based on cost, quality, latency, availability, task type, or business rules. For example, a simple summarization task may go to a cheaper model, while complex reasoning may go to a stronger model.
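A rule-based router of this kind can be sketched in a few lines. The task labels, the prompt-length threshold, and the model names are hypothetical; in practice the task type might be supplied by the calling application or by a lightweight classifier.

```python
from dataclasses import dataclass

CHEAP_MODEL = "small-model"     # hypothetical low-cost model
STRONG_MODEL = "large-model"    # hypothetical higher-quality model
SIMPLE_TASKS = {"summarize", "classify", "extract"}
MAX_CHEAP_PROMPT_CHARS = 8000   # hypothetical threshold

@dataclass
class Request:
    task: str
    prompt: str

def choose_model(req: Request) -> str:
    """Send short prompts for simple tasks to the cheap model, everything else to the strong one."""
    if req.task in SIMPLE_TASKS and len(req.prompt) <= MAX_CHEAP_PROMPT_CHARS:
        return CHEAP_MODEL
    return STRONG_MODEL
```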

3. Why do teams need an LLM gateway?

Teams need an LLM gateway when they use multiple providers, want cost visibility, need fallback models, require centralized security controls, or want consistent observability across AI applications.

4. What is fallback routing?

Fallback routing automatically sends a request to another model or provider if the first one fails, times out, becomes rate-limited, or returns an unacceptable response. This improves reliability for production AI systems.
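Fallback routing can be expressed as an ordered list of models tried in turn. In the sketch below, `call_model` is a hypothetical function that raises on failure, and `ProviderError` is an invented exception type standing in for timeouts, rate-limit responses, server errors, or a response a validator rejected. The retry count and backoff are arbitrary.

```python
import time
from typing import Callable, List, Tuple

class ProviderError(Exception):
    """Hypothetical error for timeouts, rate limits, 5xx responses, or rejected output."""

def complete_with_fallback(call_model: Callable[[str, str], str], prompt: str,
                           models: List[str], retries_per_model: int = 1,
                           backoff_seconds: float = 0.0) -> Tuple[str, str]:
    """Try each model in order; return (model_used, response) from the first success."""
    errors: List[str] = []
    for model in models:
        for attempt in range(retries_per_model + 1):
            try:
                return model, call_model(model, prompt)
            except ProviderError as exc:
                errors.append(f"{model} attempt {attempt + 1}: {exc}")
                # Exponential backoff, only if another attempt on this model remains
                if backoff_seconds and attempt < retries_per_model:
                    time.sleep(backoff_seconds * (2 ** attempt))
    raise ProviderError("all models failed: " + "; ".join(errors))
```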

5. Can an LLM gateway reduce AI costs?

Yes. A gateway can reduce cost by routing simple tasks to cheaper models, caching repeated requests, tracking token usage, enforcing budgets, and showing teams which applications consume the most AI spend.
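Budget enforcement comes down to comparing accumulated spend against a limit before forwarding a request. The minimal sketch below assumes the gateway can estimate a request's cost up front and records the actual cost afterwards; team names and amounts are hypothetical, and spend is kept in integer cents to avoid rounding drift.

```python
from typing import Dict

class BudgetExceeded(Exception):
    pass

class BudgetTracker:
    """Tracks spend per team, in integer cents, against a fixed budget."""

    def __init__(self, budgets_cents: Dict[str, int]) -> None:
        self.budgets = dict(budgets_cents)
        self.spent: Dict[str, int] = {team: 0 for team in budgets_cents}

    def check(self, team: str, estimated_cents: int) -> None:
        """Raise BudgetExceeded if the estimated cost would push the team over budget."""
        if self.spent[team] + estimated_cents > self.budgets[team]:
            raise BudgetExceeded(f"{team} would exceed its budget")

    def record(self, team: str, actual_cents: int) -> None:
        self.spent[team] += actual_cents

    def remaining(self, team: str) -> int:
        return self.budgets[team] - self.spent[team]
```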

6. What is the difference between an LLM gateway and LLM observability?

An LLM gateway controls traffic between applications and models. LLM observability tracks what happens during requests, including prompts, responses, latency, cost, errors, and user behavior. Many platforms combine both, but they are not always the same.

7. Is an API gateway enough for LLM routing?

A traditional API gateway can handle authentication, quotas, and routing, but LLM workloads often need token tracking, prompt logging, model fallback, cost analysis, and evaluation workflows. For production AI workloads, these LLM-specific features are usually worth adding, either in the gateway itself or through companion tools.

8. What is prompt logging?

Prompt logging records inputs, outputs, metadata, latency, token usage, and errors from LLM requests. It helps teams debug issues and improve quality, but sensitive data must be handled carefully.

9. Are LLM gateways safe for sensitive data?

They can be safe when configured properly. Teams must review logging, data retention, encryption, access controls, masking, provider routing, and compliance policies before sending sensitive data through a gateway.

10. What is the biggest mistake teams make with LLM routing?

The biggest mistake is routing only by cost. Cheap models may reduce spend but harm quality if used for complex tasks. Good routing balances cost, quality, latency, reliability, and risk.
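One way to avoid routing on cost alone is to score each candidate on several criteria and require a minimum quality. The candidate figures and weights below are invented for illustration; real values would come from evaluations, provider pricing, and latency monitoring.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class Candidate:
    name: str
    quality: float      # 0-1, e.g. from an offline evaluation set
    cost: float         # normalized 0-1, lower is cheaper
    latency: float      # normalized 0-1, lower is faster
    reliability: float  # 0-1, e.g. recent success rate

def pick_model(candidates: List[Candidate], weights: Dict[str, float],
               min_quality: float) -> str:
    """Return the best-scoring candidate among those that meet the quality floor."""
    eligible = [c for c in candidates if c.quality >= min_quality]
    if not eligible:
        raise ValueError("no candidate meets the quality floor")

    def score(c: Candidate) -> float:
        return (weights["quality"] * c.quality
                + weights["cost"] * (1 - c.cost)
                + weights["latency"] * (1 - c.latency)
                + weights["reliability"] * c.reliability)

    return max(eligible, key=score).name
```

The quality floor is what stops a cheap model from winning on tasks it cannot handle, even when its cost and latency scores are better.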

11. Can LLM gateways support open-source models?

Yes, many gateways can route requests to both hosted commercial models and self-hosted open-source models. The exact setup depends on provider compatibility, endpoint format, and infrastructure design.

12. How should I choose an LLM gateway?

Start by identifying your main pain point. Choose LiteLLM for flexible multi-model routing, Portkey AI for production gateway and observability, Helicone or Langfuse for monitoring, Kong or Apigee for enterprise API governance, and PromptLayer for prompt tracking.

Conclusion

LLM gateways and model routing platforms are becoming essential infrastructure for production AI applications. They help teams move beyond direct model API calls by adding routing, fallback, monitoring, cost control, governance, and security. The best platform depends on your architecture and maturity. LiteLLM is strong for developer-friendly multi-model routing, OpenRouter is useful for fast model access and experimentation, Portkey AI combines gateway and observability capabilities, and Helicone helps teams monitor usage and cost. Kong AI Gateway, Apigee, and enterprise governance platforms fit larger organizations with API governance and compliance needs. Langfuse and PromptLayer support observability, evaluation, and prompt lifecycle workflows.