Introduction

AI evaluation and benchmarking frameworks help teams test, compare, measure, and improve the quality of machine learning models, large language models, retrieval systems, chatbots, agents, and AI applications. These tools are used to check whether an AI system is accurate, reliable, safe, consistent, cost-effective, and suitable for production use.

AI evaluation matters because building an AI model or LLM application is not enough. Teams must know whether the system gives correct answers, follows instructions, avoids unsafe outputs, handles edge cases, performs well across datasets, and stays reliable after changes. Without evaluation, teams may ship AI features that look impressive in demos but fail in real customer scenarios.

Common use cases include:

- Testing LLM response quality

- Comparing different AI models

- Evaluating RAG and chatbot answers

- Measuring hallucination risk

- Running regression tests on prompts

- Benchmarking model accuracy and latency

- Testing safety, fairness, and robustness

- Monitoring quality across production versions

- Comparing cost versus output quality

- Creating repeatable AI testing pipelines

Buyers should evaluate:

- Supported model types and providers

- LLM evaluation capabilities

- RAG evaluation support

- Benchmark dataset support

- Custom metric creation

- Human and automated evaluation workflows

- CI/CD and MLOps integration

- Experiment tracking

- Reporting and dashboards

- Security, privacy, and governance controls

Best for: AI engineers, ML engineers, data scientists, MLOps teams, LLMOps teams, AI product teams, platform engineers, QA teams, researchers, and enterprises building production AI applications.

Not ideal for: Teams only doing early experiments without real users, production requirements, model comparisons, safety concerns, or quality regression needs.

Key Trends in AI Evaluation & Benchmarking Frameworks

- LLM-specific evaluation: Traditional accuracy metrics are not enough for generative AI, so teams now evaluate helpfulness, faithfulness, instruction following, tone, safety, and reasoning quality.

- RAG evaluation growth: Retrieval-augmented generation systems need evaluation for context relevance, answer correctness, citation quality, and hallucination risk.

- Automated eval pipelines: AI teams increasingly run evaluations automatically before releasing prompt, model, dataset, or retrieval changes.

- Human-in-the-loop review: Human judgment remains important for subjective tasks such as tone, usefulness, creativity, and domain correctness.

- Model comparison workflows: Teams compare multiple models based on cost, speed, quality, safety, and task fit.

- Regression testing for prompts: Prompt changes can break quality, so prompt evaluation is becoming similar to software testing.

- Safety and red-team testing: Enterprises need to evaluate harmful outputs, jailbreak resistance, privacy leakage, and policy compliance.

- Production monitoring integration: Evaluation is moving from offline notebooks into live quality monitoring and observability workflows.

- Custom metrics: Teams want to define domain-specific metrics instead of relying only on generic benchmarks.

- Cost-quality benchmarking: Teams are not only asking which model is best, but which model is good enough at the lowest acceptable cost.

How We Selected These Tools

The frameworks below were selected based on practical usefulness, recognition among AI teams, support for evaluation workflows, LLM and ML relevance, integration options, and fit across different maturity levels.

The evaluation considered:

- Market adoption and developer mindshare

- Support for LLM, ML, and RAG evaluation

- Ease of creating custom evaluation tests

- Benchmarking and comparison features

- Experiment tracking and reporting support

- Integration with CI/CD and MLOps workflows

- Human and automated evaluation options

- Support for production monitoring patterns

- Fit for startups, SMBs, mid-market teams, and enterprises

- Usefulness for research, product, and operational AI teams

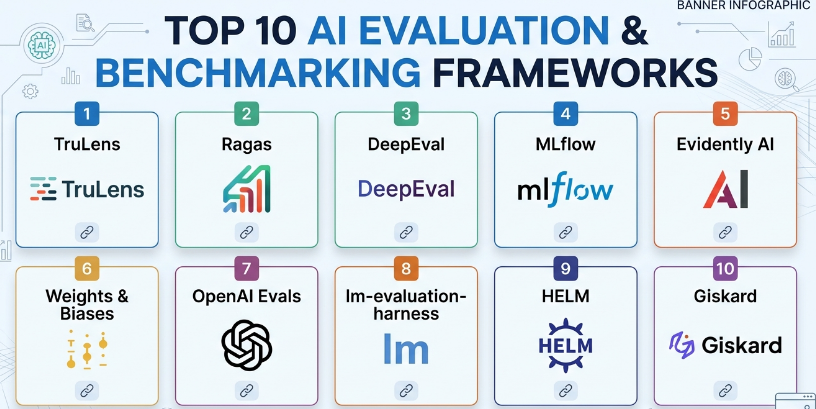

Top 10 AI Evaluation & Benchmarking Frameworks

#1 — TruLens

Short description :

TruLens is an evaluation and observability framework focused on testing and improving LLM applications, especially retrieval-augmented generation systems. It helps teams measure answer relevance, context relevance, groundedness, and other quality signals. TruLens is useful for AI engineers building chatbots, RAG pipelines, and LLM-powered applications where correctness and transparency matter. Its strength is helping teams understand why an AI response is good or weak.

Key Features

- LLM application evaluation

- RAG evaluation support

- Feedback functions for custom metrics

- Groundedness and relevance checks

- Experiment comparison

- Application tracing

- Quality monitoring workflows

Pros

- Strong for RAG and LLM application evaluation

- Useful for debugging response quality

- Good fit for AI engineers building production prototypes

Cons

- Requires evaluation design knowledge

- May need additional tools for full enterprise governance

- Metric quality depends on how feedback functions are configured

Platforms / Deployment

- Python / Notebooks / Application environments

- Local / Cloud / Hybrid depending on setup

Security & Compliance

Compliance is Varies / N/A. Security depends on deployment, data storage, prompt logging, model provider usage, access controls, and retention configuration.

Integrations & Ecosystem

TruLens fits modern LLM application development workflows.

- RAG pipelines

- LLM application frameworks

- Python evaluation workflows

- Experiment tracking patterns

- Observability workflows

- Custom feedback functions

Support & Community

TruLens has documentation and a developer community around LLM evaluation and RAG quality testing. It is suitable for teams that want practical evaluation inside AI development workflows.

#2 — Ragas

Short description :

Ragas is an evaluation framework designed specifically for retrieval-augmented generation applications. It helps teams evaluate whether retrieved context is relevant, whether answers are faithful to the context, and whether responses are useful. Ragas is especially valuable for teams building AI assistants, knowledge base chatbots, document Q&A systems, and enterprise search systems. It helps measure quality beyond simple answer matching.

Key Features

- RAG-focused evaluation

- Faithfulness scoring

- Answer relevance checks

- Context precision and recall style evaluation

- Synthetic test data support depending on setup

- Dataset-based evaluation workflows

- Python-friendly integration

Pros

- Strong fit for RAG systems

- Useful for detecting hallucination and weak retrieval

- Practical for document Q&A and chatbot testing

Cons

- Focused mainly on RAG workflows

- Requires good test data for meaningful results

- Evaluation quality depends on model and metric configuration

Platforms / Deployment

- Python / Notebooks / AI application environments

- Local / Cloud / Hybrid depending on setup

Security & Compliance

Compliance is Varies / N/A. Security depends on evaluation data handling, model provider usage, logging, storage, and access controls.

Integrations & Ecosystem

Ragas works well with RAG development stacks.

- Retrieval pipelines

- Vector database workflows

- LLM application frameworks

- Python evaluation notebooks

- Test datasets

- AI quality regression workflows

Support & Community

Ragas has strong developer awareness in the RAG evaluation space. Community support is useful for teams building document-based AI applications.

#3 — DeepEval

Short description :

DeepEval is an LLM evaluation framework that helps teams write tests for AI applications in a way similar to software testing. It supports metrics for correctness, faithfulness, relevance, hallucination, bias, toxicity, and other LLM quality dimensions. DeepEval is useful for developers who want to add automated AI evaluations into development and CI/CD workflows. It is especially helpful when teams need repeatable tests for prompts, agents, and RAG systems.

Key Features

- LLM unit testing style workflow

- RAG and chatbot evaluation

- Hallucination and faithfulness metrics

- Bias and toxicity checks

- Custom metric support

- CI/CD-friendly testing

- Dataset-based evaluation

Pros

- Developer-friendly testing approach

- Good for automated regression testing

- Supports multiple LLM quality dimensions

Cons

- Requires thoughtful test case design

- Automated scores should be validated with human review

- Complex AI behavior may need custom metrics

Platforms / Deployment

- Python / CI/CD environments / Application testing workflows

- Local / Cloud / Hybrid depending on setup

Security & Compliance

Compliance is Varies / N/A. Security depends on where test data, prompts, outputs, logs, and model responses are processed and stored.

Integrations & Ecosystem

DeepEval fits software engineering and LLMOps workflows.

- CI/CD pipelines

- LLM applications

- RAG systems

- Agent testing

- Prompt regression testing

- Custom metric workflows

Support & Community

DeepEval has growing developer adoption among teams testing LLM applications. It is especially relevant for teams treating AI quality as part of software quality engineering.

#4 — MLflow

Short description :

MLflow is a widely used machine learning lifecycle platform that supports experiment tracking, model packaging, model registry workflows, and evaluation patterns. While it is broader than AI evaluation alone, it is useful for comparing models, tracking metrics, storing artifacts, and managing model versions. MLflow is especially valuable for teams that want evaluation results connected to the full ML lifecycle. It supports both traditional ML and modern AI workflows depending on setup.

Key Features

- Experiment tracking

- Metric and artifact logging

- Model registry

- Model comparison

- Evaluation result tracking

- Multi-framework support

- Integration with MLOps workflows

Pros

- Strong for end-to-end ML lifecycle tracking

- Useful for comparing experiments and model versions

- Broad adoption across ML teams

Cons

- Not only focused on LLM-specific evaluation

- Advanced governance may require additional tooling

- Serving and monitoring depend on deployment architecture

Platforms / Deployment

- Python / Web UI / Cloud environments / Containers

- Self-hosted / Cloud / Hybrid

Security & Compliance

Security depends on deployment, authentication, artifact storage, access controls, network configuration, and platform setup. Compliance is Varies / N/A.

Integrations & Ecosystem

MLflow fits broad ML and MLOps workflows.

- Experiment tracking

- Model registry

- Model packaging

- CI/CD workflows

- Cloud ML platforms

- Evaluation metric logging

Support & Community

MLflow has a large community, broad documentation, and strong adoption across ML engineering and data science teams.

#5 — Evidently AI

Short description :

Evidently AI is a model evaluation, monitoring, and data quality platform focused on ML models, data drift, model performance, and production monitoring. It helps teams evaluate datasets, compare model behavior, monitor quality, and generate reports. Evidently is useful for traditional ML systems and can also support AI quality workflows depending on configuration. It is especially relevant for teams that want visibility into model and data changes over time.

Key Features

- Data drift detection

- Model performance monitoring

- Data quality reports

- Test suites for ML systems

- Dashboard and report generation

- Production monitoring workflows

- Custom metric support

Pros

- Strong for traditional ML monitoring and evaluation

- Useful visual reports

- Good for data quality and drift detection

Cons

- LLM evaluation may require additional setup

- More focused on ML observability than prompt testing

- Requires proper baseline datasets

Platforms / Deployment

- Python / Web dashboards / Cloud environments

- Self-hosted / Cloud / Hybrid depending on setup

Security & Compliance

Security depends on deployment, data storage, authentication, infrastructure, and access controls. Compliance is Varies / N/A.

Integrations & Ecosystem

Evidently fits ML quality and monitoring workflows.

- Data pipelines

- ML monitoring

- Model performance tracking

- Drift detection

- Reports and dashboards

- CI/CD quality checks

Support & Community

Evidently has documentation and community adoption among MLOps teams, data scientists, and ML engineers focused on model monitoring and quality.

#6 — Weights & Biases

Short description :

Weights & Biases is an experiment tracking, model evaluation, and ML collaboration platform used by machine learning teams to track experiments, compare runs, log metrics, manage artifacts, and collaborate on model development. It is useful for both research and production-oriented AI teams. For evaluation workflows, it helps teams compare model performance, datasets, hyperparameters, outputs, and reports in a structured way. It is especially strong for teams working at scale across many experiments.

Key Features

- Experiment tracking

- Metric logging and visualization

- Dataset and artifact tracking

- Model comparison

- Reports and dashboards

- Collaboration tools

- Integration with ML frameworks

Pros

- Strong for experiment comparison

- Excellent collaboration and visualization

- Useful for research and production teams

Cons

- Not only focused on LLM evaluation

- Cost and data handling should be reviewed by teams

- Requires process discipline for clean experiment tracking

Platforms / Deployment

- Web / Python / ML frameworks

- Cloud / Self-hosted options may vary

Security & Compliance

Security features and compliance options vary by plan and deployment. Teams should validate access controls, data retention, workspace permissions, and compliance needs.

Integrations & Ecosystem

Weights & Biases fits ML research and engineering workflows.

- ML frameworks

- Experiment tracking

- Model training workflows

- Reports

- Dataset and artifact management

- Team collaboration

Support & Community

Weights & Biases has strong documentation, educational resources, and wide adoption among ML researchers and engineering teams.

#7 — OpenAI Evals

Short description :

OpenAI Evals is an evaluation framework used to test model behavior, compare outputs, and create repeatable evaluation tasks. It is especially useful for teams experimenting with LLM quality, prompt behavior, and model comparisons. The framework helps developers define custom evaluations and run them against models. It is most useful for technical teams that want structured evaluation workflows for language model behavior.

Key Features

- Custom evaluation definitions

- LLM behavior testing

- Model comparison workflows

- Dataset-based evaluations

- Repeatable evaluation tasks

- Prompt and output assessment

- Developer-oriented configuration

Pros

- Useful for structured LLM testing

- Good for custom evaluation tasks

- Helps compare model behavior over time

Cons

- Requires technical setup

- Not a complete enterprise evaluation platform by itself

- Best results require well-designed eval datasets

Platforms / Deployment

- Python / Developer environments

- Local / Cloud depending on model provider and setup

Security & Compliance

Compliance is Varies / N/A. Security depends on data used in evaluations, model provider configuration, logging, retention, and access controls.

Integrations & Ecosystem

OpenAI Evals fits developer-led LLM evaluation workflows.

- Prompt testing

- Model comparison

- Custom benchmark tasks

- LLM application testing

- Dataset-based evaluation

- Regression testing workflows

Support & Community

OpenAI Evals has developer community awareness and is useful for teams that want to define technical evaluation tasks for LLM behavior.

#8 — lm-evaluation-harness

Short description :

lm-evaluation-harness is a benchmarking framework widely used by researchers and engineers to evaluate language models across standardized tasks and datasets. It is especially useful for comparing base models, open models, and research models on common benchmarks. The framework is valuable for research teams, model builders, and organizations evaluating model capabilities before deployment. It focuses more on benchmark evaluation than product-specific application evaluation.

Key Features

- Standardized language model benchmarks

- Support for many evaluation tasks

- Model comparison workflows

- Research-oriented evaluation

- Open model evaluation support

- Reproducible benchmarking patterns

- Command-line evaluation workflows

Pros

- Strong for model benchmarking

- Useful for comparing open and research models

- Good reproducibility focus

Cons

- Less focused on application-level LLM quality

- Requires technical setup

- Benchmark scores may not reflect real product performance

Platforms / Deployment

- Python / Command-line environments / Research infrastructure

- Local / Cloud / Hybrid depending on setup

Security & Compliance

Compliance is Varies / N/A. Security depends on datasets, model access, compute environment, and result storage.

Integrations & Ecosystem

lm-evaluation-harness fits model research and benchmarking workflows.

- Open-source models

- Benchmark datasets

- Research pipelines

- GPU evaluation environments

- Model comparison workflows

- Reproducible experiments

Support & Community

The framework has strong research community usage and is valuable for teams comparing language model capabilities across standardized tasks.

#9 — HELM

Short description :

HELM is a holistic evaluation approach and benchmarking framework for language models that focuses on broad, transparent, and multi-metric evaluation. It is useful for researchers, policy teams, and organizations that want to understand model behavior across many dimensions rather than relying on one benchmark score. HELM emphasizes structured evaluation across accuracy, robustness, fairness, efficiency, and other dimensions. It is especially relevant for deeper model assessment and research analysis.

Key Features

- Multi-metric model evaluation

- Benchmarking across diverse scenarios

- Language model behavior analysis

- Robustness and fairness dimensions

- Efficiency and performance consideration

- Research-oriented reporting

- Transparent evaluation methodology

Pros

- Strong for holistic model assessment

- Useful for research and governance discussions

- Encourages multi-dimensional evaluation

Cons

- More research-oriented than product workflow-oriented

- May require technical expertise to apply

- Not a simple plug-and-play production monitoring tool

Platforms / Deployment

- Research environments / Python-based workflows

- Local / Cloud / Hybrid depending on setup

Security & Compliance

Compliance is Varies / N/A. Security depends on deployment environment, datasets, model endpoints, and data handling practices.

Integrations & Ecosystem

HELM fits research and responsible AI evaluation workflows.

- Language model benchmarks

- Research evaluation pipelines

- Multi-metric reporting

- Responsible AI analysis

- Model comparison studies

- Governance-oriented review

Support & Community

HELM is most relevant in research, policy, and responsible AI communities. It is useful for teams that want deeper model evaluation beyond narrow benchmark scores.

#10 — Giskard

Short description :

Giskard is an AI testing and evaluation platform focused on model quality, risk detection, and testing for machine learning and LLM systems. It helps teams identify issues such as hallucination, bias, security weaknesses, data quality problems, and model failures. Giskard is useful for AI teams that want structured testing and quality assurance for models before and after deployment. It is especially relevant for organizations that need more confidence in AI behavior and risk management.

Key Features

- AI model testing

- LLM and ML evaluation support

- Bias and risk detection

- Test suite generation

- Model quality checks

- Reporting workflows

- Integration with AI development pipelines

Pros

- Strong AI testing and risk focus

- Useful for both ML and LLM workflows

- Good for quality assurance before production release

Cons

- Setup depends on model and data environment

- Enterprise requirements should be validated by plan

- Automated risk checks still need human review

Platforms / Deployment

- Python / Web workflows / Cloud environments

- Cloud / Self-hosted options may vary

Security & Compliance

Security and compliance depend on deployment, data handling, access controls, retention, and workspace configuration. Compliance is Varies / N/A.

Integrations & Ecosystem

Giskard fits AI testing and risk management workflows.

- ML model testing

- LLM evaluation

- Risk checks

- CI/CD quality gates

- Model validation

- AI governance workflows

Support & Community

Giskard has documentation and community awareness among AI safety, quality, and testing teams. It is suitable for teams that want a more structured AI QA workflow.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |

|---|---|---|---|---|---|

| TruLens | RAG and LLM app evaluation | Python, notebooks, app environments | Local / Cloud / Hybrid | Groundedness and relevance feedback | N/A |

| Ragas | RAG evaluation | Python, notebooks, app environments | Local / Cloud / Hybrid | Faithfulness and context relevance checks | N/A |

| DeepEval | LLM regression testing | Python, CI/CD environments | Local / Cloud / Hybrid | Unit-test style LLM evaluation | N/A |

| MLflow | ML lifecycle evaluation tracking | Python, Web UI, cloud environments | Self-hosted / Cloud / Hybrid | Experiment and model tracking | N/A |

| Evidently AI | ML monitoring and drift evaluation | Python, dashboards, cloud environments | Self-hosted / Cloud / Hybrid | Data drift and model monitoring reports | N/A |

| Weights & Biases | Experiment tracking and model comparison | Web, Python, ML frameworks | Cloud / Self-hosted options may vary | Collaborative experiment tracking | N/A |

| OpenAI Evals | Custom LLM evaluation tasks | Python, developer environments | Local / Cloud | Structured LLM behavior testing | N/A |

| lm-evaluation-harness | Research model benchmarking | Python, command-line environments | Local / Cloud / Hybrid | Standardized language model benchmarks | N/A |

| HELM | Holistic language model evaluation | Research and Python workflows | Local / Cloud / Hybrid | Multi-metric responsible evaluation | N/A |

| Giskard | AI testing and risk detection | Python, Web workflows, cloud environments | Cloud / Self-hosted options may vary | AI risk and quality testing | N/A |

Evaluation & AI Evaluation and Benchmarking Frameworks

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |

|---|---|---|---|---|---|---|---|---|

| TruLens | 9 | 7 | 8 | 7 | 8 | 7 | 8 | 7.95 |

| Ragas | 9 | 7 | 8 | 7 | 8 | 7 | 9 | 8.10 |

| DeepEval | 9 | 8 | 8 | 7 | 8 | 7 | 8 | 8.05 |

| MLflow | 8 | 8 | 9 | 8 | 8 | 9 | 9 | 8.40 |

| Evidently AI | 8 | 8 | 8 | 8 | 8 | 8 | 8 | 8.00 |

| Weights & Biases | 9 | 8 | 9 | 8 | 9 | 9 | 8 | 8.60 |

| OpenAI Evals | 8 | 6 | 7 | 7 | 8 | 7 | 8 | 7.35 |

| lm-evaluation-harness | 9 | 5 | 8 | 7 | 8 | 7 | 9 | 7.75 |

| HELM | 9 | 5 | 7 | 7 | 8 | 7 | 8 | 7.45 |

| Giskard | 8 | 8 | 8 | 8 | 8 | 8 | 8 | 8.00 |

The scoring is comparative and should be used as a shortlist guide, not as a universal ranking. Weights & Biases and MLflow are strong for experiment tracking and model comparison, while Ragas, TruLens, and DeepEval are stronger for LLM and RAG application evaluation. lm-evaluation-harness and HELM are better for research-style benchmarking, while Giskard is useful for AI testing and risk-focused evaluation.

Which AI Evaluation & Benchmarking Framework Is Right for You?

Solo / Freelancer

Solo AI developers and freelancers should start with lightweight tools that solve the immediate evaluation problem. If building a RAG chatbot, Ragas or TruLens is a strong starting point. If writing repeatable LLM tests, DeepEval can be useful.

If the work is more traditional ML, MLflow or Evidently AI may be better. For model research and benchmarking, lm-evaluation-harness can be useful, but it requires more technical setup.

SMB

Small businesses building AI applications should prioritize simple, repeatable evaluation workflows. Ragas, DeepEval, TruLens, MLflow, and Evidently AI are practical choices depending on the use case.

SMBs should not rely only on manual testing. Even a small set of evaluation questions can catch prompt regressions, retrieval issues, and model quality drops before customers notice them.

Mid-Market

Mid-market teams often need evaluation pipelines, experiment tracking, model comparison, reporting, and quality gates. MLflow, Weights & Biases, DeepEval, Giskard, Evidently AI, Ragas, and TruLens can all be relevant.

For LLM applications, combine application-specific evaluation with experiment tracking. For example, teams may use Ragas or DeepEval for quality checks and MLflow or Weights & Biases for tracking results across versions.

Enterprise

Enterprises should focus on governance, repeatability, auditability, safety, risk management, and production monitoring. Giskard, Evidently AI, MLflow, Weights & Biases, HELM-style evaluation approaches, and LLM-specific tools such as Ragas or TruLens may all play a role.

Enterprise teams should build evaluation as a process, not just a tool choice. They need datasets, metrics, human review, approval workflows, monitoring, and release gates.

Budget vs Premium

Budget-focused teams can start with open-source frameworks and small custom evaluation datasets. Ragas, TruLens, DeepEval, MLflow, Evidently AI, OpenAI Evals, and lm-evaluation-harness can support strong workflows depending on team skill.

Premium platforms may be worth it when teams need collaboration, dashboards, access controls, managed storage, enterprise support, and governance features.

Feature Depth vs Ease of Use

For ease of use, MLflow, Weights & Biases, Evidently AI, DeepEval, and Giskard are more approachable depending on the user’s background.

For feature depth, lm-evaluation-harness, HELM, TruLens, Ragas, and custom evaluation stacks provide stronger technical control but may require more expertise.

Integrations & Scalability

MLflow and Weights & Biases scale well for experiment-heavy teams. Evidently AI scales well for monitoring and drift workflows. Ragas, TruLens, and DeepEval fit LLM and RAG quality pipelines.

Scalability depends on dataset size, evaluation frequency, model count, prompt versions, human review process, CI/CD integration, and reporting needs.

Security & Compliance Needs

Security-focused teams should evaluate where prompts, datasets, outputs, logs, metrics, and model responses are stored. Sensitive evaluation data should be protected with access controls, encryption, retention policies, and careful provider selection.

Compliance does not come from the framework alone. It depends on deployment, data governance, review process, audit trails, and organizational controls.

Frequently Asked Questions

1. What is AI evaluation?

AI evaluation is the process of testing an AI model or application to measure how well it performs. It checks quality, accuracy, safety, reliability, latency, cost, and suitability for real-world use.

2. What is AI benchmarking?

AI benchmarking compares models or systems using standard tests, datasets, or evaluation scenarios. It helps teams understand relative performance across models, prompts, architectures, or providers.

3. Why is LLM evaluation difficult?

LLM evaluation is difficult because answers can be open-ended, subjective, and context-dependent. A response may be fluent but wrong, safe but unhelpful, or correct but poorly formatted. That is why teams need multiple metrics and human review.

4. What is RAG evaluation?

RAG evaluation tests retrieval-augmented generation systems. It checks whether retrieved context is relevant, whether the answer is grounded in that context, and whether the final response correctly answers the user’s question.

5. Which framework is best for RAG evaluation?

Ragas and TruLens are strong options for RAG evaluation. DeepEval can also support RAG testing through custom and built-in metrics. The best choice depends on the workflow, dataset, and integration needs.

6. Which framework is best for experiment tracking?

MLflow and Weights & Biases are strong choices for experiment tracking. MLflow is widely used for ML lifecycle workflows, while Weights & Biases is strong for collaborative experiment comparison and visualization.

7. Which framework is best for research benchmarking?

lm-evaluation-harness and HELM are strong for research-style language model benchmarking. They are useful when teams want standardized comparisons across models and tasks.

8. Can automated evaluation replace human review?

No. Automated evaluation is useful for scale, regression testing, and repeatability, but human review is still important for domain correctness, tone, user value, safety, and business-specific judgment.

9. What is a good evaluation dataset?

A good evaluation dataset includes realistic user questions, edge cases, failure scenarios, domain-specific tasks, expected behavior, and examples from production when available. It should be updated as the product changes.

10. What is the biggest mistake teams make in AI evaluation?

The biggest mistake is using only generic benchmarks and assuming they represent real product quality. Teams should evaluate using real user workflows, domain data, and business-specific success criteria.

11. How often should AI evaluations run?

Evaluations should run whenever models, prompts, retrieval logic, datasets, or system instructions change. Mature teams also run scheduled evaluations to detect quality drift and production regressions.

12. How should I choose an AI evaluation framework?

Start with the system type. Choose Ragas or TruLens for RAG, DeepEval for LLM testing, MLflow or Weights & Biases for experiment tracking, Evidently AI for drift and monitoring, lm-evaluation-harness or HELM for research benchmarking, and Giskard for AI risk testing.

Conclusion

AI evaluation and benchmarking frameworks are essential for building reliable AI systems because they help teams measure quality before and after deployment. The best framework depends on the type of AI system being tested. Ragas and TruLens are strong for RAG and LLM application evaluation, DeepEval is practical for automated LLM testing, MLflow and Weights & Biases are excellent for experiment tracking and model comparison, Evidently AI is strong for drift and monitoring, and Giskard supports risk-focused AI testing. For research benchmarking, lm-evaluation-harness and HELM are valuable options.