Introduction

AI inference serving platforms help teams deploy trained machine learning, deep learning, and generative AI models into production so applications can send requests and receive predictions, classifications, recommendations, embeddings, responses, or generated outputs. In simple terms, model serving is the layer that makes an AI model usable by real users, business systems, APIs, mobile apps, web apps, data pipelines, and enterprise workflows.

These platforms matter because building a model is only one part of the AI lifecycle. Once a model is trained, teams need reliable serving, autoscaling, monitoring, versioning, rollback, efficient GPU utilization, latency control, security, and integration with cloud or Kubernetes environments. Without a strong inference layer, even a high-quality model can fail in production due to slow responses, high cost, poor observability, or unstable scaling.

Common use cases include:

- Serving real-time prediction APIs

- Deploying large language models for chat and assistants

- Running image, video, and speech models

- Serving recommendation models

- Generating embeddings for search and retrieval

- Running batch and online inference workloads

- Deploying models on Kubernetes or cloud infrastructure

- Managing model versions, traffic splitting, and rollback

Buyers should evaluate:

- Supported model frameworks

- Real-time and batch inference support

- GPU and accelerator support

- Autoscaling and performance tuning

- Kubernetes and cloud-native compatibility

- Model versioning and rollout control

- Observability, logging, and metrics

- Security, authentication, and access control

- Cost efficiency and hardware utilization

- Fit for LLM, traditional ML, and deep learning workloads

Best for: ML engineers, MLOps teams, platform engineers, data scientists, AI product teams, DevOps teams, cloud architects, enterprises, startups, and organizations deploying machine learning or generative AI into production.

Not ideal for: Teams that only run small experimental notebooks, one-off offline predictions, or manual model testing without production traffic, scaling, monitoring, or reliability needs.

Key Trends in AI Inference Serving Platforms

- LLM-optimized serving: Modern platforms increasingly support large language model workloads, token streaming, batching, quantization, and GPU-aware scaling.

- Cost-efficient GPU utilization: Teams are focusing on higher throughput, better batching, and reduced idle GPU time.

- Kubernetes-native deployment: Many organizations prefer Kubernetes-based model serving for portability, autoscaling, and infrastructure standardization.

- Multi-model serving: Platforms are improving support for serving many models from shared infrastructure.

- Serverless inference: Teams want scale-to-zero or demand-based scaling to reduce cost for variable workloads.

- Model monitoring and observability: Latency, throughput, errors, drift, model quality, and infrastructure metrics are now critical.

- Edge and hybrid inference: Some workloads need inference closer to users, devices, factories, branches, or private environments.

- Open-source serving stacks: Engineering teams often prefer open-source platforms for flexibility and avoiding vendor lock-in.

- Security and governance: Enterprises need authentication, authorization, auditability, network isolation, and policy controls.

- Vector and retrieval workflows: Model serving is increasingly connected to embeddings, vector databases, retrieval-augmented generation, and AI application pipelines.

How We Selected These Tools

The platforms below were selected based on recognition, technical capability, production readiness, ecosystem adoption, model framework support, scalability, and fit across different AI deployment needs.

The evaluation considered:

- Market adoption and engineering mindshare

- Support for real-time inference and batch serving

- Compatibility with common ML and deep learning frameworks

- Kubernetes and cloud-native support

- GPU, accelerator, and high-performance serving features

- Model versioning and rollout support

- Observability and operational maturity

- Security and enterprise controls

- Fit for LLMs, computer vision, NLP, tabular ML, and recommendation workloads

- Suitability for solo teams, SMBs, mid-market companies, and enterprises

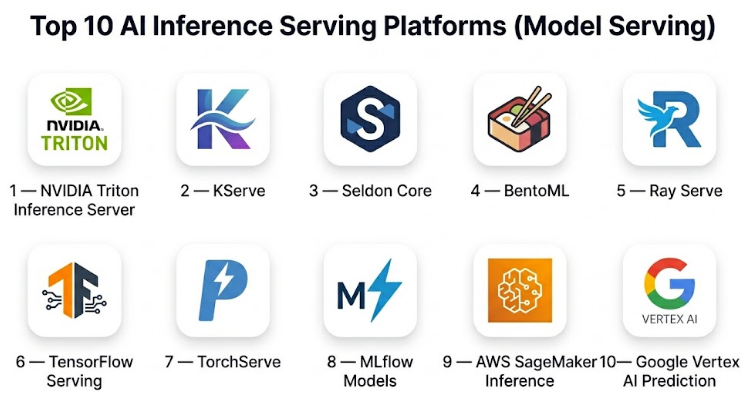

Top 10 AI Inference Serving Platforms

#1 — NVIDIA Triton Inference Server

Short description:

NVIDIA Triton Inference Server is a high-performance inference serving platform designed for deploying AI models across GPUs and CPUs. It supports multiple frameworks and is widely used for deep learning, computer vision, speech, recommendation, and large-scale AI workloads. Triton is especially strong when teams need optimized GPU serving, dynamic batching, model ensembles, and production-grade performance. It is a strong fit for platform teams running inference at scale.

Key Features

- Multi-framework model serving

- GPU and CPU inference support

- Dynamic batching

- Model ensembles

- Concurrent model execution

- HTTP and gRPC endpoints

- Metrics for production observability
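
Triton's HTTP endpoint follows the KServe v2 inference protocol, so a request is a JSON document describing named input tensors. The sketch below only builds such a request body and path; the model name `resnet50` and the tensor name `input__0` are hypothetical placeholders, and the real names, shapes, and datatypes come from the deployed model's configuration.

```python
import json
from math import prod


def build_v2_infer_request(input_name, shape, datatype, flat_data):
    """Build a JSON-serializable body in the KServe v2 inference format."""
    if prod(shape) != len(flat_data):
        raise ValueError("data length does not match tensor shape")
    return {
        "inputs": [
            {
                "name": input_name,
                "shape": list(shape),
                "datatype": datatype,
                "data": list(flat_data),  # flattened, row-major tensor contents
            }
        ]
    }


def infer_path(model_name, version=None):
    """Return the v2 protocol path for an inference call."""
    if version is None:
        return f"/v2/models/{model_name}/infer"
    return f"/v2/models/{model_name}/versions/{version}/infer"


# Hypothetical model and tensor names, for illustration only.
body = build_v2_infer_request("input__0", (1, 4), "FP32", [0.1, 0.2, 0.3, 0.4])
payload = json.dumps(body)
path = infer_path("resnet50")
```

In a real deployment the payload would be sent as a POST to that path on the server's HTTP port (8000 by default), typically through an HTTP client or the official client libraries rather than hand-built JSON.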

Pros

- Excellent for GPU-accelerated inference

- Strong performance optimization features

- Suitable for high-throughput production workloads

Cons

- Requires infrastructure and performance tuning expertise

- May feel complex for small teams

- Delivers the most value only when hardware utilization is a priority

Platforms / Deployment

- Linux / Containers / Kubernetes

- Self-hosted / Cloud / Hybrid

Security & Compliance

Not publicly stated as a standalone compliance-certified platform. Security depends on deployment architecture, network controls, Kubernetes policies, authentication layers, container security, and cloud environment configuration.

Integrations & Ecosystem

Triton fits well into advanced AI infrastructure and MLOps workflows.

- Kubernetes

- GPU infrastructure

- Prometheus-style metrics workflows

- Multiple model frameworks

- Containerized deployments

- Model repository workflows

Support & Community

NVIDIA Triton has strong documentation, enterprise ecosystem support, and a large technical community. It is widely used by teams focused on high-performance AI serving.

#2 — KServe

Short description:

KServe is a Kubernetes-native model serving platform designed for scalable and standardized AI inference deployment. It supports multiple model runtimes and helps teams deploy, autoscale, version, and manage inference services on Kubernetes. KServe is useful for organizations building internal MLOps platforms and teams that want cloud-native control over model serving. It is especially relevant when Kubernetes is already the standard infrastructure layer.

Key Features

- Kubernetes-native model serving

- Serverless-style autoscaling

- Support for multiple model runtimes

- Canary rollout and revision support

- Inference service abstraction

- Transformer and explainer patterns

- Integration with cloud-native observability tools

Pros

- Strong fit for Kubernetes-based MLOps

- Good abstraction for standardized model serving

- Supports multiple frameworks and runtimes

Cons

- Requires Kubernetes expertise

- Operational setup can be complex

- Best suited for platform teams, not casual users

Platforms / Deployment

- Kubernetes

- Self-hosted / Cloud / Hybrid

Security & Compliance

Security depends on Kubernetes configuration, service mesh, identity controls, network policies, secrets management, and cloud environment. Compliance: Varies / N/A.

Integrations & Ecosystem

KServe works well inside cloud-native AI platforms.

- Kubernetes

- Kubeflow ecosystem

- Knative-style serving patterns

- Istio or service mesh workflows depending on setup

- Model runtimes

- Observability and autoscaling systems

Support & Community

KServe has open-source community support and documentation. It is best suited for teams comfortable operating Kubernetes and MLOps infrastructure.

#3 — Seldon Core

Short description:

Seldon Core is an open-source model serving platform designed for deploying, scaling, and managing machine learning models on Kubernetes. It supports production inference workflows, model graphs, canary deployments, explainability patterns, and monitoring integration. Seldon Core is especially useful for enterprises and platform teams that want flexible model serving with Kubernetes-native control. It is often used in regulated or mature MLOps environments where deployment governance matters.

Key Features

- Kubernetes-native model deployment

- Model graphs and inference pipelines

- Canary and traffic management support

- Multi-framework serving

- REST and gRPC endpoints

- Monitoring integration

- Explainability and advanced deployment patterns

Pros

- Strong for complex production serving workflows

- Good Kubernetes and enterprise fit

- Supports advanced inference graphs

Cons

- Requires platform engineering maturity

- Setup and operation can be complex

- May be too heavy for small teams

Platforms / Deployment

- Kubernetes

- Self-hosted / Cloud / Hybrid

Security & Compliance

Security depends on Kubernetes configuration, access controls, network policies, secrets management, and enterprise deployment practices. Compliance: Varies / N/A.

Integrations & Ecosystem

Seldon Core integrates well with MLOps and platform engineering stacks.

- Kubernetes

- Model monitoring workflows

- Service mesh and traffic routing patterns

- REST and gRPC APIs

- CI/CD pipelines

- Explainability and governance workflows

Support & Community

Seldon Core has documentation, open-source community support, and enterprise-oriented adoption. It is suited for teams with production MLOps needs.

#4 — BentoML

Short description:

BentoML is a model serving and AI application packaging platform that helps teams package models, define APIs, serve inference endpoints, and deploy models across different environments. It is useful for ML engineers and AI product teams that want a developer-friendly workflow for turning models into production services. BentoML supports traditional ML, deep learning, and modern AI application workflows. Its strength is simplifying packaging and deployment without forcing one specific infrastructure model.

Key Features

- Model packaging

- API service definition

- Local and cloud deployment workflows

- Batch and online inference patterns

- Multi-framework support

- Container-friendly deployment

- Developer-focused serving workflow

Pros

- Developer-friendly and flexible

- Good for packaging models into services

- Useful for teams moving from notebooks to APIs

Cons

- Infrastructure scaling depends on deployment setup

- Enterprise governance may need additional tooling

- Some advanced operations require platform integration

Platforms / Deployment

- Web APIs / Containers / Kubernetes / Cloud environments

- Self-hosted / Cloud / Hybrid

Security & Compliance

Security depends on deployment environment, API gateway, identity layer, network controls, container security, and cloud policies. Compliance: Varies / N/A.

Integrations & Ecosystem

BentoML fits flexible AI application deployment workflows.

- Python ML frameworks

- Containers

- Kubernetes

- CI/CD pipelines

- Model registries depending on setup

- API-based AI applications

Support & Community

BentoML has documentation, community support, and growing adoption among ML engineers building production AI services.

#5 — Ray Serve

Short description:

Ray Serve is a scalable model serving library built on the Ray distributed computing framework. It is designed for Python-based AI applications that need scalable serving, composable deployments, batching, and distributed execution. Ray Serve is especially useful for teams building complex inference pipelines, LLM applications, multi-model workflows, or workloads that combine serving with distributed processing. It is a strong fit for engineering teams comfortable with Python and distributed systems.

Key Features

- Scalable Python-native serving

- Model deployment composition

- Dynamic batching

- Multi-model and pipeline support

- Distributed execution with Ray

- HTTP ingress support

- LLM and AI application serving patterns

Pros

- Strong for Python and distributed AI workloads

- Good for complex inference pipelines

- Flexible for custom AI services

Cons

- Requires Ray ecosystem knowledge

- Operational complexity grows with scale

- May be more developer-heavy than managed platforms

Platforms / Deployment

- Linux / Containers / Kubernetes / Cloud environments

- Self-hosted / Cloud / Hybrid

Security & Compliance

Security depends on Ray cluster setup, API access controls, network boundaries, authentication layers, Kubernetes policies, and cloud configuration. Compliance: Varies / N/A.

Integrations & Ecosystem

Ray Serve fits distributed AI and Python-native workflows.

- Ray clusters

- Kubernetes

- Python ML frameworks

- LLM serving workflows

- Distributed batch and online inference

- Custom AI application stacks

Support & Community

Ray Serve benefits from the broader Ray community, documentation, and production adoption among distributed AI teams.

#6 — TensorFlow Serving

Short description:

TensorFlow Serving is a production serving system designed for TensorFlow models. It provides efficient model loading, versioning, inference APIs, and deployment patterns for TensorFlow-based machine learning systems. It is especially useful for teams with established TensorFlow pipelines and models that need stable production inference. While newer serving stacks support broader frameworks, TensorFlow Serving remains valuable for TensorFlow-heavy organizations.

Key Features

- TensorFlow model serving

- Model versioning

- REST and gRPC APIs

- Efficient model loading

- Production inference support

- Batch inference patterns depending on setup

- Container deployment support
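
TensorFlow Serving's REST API exposes prediction at a versioned URL and accepts a JSON body with an `instances` list. The sketch below builds such a request with the standard library without sending it; the host, the model name `my_model`, the version number, and the input values are assumptions for illustration, and port 8501 is the conventional REST port rather than a guarantee for any given deployment.

```python
import json
import urllib.request


def predict_url(host, model_name, version=None, port=8501):
    """Build the REST predict URL, optionally pinned to one model version."""
    base = f"http://{host}:{port}/v1/models/{model_name}"
    if version is not None:
        base += f"/versions/{version}"
    return base + ":predict"


def build_predict_request(url, instances):
    """Wrap row-format inputs in the JSON body the predict API expects."""
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical model name, version, and input row.
req = build_predict_request(
    predict_url("localhost", "my_model", version=3),
    [[1.0, 2.0, 5.0]],
)
# Sending is left to the caller, e.g. urllib.request.urlopen(req)
# against a running server.
```

Omitting `version` targets whichever version the server currently treats as the default, which is what makes version pinning useful during rollouts.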

Pros

- Strong fit for TensorFlow models

- Stable and production-oriented

- Good versioning and serving architecture

Cons

- Best suited for TensorFlow ecosystem

- Less flexible for multi-framework environments

- May require additional tooling for full MLOps

Platforms / Deployment

- Linux / Containers / Kubernetes

- Self-hosted / Cloud / Hybrid

Security & Compliance

Not publicly stated as a standalone compliance-certified system. Security depends on deployment environment, API access control, container security, and infrastructure policies.

Integrations & Ecosystem

TensorFlow Serving fits TensorFlow production workflows.

- TensorFlow models

- Kubernetes

- Containers

- REST and gRPC APIs

- CI/CD deployment

- Model versioning workflows

Support & Community

TensorFlow Serving has strong documentation and community support from the TensorFlow ecosystem. It is best for teams already committed to TensorFlow-based ML.

#7 — TorchServe

Short description:

TorchServe is a model serving framework designed for PyTorch models. It helps teams package, serve, scale, and monitor PyTorch inference services. TorchServe is useful for teams building computer vision, NLP, deep learning, and research-to-production workflows using PyTorch. It gives PyTorch users a more structured way to expose models as production endpoints.

Key Features

- PyTorch model serving

- Model archiving and packaging

- REST APIs for inference

- Metrics and logging support

- Multi-model serving

- Custom handlers

- Container-based deployment support

Pros

- Strong fit for PyTorch teams

- Supports custom preprocessing and postprocessing

- Useful for research-to-production workflows

Cons

- Best suited for PyTorch workloads

- Requires operational setup for scaling

- Broader ecosystem may be less active than some newer serving approaches

Platforms / Deployment

- Linux / Containers / Kubernetes

- Self-hosted / Cloud / Hybrid

Security & Compliance

Not publicly stated as a standalone compliance-certified system. Security depends on deployment, API access control, container security, authentication, and infrastructure policies.

Integrations & Ecosystem

TorchServe fits PyTorch production environments.

- PyTorch models

- Custom handlers

- Containers

- Kubernetes

- Metrics and logging workflows

- REST-based inference APIs

Support & Community

TorchServe has documentation and community support from PyTorch users. It is most relevant for teams standardizing around PyTorch model deployment.

#8 — MLflow Models

Short description:

MLflow Models is part of the broader MLflow ecosystem and helps package, register, and deploy machine learning models in a standardized format. It supports multiple model flavors and can be used to serve models through APIs or deploy them into different environments. MLflow is especially useful for teams that already use experiment tracking, model registry, and lifecycle management. Its value comes from connecting model management with deployment workflows.

Key Features

- Standardized model packaging

- Multiple framework flavors

- Model registry integration

- Local model serving

- Deployment integrations depending on environment

- Experiment tracking ecosystem

- Version and stage management

Pros

- Strong model lifecycle management

- Useful across many ML frameworks

- Good fit for teams already using MLflow

Cons

- Serving layer may need additional infrastructure for scale

- Not always as optimized as specialized inference servers

- Operational maturity depends on deployment setup

Platforms / Deployment

- Python / Containers / Cloud environments / Kubernetes depending on setup

- Self-hosted / Cloud / Hybrid

Security & Compliance

Security depends on MLflow deployment, registry access, identity controls, artifact storage, API access, and cloud infrastructure. Compliance: Varies / N/A.

Integrations & Ecosystem

MLflow fits end-to-end ML lifecycle workflows.

- Experiment tracking

- Model registry

- Multiple ML frameworks

- Artifact storage

- CI/CD workflows

- Cloud deployment patterns

Support & Community

MLflow has a large community and broad adoption across ML teams. Support options depend on the environment or vendor distribution used.

#9 — AWS SageMaker Inference

Short description:

AWS SageMaker Inference is a managed model deployment and inference service for teams building machine learning workloads on AWS. It supports real-time inference, batch transform, asynchronous inference, serverless inference, and managed endpoints. SageMaker Inference is useful for teams that want managed infrastructure, AWS ecosystem integration, scaling, monitoring, and model deployment without fully operating their own serving stack. It is especially relevant for AWS-first organizations.

Key Features

- Managed real-time inference endpoints

- Batch transform

- Serverless inference options

- Asynchronous inference

- Autoscaling support

- Model monitoring options

- AWS ecosystem integration

Pros

- Strong fit for AWS-based teams

- Reduces infrastructure management burden

- Supports multiple inference patterns

Cons

- AWS ecosystem dependency

- Cost management requires careful monitoring

- Advanced customization may require AWS expertise

Platforms / Deployment

- AWS Cloud

- Cloud / Managed

Security & Compliance

Security and compliance depend on AWS account configuration, IAM, VPC design, encryption settings, logging, monitoring, and service configuration. Compliance support varies by workload and AWS environment.

Integrations & Ecosystem

SageMaker Inference fits AWS-centered ML workflows.

- AWS storage services

- IAM and VPC controls

- Cloud monitoring

- Model registry workflows

- CI/CD pipelines

- Batch and real-time inference patterns

Support & Community

AWS provides documentation, support plans, and a large cloud ecosystem. SageMaker has broad adoption among AWS machine learning teams.

#10 — Google Vertex AI Prediction

Short description:

Google Vertex AI Prediction is a managed inference service for deploying machine learning models on Google Cloud. It supports online prediction, batch prediction, custom containers, model endpoints, autoscaling, and integration with the broader Vertex AI platform. It is useful for teams that want managed model serving with Google Cloud infrastructure, data tools, and AI lifecycle services. It is especially relevant for Google Cloud-first organizations.

Key Features

- Managed online prediction

- Batch prediction

- Custom model containers

- Autoscaling endpoints

- Model registry integration

- Monitoring and logging workflows

- Google Cloud ecosystem integration

Pros

- Strong fit for Google Cloud environments

- Managed serving reduces operational load

- Good integration with Vertex AI lifecycle tools

Cons

- Google Cloud ecosystem dependency

- Cost and scaling need careful planning

- Advanced setup requires cloud and MLOps expertise

Platforms / Deployment

- Google Cloud

- Cloud / Managed

Security & Compliance

Security and compliance depend on Google Cloud IAM, network controls, encryption configuration, logging, monitoring, and project governance. Compliance support varies by workload and environment.

Integrations & Ecosystem

Vertex AI Prediction fits Google Cloud AI workflows.

- Vertex AI model registry

- Google Cloud storage and data tools

- IAM and networking

- Custom containers

- Cloud monitoring and logging

- MLOps pipelines

Support & Community

Google Cloud provides documentation, support options, and cloud ecosystem resources. Vertex AI is relevant for teams building managed AI workflows on Google Cloud.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| NVIDIA Triton Inference Server | High-performance GPU inference | Linux, Containers, Kubernetes | Self-hosted / Cloud / Hybrid | Optimized multi-framework GPU serving | N/A |
| KServe | Kubernetes-native MLOps platforms | Kubernetes | Self-hosted / Cloud / Hybrid | Standardized inference services on Kubernetes | N/A |
| Seldon Core | Enterprise Kubernetes model serving | Kubernetes | Self-hosted / Cloud / Hybrid | Advanced model graphs and rollout workflows | N/A |
| BentoML | Developer-friendly model APIs | Containers, Kubernetes, Cloud environments | Self-hosted / Cloud / Hybrid | Model packaging and service deployment | N/A |
| Ray Serve | Distributed Python AI applications | Containers, Kubernetes, Cloud environments | Self-hosted / Cloud / Hybrid | Scalable Python-native inference pipelines | N/A |
| TensorFlow Serving | TensorFlow production models | Linux, Containers, Kubernetes | Self-hosted / Cloud / Hybrid | Stable TensorFlow model serving | N/A |
| TorchServe | PyTorch model serving | Linux, Containers, Kubernetes | Self-hosted / Cloud / Hybrid | PyTorch-focused deployment workflow | N/A |
| MLflow Models | Model lifecycle and packaging | Python, Containers, Cloud environments | Self-hosted / Cloud / Hybrid | Standardized model packaging and registry fit | N/A |
| AWS SageMaker Inference | AWS-managed inference | AWS Cloud | Managed Cloud | Managed real-time, batch, async, and serverless inference | N/A |
| Google Vertex AI Prediction | Google Cloud-managed inference | Google Cloud | Managed Cloud | Managed prediction endpoints inside Vertex AI | N/A |

Evaluation & Scoring of AI Inference Serving Platforms

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| NVIDIA Triton Inference Server | 10 | 6 | 8 | 8 | 10 | 8 | 9 | 8.55 |
| KServe | 9 | 6 | 9 | 8 | 8 | 7 | 8 | 8.00 |
| Seldon Core | 9 | 6 | 9 | 8 | 8 | 8 | 8 | 8.10 |
| BentoML | 8 | 8 | 8 | 7 | 8 | 8 | 8 | 7.90 |
| Ray Serve | 9 | 7 | 8 | 7 | 9 | 8 | 8 | 8.10 |
| TensorFlow Serving | 8 | 7 | 7 | 7 | 8 | 8 | 8 | 7.60 |
| TorchServe | 7 | 7 | 7 | 7 | 7 | 7 | 8 | 7.15 |
| MLflow Models | 8 | 8 | 9 | 7 | 7 | 8 | 8 | 7.95 |
| AWS SageMaker Inference | 9 | 8 | 10 | 9 | 9 | 9 | 7 | 8.70 |
| Google Vertex AI Prediction | 9 | 8 | 10 | 9 | 9 | 9 | 7 | 8.70 |

The scoring is comparative and should be used as a shortlist guide, not as a universal ranking. Managed cloud platforms score well for ease, ecosystem, security controls, and support, but they may increase cloud lock-in and cost sensitivity. Open-source platforms such as Triton, KServe, Seldon Core, BentoML, and Ray Serve offer more flexibility and control, but they require stronger platform engineering capability.
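
For readers who want to re-weight the categories for their own priorities, the weighted total is simply each category score multiplied by its percentage weight, summed, and divided by 100. A minimal sketch using the weights from the table header and two rows from the table; the dictionary keys are illustrative names, not part of any tool's API.

```python
# Category weights in percent, taken from the scoring table header.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores):
    """Return the 0-10 weighted total for one tool's category scores."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted categories")
    # Integer arithmetic avoids floating-point drift before the final division.
    return sum(scores[name] * WEIGHTS[name] for name in WEIGHTS) / 100


tensorflow_serving = {"core": 8, "ease": 7, "integrations": 7, "security": 7,
                      "performance": 8, "support": 8, "value": 8}
mlflow_models = {"core": 8, "ease": 8, "integrations": 9, "security": 7,
                 "performance": 7, "support": 8, "value": 8}

print(weighted_total(tensorflow_serving))  # 7.6
print(weighted_total(mlflow_models))       # 7.95
```

Changing the values in `WEIGHTS` (keeping the sum at 100) is a quick way to see how the shortlist shifts when, for example, ease of use matters more than raw performance.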

Which AI Inference Serving Platform Is Right for You?

Solo / Freelancer

Solo ML engineers and freelancers should avoid overengineering early deployments. BentoML, MLflow Models, Ray Serve, or a managed cloud inference endpoint can be practical starting points. If the project is small, a simple containerized API may be enough before moving to a larger serving platform.

For GPU-heavy work, NVIDIA Triton can be valuable, but it requires technical setup. For client projects, choose tools that are easy to explain, deploy, monitor, and hand over.

SMB

Small businesses should prioritize simplicity, cost control, and reliable deployment. Managed platforms such as AWS SageMaker Inference or Google Vertex AI Prediction can reduce operational burden if the company is already using that cloud.

If the SMB has a strong engineering team and wants cloud portability, BentoML or KServe may be a good fit. For high-throughput GPU inference, Triton can be evaluated carefully.

Mid-Market

Mid-market companies often need repeatable MLOps workflows, model registry integration, CI/CD, monitoring, security controls, and autoscaling. KServe, Seldon Core, Ray Serve, BentoML, SageMaker Inference, and Vertex AI Prediction are strong candidates depending on the infrastructure strategy.

If Kubernetes is already standard, KServe or Seldon Core may fit well. If the company prefers managed cloud services, SageMaker or Vertex AI can accelerate adoption.

Enterprise

Enterprises should evaluate inference platforms based on governance, security, compliance, cost control, observability, model lifecycle integration, and operational ownership. Managed platforms can simplify compliance alignment within cloud ecosystems, while Kubernetes-native platforms offer portability and customization.

For large-scale GPU inference, NVIDIA Triton is a strong option. For Kubernetes-based platform engineering, KServe and Seldon Core are relevant. For cloud-native enterprise ML, SageMaker Inference and Vertex AI Prediction are common choices.

Budget vs Premium

Budget-focused teams can start with open-source tools such as BentoML, Ray Serve, KServe, Seldon Core, TensorFlow Serving, TorchServe, MLflow Models, or Triton. However, open-source does not mean free operationally. Teams still need infrastructure, monitoring, security, and engineering time.

Managed platforms may cost more directly, but they can reduce platform maintenance work. The right decision depends on team skill, workload volume, GPU usage, and reliability requirements.

Feature Depth vs Ease of Use

For ease of use, managed cloud inference and BentoML are often easier to adopt. MLflow Models can also be convenient for teams already using MLflow.

For feature depth, Triton, KServe, Seldon Core, and Ray Serve provide stronger control for advanced production environments. They are better suited for teams with platform engineering skills.

Integrations & Scalability

SageMaker Inference works best inside AWS environments. Vertex AI Prediction works best inside Google Cloud environments. KServe and Seldon Core work well inside Kubernetes-based MLOps platforms. Ray Serve fits distributed Python AI applications. Triton fits performance-heavy GPU serving.

Scalability depends on autoscaling, batching, model loading, GPU utilization, request latency, traffic patterns, and observability. The best serving platform is the one that matches the workload shape.
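
One way to reason about workload shape is a back-of-the-envelope replica estimate based on Little's law (average requests in flight equals arrival rate times average latency). The sketch below is a rough planning aid under stated assumptions, not a substitute for load testing: the function name, the per-replica concurrency figure, and the 75% target utilization are all hypothetical inputs you would replace with measured values.

```python
import math


def replicas_needed(requests_per_second, avg_latency_seconds,
                    concurrency_per_replica, target_utilization=0.75):
    """Estimate replicas from steady-state load using Little's law."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    if concurrency_per_replica < 1:
        raise ValueError("concurrency_per_replica must be at least 1")
    # Little's law: average in-flight requests = arrival rate * latency.
    in_flight = requests_per_second * avg_latency_seconds
    # Leave headroom so a replica is not planned at 100% of its capacity.
    usable_slots = concurrency_per_replica * target_utilization
    return max(1, math.ceil(in_flight / usable_slots))


# 200 req/s at 250 ms average latency, 8 concurrent requests per replica.
print(replicas_needed(200, 0.25, 8, 0.75))  # 9
```

The estimate covers steady-state averages only; bursty traffic, cold model loads, and batching effects can all push the real requirement higher or lower.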

Security & Compliance Needs

Security-focused teams should evaluate authentication, authorization, network isolation, encryption, logging, audit trails, model artifact access, secrets management, and deployment approvals.

For regulated environments, inference serving must be evaluated as part of the full production stack. The serving platform alone does not guarantee compliance. Compliance depends on cloud configuration, infrastructure controls, access policies, monitoring, and operational process.

Frequently Asked Questions

1. What is AI inference serving?

AI inference serving is the process of deploying a trained AI or machine learning model so applications can send inputs and receive predictions or generated outputs. It turns a model into a production service that can be used by real users and systems.
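
To make the idea concrete, here is a deliberately tiny serving layer built only on the Python standard library. It is a sketch, not a production server: the "model" is a stand-in linear scorer with made-up weights, and the `/predict` route, the `features` field, and port 8080 are assumptions chosen for the example.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def predict(features):
    """Stand-in for a trained model: a fixed linear scorer (made-up weights)."""
    weights = [0.5, -0.25, 1.0]
    return sum(w * x for w, x in zip(weights, features))


def handle_predict(raw_body):
    """Turn a JSON request body into (status_code, JSON response bytes)."""
    try:
        features = json.loads(raw_body)["features"]
        if len(features) != 3:
            raise ValueError("expected exactly 3 features")
        status, result = 200, {"prediction": predict(features)}
    except (KeyError, TypeError, ValueError) as exc:
        # Malformed JSON, a missing field, or wrongly typed input.
        status, result = 400, {"error": str(exc)}
    return status, json.dumps(result).encode("utf-8")


class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/predict":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, body = handle_predict(self.rfile.read(length))
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def run_server(port=8080):
    """Start serving on localhost; blocks until interrupted."""
    HTTPServer(("127.0.0.1", port), PredictHandler).serve_forever()
```

Calling `run_server()` and posting `{"features": [2, 4, 1]}` to `/predict` returns a JSON prediction. Everything a serving platform adds (autoscaling, batching, versioning, metrics, authentication) is what this sketch leaves out.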

2. How is inference different from training?

Training teaches a model using data. Inference uses the trained model to make predictions or generate responses. Training is usually compute-heavy and offline, while inference must often be fast, reliable, scalable, and available in production.

3. Which platform is best for GPU inference?

NVIDIA Triton Inference Server is a strong option for GPU inference because it focuses on high-performance serving, dynamic batching, and multi-framework support. Managed cloud platforms can also be effective when the service offers GPU-backed instance types that suit the model.

4. Which platform is best for Kubernetes model serving?

KServe and Seldon Core are strong Kubernetes-native options. They are suitable for teams building internal MLOps platforms with autoscaling, rollout control, service mesh patterns, and cloud-native observability.

5. Which platform is best for LLM serving?

The best LLM serving platform depends on model size, latency, GPU availability, batching needs, streaming requirements, and deployment environment. Triton, Ray Serve, BentoML, managed cloud services, and specialized LLM runtimes can all be relevant depending on architecture.

6. Is managed inference better than self-hosted inference?

Managed inference is easier to operate and integrates well with cloud services. Self-hosted inference gives more control and portability but requires platform engineering, monitoring, scaling, and security management. The best choice depends on team maturity and workload needs.

7. What is model versioning in inference serving?

Model versioning allows teams to deploy multiple versions of a model, test new versions, roll back if needed, and control which version receives traffic. It is important for safe production updates.
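
A percentage-based traffic split between versions can be sketched as a deterministic hash of a request key, so the same user or session consistently lands on the same version. This is an illustrative routing policy, not the mechanism any specific platform uses; the version names, the split percentages, and the `pick_version` helper are hypothetical.

```python
import hashlib


def pick_version(request_key, traffic_split):
    """Deterministically map a request key to a model version.

    traffic_split maps version name -> integer percent, summing to 100.
    """
    if sum(traffic_split.values()) != 100:
        raise ValueError("traffic percentages must sum to 100")
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket, 0..99
    cumulative = 0
    for version, percent in traffic_split.items():
        cumulative += percent
        if bucket < cumulative:
            return version
    raise AssertionError("unreachable when percentages sum to 100")


canary = {"v1": 90, "v2": 10}    # roughly 10% of keys go to the new version
rollback = {"v1": 100, "v2": 0}  # rollback: all traffic returns to v1

print(pick_version("user-42", canary))
print(pick_version("user-42", rollback))  # v1
```

Rolling forward or back then becomes a configuration change to the split rather than a redeployment, which is the property that makes versioned serving safe to operate.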

8. Why is latency important in model serving?

Latency measures how quickly the model responds. Low latency is important for chatbots, fraud detection, recommendations, search, image processing, and real-time user experiences. High latency can make AI applications feel slow or unusable.
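
Serving latency is usually tracked as percentiles rather than an average, because a few slow requests can hide behind a healthy-looking mean. A small sketch using the nearest-rank percentile definition; the sample latencies are invented for illustration.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least
    p percent of all samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


# Invented request latencies in milliseconds, with one slow outlier.
latencies_ms = [12, 15, 11, 14, 13, 250, 16, 12, 13, 14]

print(sum(latencies_ms) / len(latencies_ms))  # 37.0 (mean)
print(percentile(latencies_ms, 50))           # 13
print(percentile(latencies_ms, 99))           # 250
```

Here the mean of 37 ms describes no real request: most finish in about 13 ms while the tail takes 250 ms, which is why latency targets are typically stated at p95 or p99.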

9. What is dynamic batching?

Dynamic batching groups multiple inference requests together so the model can process them more efficiently, especially on GPUs. It can improve throughput and reduce cost, but it must be tuned carefully to avoid increasing latency too much.
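
The tuning trade-off comes down to two knobs: a maximum batch size and a maximum time a request may wait in the queue. The sketch below simulates that policy offline over a list of arrival times; it illustrates the idea only and is not how any particular server implements batching. The function and parameter names are hypothetical.

```python
def form_batches(arrival_times_ms, max_batch_size, max_queue_delay_ms):
    """Group sorted arrival times into batches.

    A batch is dispatched when it is full, or when the next request
    arrives after the oldest queued request has waited longer than
    max_queue_delay_ms.
    """
    batches = []
    current = []
    for t in arrival_times_ms:
        if current and t - current[0] > max_queue_delay_ms:
            batches.append(current)  # deadline passed before this arrival
            current = []
        current.append(t)
        if len(current) == max_batch_size:
            batches.append(current)  # batch is full, dispatch immediately
            current = []
    if current:
        batches.append(current)      # flush whatever is still queued
    return batches


arrivals = [0, 1, 2, 3, 10, 11, 30]
print(form_batches(arrivals, max_batch_size=4, max_queue_delay_ms=5))
# [[0, 1, 2, 3], [10, 11], [30]]
```

Raising the delay produces larger batches and better accelerator throughput, but every request in a batch pays up to that delay in added latency, so the two settings are best tuned against a latency target.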

10. What is the biggest mistake teams make with inference serving?

The biggest mistake is deploying a model without proper monitoring, rollback, load testing, and cost controls. A model may work in testing but fail under real traffic if the serving layer is not production-ready.

11. Can one platform serve multiple models?

Yes. Many inference serving platforms support multi-model serving. However, teams must manage memory, model loading time, resource isolation, versioning, and scaling carefully when many models share infrastructure.
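
One common way to handle the memory constraint is to keep only a bounded number of models loaded and evict the least recently used one when a new model is requested. A minimal sketch of that idea; the `ModelCache` class, the loader callable, and the model names are hypothetical, and a real server would also account for model size and in-flight requests before evicting.

```python
from collections import OrderedDict


class ModelCache:
    """Keep at most `capacity` models loaded, evicting the least recently used."""

    def __init__(self, capacity, loader):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.loader = loader           # callable: model name -> loaded model
        self._models = OrderedDict()   # ordered from least to most recent
        self.evicted = []              # eviction log, for observability

    def get(self, name):
        if name in self._models:
            self._models.move_to_end(name)  # mark as most recently used
            return self._models[name]
        model = self.loader(name)           # cold load: the slow path
        self._models[name] = model
        if len(self._models) > self.capacity:
            old_name, _ = self._models.popitem(last=False)
            self.evicted.append(old_name)
        return model

    def loaded(self):
        return list(self._models)


# Hypothetical loader that fakes loading by returning a label.
cache = ModelCache(capacity=2, loader=lambda name: f"<model {name}>")
for name in ["fraud", "ranker", "fraud", "embedder"]:
    cache.get(name)

print(cache.loaded())   # ['fraud', 'embedder']
print(cache.evicted)    # ['ranker']
```

The cost of this approach is the cold-load latency paid by the first request after an eviction, which is why model loading time appears alongside memory in the list of things to manage.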

12. How should I choose an inference serving platform?

Start with your workload. Choose Triton for high-performance GPU serving, KServe or Seldon Core for Kubernetes-native platforms, BentoML for developer-friendly APIs, Ray Serve for distributed Python inference, MLflow for model lifecycle integration, and managed cloud services if you want reduced infrastructure operations.

Conclusion

AI inference serving platforms are critical for turning trained models into reliable production services. The best platform depends on model type, traffic pattern, infrastructure strategy, team skills, latency goals, security requirements, and cost constraints. NVIDIA Triton is strong for high-performance GPU inference, KServe and Seldon Core fit Kubernetes-native MLOps platforms, BentoML is developer-friendly for packaging and serving models, Ray Serve is powerful for distributed Python AI applications, and MLflow Models works well when model lifecycle management is already in place. Managed services such as AWS SageMaker Inference and Google Vertex AI Prediction are valuable for cloud-first teams that want less operational burden.