Introduction

RAG (Retrieval-Augmented Generation) tooling refers to platforms and frameworks that combine information retrieval systems (like vector databases) with AI generation models (like LLMs). In simple terms, instead of relying only on a model’s internal knowledge, RAG tools allow AI to fetch real-time or private data and use it to generate more accurate and contextual responses.
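
To make the retrieve-then-generate idea concrete, here is a minimal sketch of the flow. It assumes a tiny in-memory corpus and uses simple word overlap in place of real embeddings. The `DOCS` dictionary, the function names, and the stubbed `generate` call are all hypothetical and stand in for a vector store and an LLM API.

```python
import re
from collections import Counter

# Hypothetical in-memory corpus standing in for private documents.
DOCS = {
    "refunds": "Refunds are issued within 14 days of a return request.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Hardware is covered by a two year limited warranty.",
}

def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for an embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, k: int = 1) -> list[str]:
    """Return ids of the k documents sharing the most words with the query."""
    q = tokenize(query)
    scores = {
        doc_id: sum((q & tokenize(text)).values())
        for doc_id, text in DOCS.items()
    }
    # Sort by descending score, then by id so ties are deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))[:k]

def build_prompt(query: str, doc_ids: list[str]) -> str:
    """Place the retrieved passages in front of the user's question."""
    context = "\n".join(f"- {DOCS[d]}" for d in doc_ids)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

def generate(prompt: str) -> str:
    """Placeholder for an LLM call; a real system would send the prompt to a model."""
    return f"[model response to {len(prompt)} prompt characters]"

question = "How long does shipping take?"
top = retrieve(question)
prompt = build_prompt(question, top)
answer = generate(prompt)
```

In a production setup, `retrieve` would query a vector database and `generate` would call a model provider, but the shape of the pipeline stays the same.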

This approach has become critical in modern AI systems where accuracy, relevance, and trust are non-negotiable. As organizations deploy AI assistants, enterprise search systems, and knowledge bots, RAG helps ground responses in real data rather than hallucinated guesses, although the quality of the result still depends on what is retrieved.

Real-world use cases:

- Enterprise knowledge chatbots for internal documentation

- Customer support automation using company data

- Legal and compliance document search assistants

- AI-powered research tools and summarization systems

- Personalized recommendation systems

What buyers should evaluate:

- Retrieval quality and ranking mechanisms

- Vector database compatibility

- Latency and response time

- Scalability for large datasets

- Integration with LLM providers

- Data ingestion pipelines and connectors

- Security and data isolation

- Observability and debugging tools

- Cost efficiency and pricing model
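
Retrieval quality, the first item above, can be measured rather than guessed. The sketch below shows two widely used metrics, recall@k and mean reciprocal rank (MRR), computed over a small set of labelled queries. The document ids and relevance labels are invented for illustration; in practice they would come from your own test set.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / len(relevant)

def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none is retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0

# Hypothetical labelled queries: (ranked results from the tool, known-relevant ids).
labelled = [
    (["d3", "d1", "d7"], {"d1", "d2"}),
    (["d5", "d9", "d4"], {"d5"}),
]

mean_recall = sum(recall_at_k(r, rel, k=3) for r, rel in labelled) / len(labelled)
mrr = sum(reciprocal_rank(r, rel) for r, rel in labelled) / len(labelled)
```

Running the same labelled queries against each candidate tool gives a like-for-like comparison before committing to one.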

Best for: AI engineers, data teams, SaaS companies, enterprises building internal knowledge systems, and startups building AI-powered apps.

Not ideal for: Teams without a well-organized body of source content, simple chatbot use cases that don’t require external knowledge, or low-scale applications where basic prompt engineering is sufficient.

Key Trends in RAG (Retrieval-Augmented Generation) Tooling

- Rise of hybrid search (vector + keyword) for better retrieval accuracy

- Adoption of multi-vector and multi-modal retrieval systems

- Integration with real-time data sources and streaming pipelines

- Growing focus on evaluation and observability in RAG pipelines

- Emergence of end-to-end RAG platforms (no-code + low-code)

- Increased demand for data privacy and secure RAG architectures

- Support for multi-LLM orchestration within RAG pipelines

- Optimization for low-latency retrieval at scale

- Evolution of agent-based RAG systems

- Pricing shifting toward usage-based models (tokens + queries)
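
The hybrid search trend at the top of this list combines a keyword ranking with a vector ranking. One common way to merge the two is reciprocal rank fusion (RRF), sketched below. This illustrates the general technique only; the tools covered in this article may fuse results differently, and the document ids are made up.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked lists: each document scores sum(1 / (k + rank)) over the lists it appears in."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical results from a keyword engine and a vector index for the same query.
keyword_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
```

Documents that rank well in both lists rise to the top, which is why hybrid retrieval tends to be more robust than either method alone.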

How We Selected These Tools (Methodology)

- Selected tools with strong developer adoption and ecosystem presence

- Evaluated end-to-end RAG capabilities (retrieval, generation, orchestration)

- Assessed performance and scalability benchmarks

- Considered security practices and enterprise readiness

- Reviewed integration flexibility with databases and APIs

- Ensured mix of open-source and enterprise-grade platforms

- Prioritized tools supporting modern LLM workflows

- Focused on platforms actively evolving in the AI ecosystem

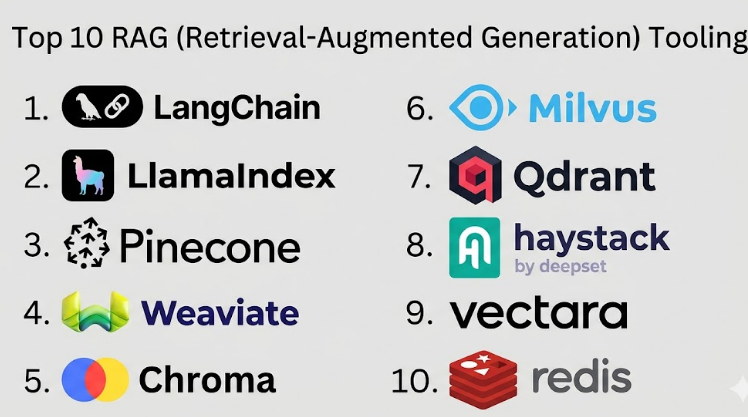

Top 10 RAG (Retrieval-Augmented Generation) Tools

#1 — LangChain

Short description: A popular open-source framework for building RAG applications, widely used by developers to orchestrate LLM pipelines.

Key Features

- Modular RAG pipeline building

- Document loaders and retrievers

- Memory management

- Multi-LLM support

- Agent workflows

- Prompt chaining

Pros

- Highly flexible and extensible

- Strong community adoption

Cons

- Steep learning curve

- Debugging can be complex

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Extensive ecosystem support for AI tools and databases.

- Vector databases (Pinecone, Weaviate)

- APIs for LLM providers

- Data connectors

Support & Community

Very strong open-source community and documentation.

#2 — LlamaIndex

Short description: A data framework designed specifically for connecting LLMs with structured and unstructured data sources.

Key Features

- Data connectors and ingestion pipelines

- Indexing and retrieval optimization

- Query engines

- Multi-modal support

- Evaluation tools

Pros

- Designed for RAG use cases

- Easy data integration

Cons

- Requires technical expertise

- Limited UI

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Strong integration with data ecosystems.

- Databases and APIs

- File systems

- Cloud storage

Support & Community

Growing developer community.

#3 — Pinecone

Short description: A managed vector database optimized for similarity search and RAG applications.

Key Features

- High-performance vector search

- Managed infrastructure

- Scalability

- Metadata filtering

- Low-latency queries
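
As an illustration of what metadata filtering means in a vector search, the sketch below restricts candidates by metadata and then ranks the survivors by dot-product similarity. It is a generic, in-memory example and not Pinecone's API; the `Record` class, the `filtered_search` function, and the sample data are hypothetical. Managed stores generally integrate filtering into the index itself rather than scanning records like this.

```python
from dataclasses import dataclass, field

@dataclass
class Record:
    doc_id: str
    vector: list[float]
    metadata: dict[str, str] = field(default_factory=dict)

def filtered_search(
    query: list[float],
    records: list[Record],
    where: dict[str, str],
    k: int = 2,
) -> list[str]:
    """Keep records whose metadata matches every key in `where`, then rank by dot product."""
    candidates = [
        r for r in records
        if all(r.metadata.get(key) == value for key, value in where.items())
    ]
    candidates.sort(
        key=lambda r: sum(q * v for q, v in zip(query, r.vector)),
        reverse=True,
    )
    return [r.doc_id for r in candidates[:k]]

# Hypothetical records with 2-dimensional vectors.
records = [
    Record("a", [0.9, 0.1], {"team": "legal", "lang": "en"}),
    Record("b", [0.8, 0.2], {"team": "support", "lang": "en"}),
    Record("c", [0.2, 0.9], {"team": "legal", "lang": "en"}),
    Record("d", [0.95, 0.05], {"team": "legal", "lang": "de"}),
]

hits = filtered_search([1.0, 0.0], records, where={"team": "legal", "lang": "en"})
```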

Pros

- Fully managed

- High performance

Cons

- Cost can scale with usage

- Limited control over infrastructure

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Works with major AI frameworks.

- LangChain

- LlamaIndex

- APIs

Support & Community

Enterprise support available.

#4 — Weaviate

Short description: An open-source vector database with built-in AI modules for semantic search and RAG.

Key Features

- Hybrid search

- GraphQL API

- Modular AI integrations

- Schema-based data modeling

- Scalability

Pros

- Open-source flexibility

- Built-in AI features

Cons

- Requires infrastructure management

- Setup complexity

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- APIs

- ML frameworks

- Cloud platforms

Support & Community

Active open-source community.

#5 — Chroma

Short description: Lightweight vector database designed for developers building local or small-scale RAG applications.

Key Features

- Simple vector storage

- Embedding support

- Fast local deployment

- Easy API usage

- Minimal setup

Pros

- Beginner-friendly

- Lightweight

Cons

- Not ideal for large-scale systems

- Limited enterprise features

Platforms / Deployment

Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Python-based integrations

- LLM APIs

Support & Community

Growing community.

#6 — Milvus

Short description: Open-source vector database designed for large-scale AI and RAG applications.

Key Features

- High-performance vector indexing

- Distributed architecture

- Multi-cloud support

- GPU acceleration

- Scalability

Pros

- Highly scalable

- Enterprise-ready

Cons

- Complex setup

- Requires infrastructure expertise

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Data pipelines

- APIs

- ML frameworks

Support & Community

Strong community and enterprise support.

#7 — Qdrant

Short description: Vector database with focus on performance, filtering, and real-time RAG use cases.

Key Features

- Payload filtering

- Real-time updates

- Distributed deployment

- High performance

- API-first design

Pros

- Fast and efficient

- Flexible filtering

Cons

- Smaller ecosystem

- Learning curve

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- APIs

- LLM frameworks

Support & Community

Growing ecosystem.

#8 — Haystack

Short description: Open-source framework for building search systems and RAG pipelines.

Key Features

- Pipeline orchestration

- Document retrieval

- Multi-model support

- Evaluation tools

- API-based workflows

Pros

- Mature framework

- Flexible pipelines

Cons

- Requires configuration

- Less intuitive UI

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Search engines

- ML tools

- APIs

Support & Community

Strong open-source backing.

#9 — Vectara

Short description: End-to-end RAG platform offering retrieval and generation capabilities out-of-the-box.

Key Features

- Managed RAG platform

- Semantic search

- Built-in ranking

- APIs for integration

- Real-time indexing

Pros

- Easy to deploy

- End-to-end solution

Cons

- Less customization

- Pricing varies

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- APIs

- Data ingestion pipelines

Support & Community

Enterprise support available.

#10 — Redis (Vector Search)

Short description: Extension of Redis enabling vector search capabilities for RAG systems.

Key Features

- In-memory vector search

- Fast performance

- Hybrid queries

- Scalability

- Integration with existing Redis setups

Pros

- Very fast

- Widely adopted

Cons

- Limited advanced RAG features

- Requires tuning

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- APIs

- Databases

- AI frameworks

Support & Community

Very strong global community.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LangChain | RAG orchestration | Web | Cloud/Self-hosted | Pipeline flexibility | N/A |
| LlamaIndex | Data integration | Web | Cloud/Self-hosted | Indexing system | N/A |
| Pinecone | Vector DB | Web | Cloud | Managed vector search | N/A |
| Weaviate | Open-source DB | Web | Cloud/Self-hosted | Hybrid search | N/A |
| Chroma | Lightweight apps | Local | Self-hosted | Simplicity | N/A |
| Milvus | Large-scale AI | Linux | Cloud/Self-hosted | Distributed search | N/A |
| Qdrant | Real-time apps | Web | Cloud/Self-hosted | Filtering | N/A |
| Haystack | Search pipelines | Web | Cloud/Self-hosted | Pipeline design | N/A |
| Vectara | Managed RAG | Web | Cloud | End-to-end platform | N/A |
| Redis | Fast retrieval | Web | Cloud/Self-hosted | In-memory speed | N/A |

Evaluation & Scoring of RAG (Retrieval-Augmented Generation) Tooling

| Tool Name | Core | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 7 | 9 | 7 | 8 | 9 | 8 | 8.3 |
| LlamaIndex | 9 | 8 | 8 | 7 | 8 | 8 | 8 | 8.2 |
| Pinecone | 9 | 8 | 9 | 8 | 9 | 8 | 7 | 8.4 |
| Weaviate | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.8 |
| Chroma | 7 | 9 | 7 | 6 | 7 | 7 | 9 | 7.8 |
| Milvus | 9 | 6 | 8 | 8 | 9 | 7 | 7 | 8.0 |
| Qdrant | 8 | 7 | 7 | 7 | 8 | 7 | 8 | 7.7 |
| Haystack | 8 | 7 | 8 | 7 | 8 | 8 | 8 | 7.9 |
| Vectara | 8 | 9 | 7 | 8 | 8 | 8 | 7 | 8.0 |
| Redis | 8 | 8 | 9 | 7 | 9 | 9 | 8 | 8.3 |

Interpretation:

- Scores reflect comparative strength across multiple dimensions

- Enterprise tools score higher in performance and security

- Open-source tools score higher in flexibility and value

- Ease of use may vary based on technical expertise

- Always evaluate based on your use case
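
If you want to re-score the tools against your own priorities, a weighted total is straightforward to compute. The sketch below uses hypothetical weights: this article does not state the weights behind its totals, so the numbers here are assumptions and the results will not necessarily match the table. The per-dimension scores are the LangChain row from the table.

```python
# Hypothetical weights; the article does not publish the ones behind its totals.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}

def weighted_total(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Sum of score * weight over all dimensions, rounded to two decimals."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return round(sum(scores[name] * w for name, w in weights.items()), 2)

# Per-dimension scores taken from the LangChain row of the table.
langchain = {
    "core": 9, "ease": 7, "integrations": 9, "security": 7,
    "performance": 8, "support": 9, "value": 8,
}

total = weighted_total(langchain)
```

Shifting weight toward security or value, for example, changes the ranking, which is the point of the last bullet above.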

Which RAG (Retrieval-Augmented Generation) Tooling Is Right for You?

Solo / Freelancer

- Best: Chroma, LangChain

- Focus on simplicity and local development

SMB

- Best: LlamaIndex, Pinecone

- Balance of performance and usability

Mid-Market

- Best: Weaviate, Qdrant

- Scalable and flexible

Enterprise

- Best: Milvus, Vectara, Pinecone

- High performance and reliability

Budget vs Premium

- Budget: Chroma, Qdrant

- Premium: Pinecone, Vectara

Feature Depth vs Ease of Use

- Deep features: LangChain, Haystack

- Easy to use: Vectara, Chroma

Integrations & Scalability

- Strong integrations: LangChain, Redis

- Scalable: Milvus, Pinecone

Security & Compliance Needs

- High security: Pinecone, Milvus

- Moderate: Weaviate, Qdrant

Frequently Asked Questions (FAQs)

What is RAG in AI?

RAG combines retrieval systems with AI models to improve response accuracy using external data.

Why is RAG important?

It reduces hallucinations and improves trust in AI outputs.

Do I need a vector database?

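In most cases, yes. Most RAG systems rely on a vector database for similarity search, although keyword search can also serve as the retriever, and hybrid setups combine the two.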
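
To show what similarity search involves at its simplest, here is a brute-force sketch using cosine similarity over a handful of vectors. The three-dimensional embeddings and document names are invented for illustration; real embedding models produce hundreds or thousands of dimensions, and vector databases use approximate indexes to avoid scanning every vector.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the ids of the k vectors most similar to the query."""
    ranked = sorted(
        index,
        key=lambda doc_id: cosine_similarity(query, index[doc_id]),
        reverse=True,
    )
    return ranked[:k]

# Hypothetical 3-dimensional embeddings.
index = {
    "pricing": [0.9, 0.1, 0.0],
    "security": [0.0, 0.8, 0.6],
    "onboarding": [0.5, 0.5, 0.1],
}

nearest = top_k([1.0, 0.2, 0.0], index)
```

A loop like this is fine for a few thousand vectors; a dedicated vector database becomes worthwhile once the corpus or the query volume grows.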

Is RAG expensive?

Costs vary depending on data size, queries, and infrastructure.

Can RAG work in real-time?

Yes, many tools support low-latency retrieval.

Is RAG secure?

Security depends on deployment and tool choice.

How long does it take to build a RAG system?

From a few days to several weeks depending on complexity.

Can I use multiple models?

Yes, many tools support multi-LLM setups.

What are common mistakes?

Poor data quality, lack of evaluation, and ignoring latency.

Can I switch tools later?

Yes, but migration depends on architecture.

Conclusion

RAG (Retrieval-Augmented Generation) tooling has quickly become one of the most important building blocks in modern AI systems. Instead of relying solely on pre-trained models, organizations are now building AI applications that are closely connected to their own data, which improves accuracy, relevance, and trust. The right choice depends on your use case. Developer-first frameworks like LangChain and LlamaIndex offer flexibility, while managed solutions like Pinecone and Vectara simplify deployment. Open-source databases like Milvus and Weaviate provide scalability, while lightweight tools like Chroma are well suited to rapid prototyping.