Introduction

AI Safety & Evaluation Tools are platforms designed to test, monitor, and improve the behavior of AI systems—especially large language models (LLMs) and generative AI applications. In simple terms, they help teams ensure that AI outputs are accurate, safe, unbiased, and aligned with business goals before and after deployment.

As AI adoption accelerates, especially in enterprise workflows, customer-facing apps, and automation pipelines, the risks have also increased—hallucinations, harmful outputs, data leakage, and compliance issues. This is why AI safety is no longer optional; it’s becoming a core engineering and governance function.

Real-world use cases:

- Validating chatbot responses before production deployment

- Detecting hallucinations in AI-generated content

- Ensuring compliance with privacy and regulatory standards

- Monitoring model drift and performance over time

- Red-teaming AI systems for vulnerabilities

What buyers should evaluate:

- Coverage of evaluation types (toxicity, bias, accuracy, robustness)

- Automation vs manual testing capabilities

- Integration with ML pipelines and APIs

- Real-time monitoring vs batch evaluation

- Security and compliance support

- Customizability of evaluation metrics

- Scalability for large AI workloads

- Reporting and observability dashboards

Best for: AI engineers, ML teams, DevOps/SRE teams, product managers, compliance teams, and enterprises deploying AI at scale.

Not ideal for: Small teams not using AI in production, or companies using only basic automation tools without AI-driven workflows.

Key Trends in AI Safety & Evaluation Tools

- Shift from offline testing to continuous AI monitoring in production

- Rise of automated red-teaming frameworks for LLM security

- Increasing demand for compliance-ready AI governance tools

- Integration with MLOps and DevOps pipelines

- Focus on hallucination detection and mitigation

- Emergence of AI observability platforms

- Support for multi-model evaluation (OpenAI, open-source, custom models)

- Growing adoption of human-in-the-loop evaluation workflows

- Expansion of AI risk scoring and reporting dashboards

- Pricing evolving toward usage-based and API-based models

How We Selected These Tools (Methodology)

- Evaluated tools with strong market adoption and developer mindshare

- Assessed feature completeness across safety, evaluation, and monitoring

- Reviewed performance and reliability indicators

- Considered security and compliance capabilities

- Checked integration ecosystem (APIs, ML tools, CI/CD)

- Ensured coverage across enterprise, SMB, and developer-first tools

- Balanced between commercial and open-source platforms

- Focused on tools actively evolving with modern AI trends

- Prioritized tools with real-world deployment use cases

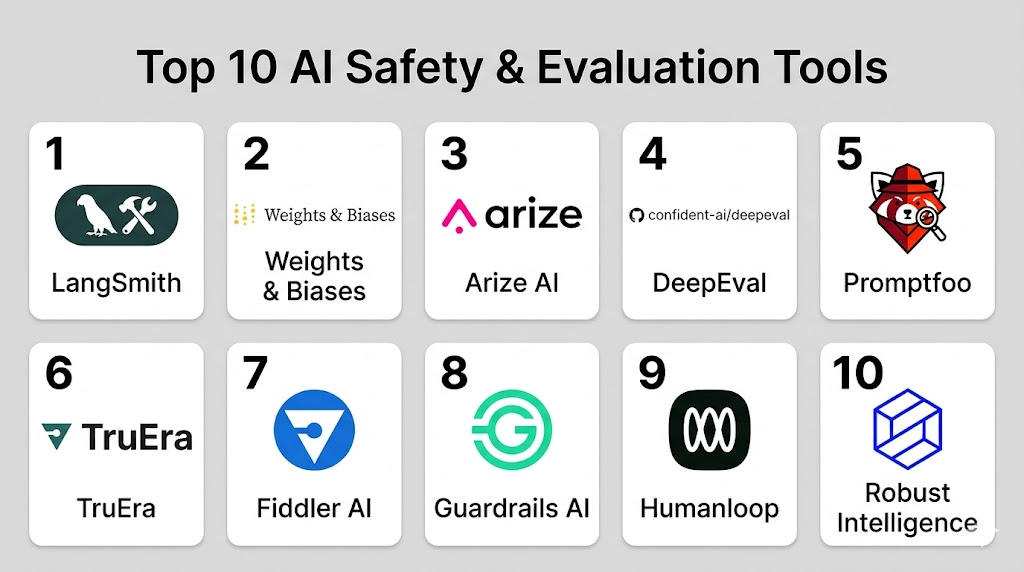

Top 10 AI Safety & Evaluation Tools

#1 — LangSmith

Short description: A developer-focused platform for debugging, testing, and evaluating LLM applications. Ideal for teams building production AI apps.

Key Features

- LLM tracing and debugging

- Dataset-based evaluation workflows

- Prompt versioning and testing

- Real-time observability

- Experiment tracking

- Performance metrics

Pros

- Strong developer tooling

- Seamless integration with LangChain ecosystem

Cons

- Limited governance features

- Requires technical setup

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Integrates deeply with LangChain and API-based AI tools.

- OpenAI-compatible APIs

- Custom LLM integrations

- SDK-based extensibility

Support & Community

Strong developer community and documentation.

#2 — Weights & Biases (W&B)

Short description: A popular ML experimentation and evaluation platform extended for LLM evaluation and monitoring.

Key Features

- Experiment tracking

- Model evaluation dashboards

- Dataset versioning

- Collaboration tools

- Visualization tools

Pros

- Mature platform

- Strong visualization capabilities

Cons

- Can be complex for beginners

- Pricing varies

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Works across ML frameworks.

- PyTorch, TensorFlow

- APIs and SDKs

- CI/CD integrations

Support & Community

Strong community and enterprise support.

#3 — Arize AI

Short description: AI observability platform focused on monitoring, debugging, and evaluating ML and LLM systems.

Key Features

- Model monitoring

- Drift detection

- LLM evaluation tools

- Explainability features

- Alerting system

Pros

- Strong observability

- Enterprise-ready

Cons

- Requires setup effort

- Pricing varies

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports ML pipelines and APIs.

- Data platforms

- ML frameworks

- API integrations

Support & Community

Enterprise-level support.

#4 — DeepEval

Short description: Open-source evaluation framework for testing LLM applications with customizable metrics.

Key Features

- Automated evaluation pipelines

- Custom metrics support

- Test case generation

- LLM benchmarking

- Integration with CI pipelines

Pros

- Open-source flexibility

- Lightweight

Cons

- Limited enterprise features

- Requires coding knowledge

Platforms / Deployment

Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Developer-focused integrations.

- Python-based workflows

- API extensibility

Support & Community

Growing open-source community.

#5 — Promptfoo

Short description: A testing and evaluation tool for prompt engineering and LLM outputs.

Key Features

- Prompt testing framework

- Regression testing

- Multi-model comparison

- CLI-based workflows

- YAML configurations

Pros

- Simple to use

- Great for prompt testing

Cons

- Limited monitoring features

- Developer-centric

Platforms / Deployment

CLI / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- OpenAI and LLM APIs

- CI/CD pipelines

Support & Community

Active GitHub community.

#6 — TruEra

Short description: Enterprise-grade AI quality and explainability platform.

Key Features

- Model explainability

- Bias detection

- Performance monitoring

- Governance tools

- LLM evaluation support

Pros

- Strong compliance focus

- Enterprise-ready

Cons

- Complex setup

- Premium pricing

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- ML pipelines

- Enterprise systems

- APIs

Support & Community

Enterprise support.

#7 — Fiddler AI

Short description: AI monitoring and explainability platform with strong governance capabilities.

Key Features

- Model monitoring

- Bias detection

- Explainability

- LLM observability

- Alerts and dashboards

Pros

- Strong governance tools

- Good UI

Cons

- Pricing may be high

- Requires integration effort

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- ML frameworks

- APIs

- Data pipelines

Support & Community

Enterprise support available.

#8 — Guardrails AI

Short description: Framework for enforcing constraints and validation rules on LLM outputs.

Key Features

- Output validation

- Schema enforcement

- Guardrail policies

- LLM safety checks

- Custom validators

Pros

- Strong safety enforcement

- Flexible

Cons

- Developer-focused

- Limited UI

Platforms / Deployment

Self-hosted / API

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Python SDK

- LLM APIs

Support & Community

Active developer community.

#9 — Humanloop

Short description: Platform for human-in-the-loop evaluation and feedback for AI systems.

Key Features

- Feedback loops

- Evaluation datasets

- Annotation tools

- Prompt iteration

- Experiment tracking

Pros

- Great for human evaluation

- Easy UI

Cons

- Limited automation

- Pricing varies

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- API integrations

- Data workflows

Support & Community

Growing adoption.

#10 — Robust Intelligence

Short description: AI risk and security platform focused on testing and protecting ML systems.

Key Features

- AI vulnerability testing

- Red teaming

- Security validation

- Risk scoring

- Compliance tools

Pros

- Strong security focus

- Enterprise-grade

Cons

- Premium pricing

- Complex deployment

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Enterprise AI systems

- APIs

Support & Community

Enterprise support.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |

|---|---|---|---|---|---|

| LangSmith | LLM debugging | Web | Cloud | LLM tracing | N/A |

| Weights & Biases | ML evaluation | Web | Cloud/Self-hosted | Experiment tracking | N/A |

| Arize AI | Observability | Web | Cloud | Drift detection | N/A |

| DeepEval | Open-source testing | Linux/macOS | Self-hosted | Custom metrics | N/A |

| Promptfoo | Prompt testing | CLI | Self-hosted | Regression testing | N/A |

| TruEra | Enterprise AI quality | Web | Cloud/Hybrid | Explainability | N/A |

| Fiddler AI | Governance | Web | Cloud | Bias detection | N/A |

| Guardrails AI | Output validation | API | Self-hosted | Schema enforcement | N/A |

| Humanloop | Human feedback | Web | Cloud | Human-in-loop | N/A |

| Robust Intelligence | AI security | Web | Cloud/Hybrid | Red teaming | N/A |

Evaluation & Scoring of AI Safety & Evaluation Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |

|---|---|---|---|---|---|---|---|---|

| LangSmith | 9 | 8 | 9 | 7 | 9 | 8 | 8 | 8.4 |

| W&B | 9 | 7 | 9 | 7 | 9 | 9 | 7 | 8.3 |

| Arize AI | 9 | 7 | 8 | 8 | 9 | 8 | 7 | 8.2 |

| DeepEval | 7 | 6 | 7 | 6 | 7 | 6 | 9 | 7.0 |

| Promptfoo | 7 | 8 | 7 | 6 | 7 | 7 | 9 | 7.5 |

| TruEra | 9 | 6 | 8 | 9 | 8 | 8 | 6 | 8.0 |

| Fiddler AI | 8 | 7 | 8 | 8 | 8 | 8 | 7 | 7.9 |

| Guardrails AI | 8 | 7 | 7 | 7 | 7 | 7 | 8 | 7.6 |

| Humanloop | 7 | 8 | 7 | 6 | 7 | 7 | 7 | 7.3 |

| Robust Intelligence | 9 | 6 | 7 | 9 | 8 | 8 | 6 | 7.9 |

How to interpret scores:

- Scores are relative comparisons, not absolute benchmarks

- Higher scores indicate stronger overall capability

- Enterprise tools often score higher in security but lower in ease

- Open-source tools may score lower in support but higher in value

- Choose based on your specific needs, not just total score

Which AI Safety & Evaluation Tools for You?

Solo / Freelancer

- Best: Promptfoo, Guardrails AI

- Focus on simplicity and low cost

SMB

- Best: LangSmith, Humanloop

- Balance between usability and features

Mid-Market

- Best: Arize AI, Fiddler AI

- Focus on monitoring and governance

Enterprise

- Best: TruEra, Robust Intelligence

- Strong compliance and security required

Budget vs Premium

- Budget: DeepEval, Promptfoo

- Premium: TruEra, Robust Intelligence

Feature Depth vs Ease of Use

- Deep features: Arize AI, TruEra

- Easy to use: Humanloop, Promptfoo

Integrations & Scalability

- Strong integrations: W&B, LangSmith

- Scalable: Arize AI, Fiddler AI

Security & Compliance Needs

- High security: Robust Intelligence, TruEra

- Moderate: LangSmith, Arize AI

Frequently Asked Questions (FAQs)

What are AI Safety tools used for?

They help test, monitor, and control AI outputs to ensure safety, accuracy, and compliance.

Are these tools only for large companies?

No, many tools support small teams and developers as well.

Do I need coding skills?

Some tools require coding, while others offer UI-based workflows.

How much do these tools cost?

Pricing varies widely from free open-source to enterprise-level subscriptions.

Can these tools detect hallucinations?

Yes, many tools include hallucination detection features.

Are they secure?

Security depends on the tool; always verify compliance requirements.

Can I integrate them with my pipeline?

Most tools support API and CI/CD integrations.

What is AI observability?

It refers to monitoring AI system performance and behavior in production.

How long does implementation take?

From a few hours (simple tools) to weeks (enterprise platforms).

Can I switch tools later?

Yes, but migration effort depends on integration complexity.

Conclusion

AI Safety & Evaluation Tools are becoming a critical part of modern AI development and deployment. As organizations increasingly rely on AI for decision-making, automation, and customer interactions, the need to ensure safe, reliable, and compliant outputs is growing rapidly. These tools help teams detect risks early, improve model performance, and maintain trust in AI systems. However, there is no single “best” tool for everyone. The right choice depends on your use case, team size, technical expertise, and compliance requirements. A startup may prefer lightweight tools like Promptfoo or Guardrails AI, while enterprises may require robust platforms like TruEra or Robust Intelligence.