Introduction

Prompt engineering tools are platforms and frameworks that help users design, test, optimize, and manage the prompts used to interact with AI models such as large language models (LLMs). Instead of leaving users to experiment with prompts by hand, these tools provide structured workflows, versioning, testing environments, and collaboration features that improve output quality and consistency.

Prompt engineering has become a core skill for developers, marketers, product teams, and AI engineers. As organizations rely more heavily on generative AI for automation, content generation, coding, analytics, and customer support, managing prompts effectively is no longer optional: it is critical for performance, cost control, and reliability.

Real-world use cases include:

- Building AI-powered chatbots and assistants

- Automating content creation workflows

- Enhancing developer productivity with AI copilots

- Running A/B testing for prompt optimization

- Managing enterprise AI workflows and governance

What buyers should evaluate:

- Prompt testing and evaluation capabilities

- Version control and collaboration features

- Integration with LLM APIs and workflows

- Security and compliance controls

- Scalability and performance

- Ease of use for non-technical users

- Cost and pricing flexibility

- Observability and analytics

- Deployment options (cloud vs self-hosted)

Best for: Developers, AI engineers, product managers, marketers, and enterprises building AI-driven workflows, especially those scaling LLM usage across teams.

Not ideal for: Individuals using AI casually or small teams with minimal prompt experimentation needs; basic AI interfaces may be sufficient in such cases.

Key Trends in Prompt Engineering Tools

- Rise of LLM observability platforms with real-time monitoring and debugging

- Increased focus on prompt versioning and lifecycle management

- Integration with AI agents and autonomous workflows

- Growing demand for security, governance, and auditability

- Expansion of no-code and low-code prompt builders

- Support for multi-model orchestration (OpenAI, Anthropic, etc.)

- Emergence of evaluation frameworks and benchmarking tools

- Integration with CI/CD pipelines for AI applications

- Adoption of fine-tuning + prompt hybrid strategies

- Shift toward enterprise-grade prompt management platforms

How We Selected These Tools (Methodology)

- Strong market adoption and developer mindshare

- Feature completeness across prompt lifecycle

- Performance and reliability indicators

- Security posture and enterprise readiness

- Integration capabilities with modern stacks

- Flexibility across use cases (dev, business, AI teams)

- Support for multiple LLM providers

- Community activity and ecosystem growth

- Fit across SMB, mid-market, and enterprise users

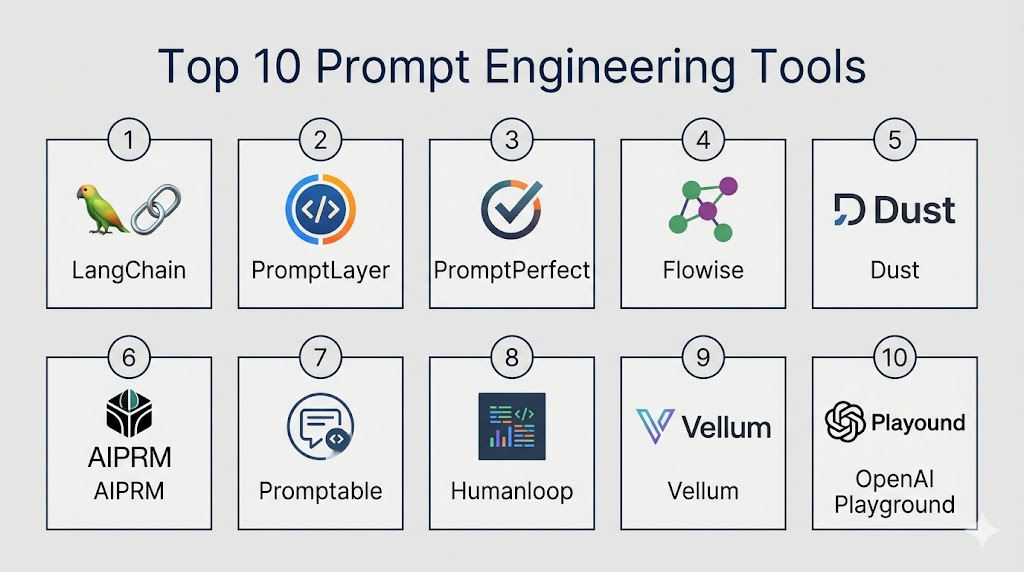

Top 10 Prompt Engineering Tools

#1 — LangChain

Short description: A popular developer framework for building LLM applications, widely used for chaining prompts, tools, and workflows.

Key Features

- Prompt templates and chaining logic

- Memory and context management

- Multi-model support

- Tool and agent integrations

- Extensive ecosystem and plugins

- Debugging and tracing tools
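
To make "prompt templates and chaining logic" concrete, here is a minimal sketch in plain Python. It does not use LangChain's API; `fake_llm`, `make_step`, and `chain` are hypothetical names invented for illustration, and the model call is a stand-in that simply echoes its prompt.

```python
from string import Template
from typing import Callable


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call; a real app would call an LLM API."""
    return f"[model output for: {prompt}]"


def make_step(template: str, llm: Callable[[str], str]) -> Callable[[dict], str]:
    """Bind a prompt template to a model callable, producing one chain step."""
    tmpl = Template(template)

    def step(variables: dict) -> str:
        # Fill the template placeholders, then send the finished prompt to the model.
        return llm(tmpl.substitute(variables))

    return step


# Two steps: the output of the first becomes an input variable of the second.
summarize = make_step("Summarize in one sentence: $text", fake_llm)
translate = make_step("Translate to French: $summary", fake_llm)


def chain(text: str) -> str:
    summary = summarize({"text": text})
    return translate({"summary": summary})


print(chain("Prompt tools help teams manage prompts."))
```

The idea is that each template is a reusable unit, and a chain is just function composition over those units. Frameworks add memory, tracing, and tool calls on top of this basic shape.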

Pros

- Highly flexible for developers

- Strong ecosystem and community

Cons

- Steep learning curve

- Requires coding expertise

Platforms / Deployment

Python / JavaScript / Cloud / Self-hosted

Security & Compliance

Varies / Not publicly stated

Integrations & Ecosystem

Strong integration with LLM APIs and developer tools

- OpenAI, Anthropic

- Vector databases

- APIs and plugins

- Cloud platforms

Support & Community

Large open-source community with extensive documentation

#2 — PromptLayer

Short description: A prompt management and observability platform designed for tracking and optimizing LLM prompts.

Key Features

- Prompt logging and tracking

- Version control

- Performance analytics

- A/B testing

- API integration
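
The logging and version-control features can be pictured with a small in-memory sketch. This is not PromptLayer's API; `PromptRegistry`, `register`, and `record` are hypothetical names, and deriving the version ID from a content hash is one possible design, assumed here for illustration.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Hypothetical in-memory store for prompt versions and request logs."""
    versions: dict = field(default_factory=dict)  # name -> list of (version_id, text)
    log: list = field(default_factory=list)       # one dict per logged request

    def register(self, name: str, text: str) -> str:
        # The version ID comes from the prompt text, so identical text
        # always maps to the same version and is stored only once.
        version_id = hashlib.sha256(text.encode("utf-8")).hexdigest()[:8]
        history = self.versions.setdefault(name, [])
        if all(existing_id != version_id for existing_id, _ in history):
            history.append((version_id, text))
        return version_id

    def record(self, name: str, version_id: str, output: str, latency_s: float) -> None:
        # Tie each model response back to the exact prompt version that produced it.
        self.log.append({
            "prompt": name,
            "version": version_id,
            "output": output,
            "latency_s": latency_s,
        })


registry = PromptRegistry()
v1 = registry.register("greeting", "Say hi to the user.")
v2 = registry.register("greeting", "Greet the user warmly by name.")
registry.record("greeting", v2, "Hello, Sam!", latency_s=0.42)
```

Linking every logged response to a version ID is what makes later analysis possible, such as comparing latency or quality between two versions of the same prompt.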

Pros

- Strong observability features

- Easy integration

Cons

- Limited UI flexibility

- Focused mainly on monitoring

Platforms / Deployment

Web / Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports API-based integrations

- LLM providers

- Developer tools

- Analytics platforms

Support & Community

Growing community with good documentation

#3 — PromptPerfect

Short description: A tool focused on optimizing prompts automatically for better AI responses.

Key Features

- Automated prompt refinement

- Multi-model compatibility

- Performance benchmarking

- Prompt tuning suggestions

- Optimization engine

Pros

- Improves prompt quality quickly

- Easy to use

Cons

- Limited customization depth

- Less control for advanced users

Platforms / Deployment

Web / Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Works with multiple AI models

- OpenAI

- Other LLM APIs

Support & Community

Moderate documentation and support

#4 — Flowise

Short description: A visual drag-and-drop tool for building LLM workflows and prompt pipelines.

Key Features

- Visual workflow builder

- Node-based prompt design

- Integration with LangChain

- API deployment

- Custom components

Pros

- No-code friendly

- Fast prototyping

Cons

- Limited enterprise features

- Performance varies

Platforms / Deployment

Web / Self-hosted / Cloud

Security & Compliance

Varies / Not publicly stated

Integrations & Ecosystem

- LangChain

- APIs

- Databases

Support & Community

Open-source community support

#5 — Dust

Short description: A collaborative AI tool for building internal assistants and managing prompts.

Key Features

- Team collaboration

- Prompt templates

- Workflow automation

- Knowledge base integration

- Custom assistants

Pros

- Strong collaboration features

- Enterprise-friendly

Cons

- Limited customization

- Pricing transparency unclear

Platforms / Deployment

Web / Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Internal data sources

- APIs

- Knowledge systems

Support & Community

Enterprise-focused support

#6 — AIPRM

Short description: A prompt management extension widely used for predefined templates and marketing use cases.

Key Features

- Prompt templates library

- Community sharing

- SEO and marketing prompts

- Easy installation

- Categorized prompts

Pros

- Easy for beginners

- Large template library

Cons

- Limited customization

- Browser dependency

Platforms / Deployment

Browser extension

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- ChatGPT integration

- Template sharing

Support & Community

Active user community

#7 — Promptable

Short description: A testing and evaluation platform for prompts focused on improving output quality.

Key Features

- Prompt testing

- Evaluation metrics

- Regression testing

- Debugging tools

- Collaboration
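
Regression testing for prompts can be sketched as a fixed set of cases that every new prompt version must still pass. The example below is a hypothetical illustration, not Promptable's API: `fake_llm`, `CASES`, and `run_regression` are invented names, and the substring check stands in for whatever evaluation metric a real platform would apply.

```python
from typing import Callable


def fake_llm(prompt: str) -> str:
    """Hypothetical deterministic stand-in for a model call."""
    if "capital of France" in prompt:
        return "The capital of France is Paris."
    return "I am not sure."


# Each case pairs an input with a phrase the output must contain.
CASES = [
    {"input": "What is the capital of France?", "must_contain": "Paris"},
    {"input": "What is the capital of Atlantis?", "must_contain": "not sure"},
]


def run_regression(template: str, llm: Callable[[str], str], cases: list) -> list:
    """Return the inputs whose outputs no longer meet expectations."""
    failures = []
    for case in cases:
        prompt = template.format(question=case["input"])
        output = llm(prompt)
        if case["must_contain"].lower() not in output.lower():
            failures.append(case["input"])
    return failures


failures = run_regression("Answer briefly: {question}", fake_llm, CASES)
print("failed cases:", failures)
```

Running the same case set before and after each prompt change turns "the new prompt feels better" into a check that can gate a deployment.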

Pros

- Strong testing features

- Useful for developers

Cons

- Smaller ecosystem

- Limited integrations

Platforms / Deployment

Web / Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- APIs

- LLM providers

Support & Community

Emerging community

#8 — Humanloop

Short description: A platform for building, evaluating, and deploying AI applications with prompt management.

Key Features

- Prompt versioning

- Evaluation workflows

- Data annotation

- Experiment tracking

- Analytics

Pros

- Strong enterprise features

- Good evaluation tools

Cons

- Learning curve

- Pricing not transparent

Platforms / Deployment

Web / Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- LLM APIs

- Data pipelines

- Analytics tools

Support & Community

Enterprise-grade support

#9 — Vellum

Short description: A platform for prompt engineering, testing, and deployment with a focus on production use.

Key Features

- Prompt testing

- Version control

- Workflow automation

- Monitoring

- Deployment tools

Pros

- Production-ready features

- Strong testing

Cons

- Complex setup

- Limited free usage

Platforms / Deployment

Web / Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- APIs

- LLM platforms

- Dev tools

Support & Community

Professional support available

#10 — OpenAI Playground

Short description: A widely used interface for experimenting with prompts and testing LLM outputs.

Key Features

- Interactive prompt testing

- Parameter tuning

- Model selection

- Real-time outputs

- Simple interface
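
As background on parameter tuning, the sketch below shows the standard temperature-scaled softmax, which is how a temperature setting is commonly understood to reshape a model's next-token distribution. The article does not describe the Playground's internals, so treat this as an assumption; `softmax_with_temperature` and the example logits are hypothetical.

```python
import math


def softmax_with_temperature(logits: list, temperature: float) -> list:
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate tokens
low = softmax_with_temperature(logits, 0.5)   # sharper: favors the top token
high = softmax_with_temperature(logits, 2.0)  # flatter: spreads probability out
print([round(p, 3) for p in low])
print([round(p, 3) for p in high])
```

Lower temperatures concentrate probability on the most likely token, which tends to make outputs more repeatable. Higher temperatures spread probability across alternatives, which tends to make outputs more varied.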

Pros

- Easy to use

- Great for experimentation

Cons

- Not a full management tool

- Limited collaboration

Platforms / Deployment

Web / Cloud

Security & Compliance

Varies / Not publicly stated

Integrations & Ecosystem

- OpenAI ecosystem

- API access

Support & Community

Extensive documentation

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LangChain | Developers | Python/JS | Hybrid | Workflow chaining | N/A |
| PromptLayer | Monitoring | Web | Cloud | Observability | N/A |
| PromptPerfect | Optimization | Web | Cloud | Auto prompt tuning | N/A |
| Flowise | No-code users | Web | Hybrid | Visual builder | N/A |
| Dust | Teams | Web | Cloud | Collaboration | N/A |
| AIPRM | Marketers | Browser | Cloud | Prompt templates | N/A |
| Promptable | Testing | Web | Cloud | Evaluation tools | N/A |
| Humanloop | Enterprises | Web | Cloud | Experiment tracking | N/A |
| Vellum | Production teams | Web | Cloud | Deployment tools | N/A |
| OpenAI Playground | Beginners | Web | Cloud | Easy experimentation | N/A |

Evaluation & Scoring of Prompt Engineering Tools

| Tool Name | Core | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 6 | 9 | 6 | 8 | 8 | 8 | 8.0 |
| PromptLayer | 8 | 8 | 7 | 6 | 7 | 7 | 7 | 7.4 |
| PromptPerfect | 7 | 9 | 6 | 5 | 7 | 6 | 8 | 7.1 |
| Flowise | 7 | 8 | 7 | 5 | 6 | 6 | 8 | 7.0 |
| Dust | 8 | 7 | 7 | 6 | 7 | 7 | 7 | 7.3 |
| AIPRM | 6 | 9 | 5 | 5 | 6 | 6 | 8 | 6.8 |
| Promptable | 7 | 7 | 6 | 5 | 7 | 6 | 7 | 6.9 |
| Humanloop | 9 | 7 | 8 | 7 | 8 | 8 | 7 | 8.1 |
| Vellum | 8 | 7 | 8 | 7 | 8 | 7 | 7 | 7.8 |
| OpenAI Playground | 7 | 10 | 6 | 6 | 8 | 8 | 9 | 7.8 |

How to interpret scores:

- Scores are comparative, not absolute.

- Higher scores indicate stronger overall capability for enterprise use.

- Ease of use matters more for non-technical teams.

- Integration and security scores are critical for production deployments.
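
For readers who want to re-weight the scores for their own priorities, a weighted total is each criterion score multiplied by its weight and then summed. The article does not state the weights behind its totals, so the percentages below are assumptions chosen only for illustration, and totals computed with them will not necessarily match the table. `ASSUMED_WEIGHTS` and `weighted_total` are hypothetical names.

```python
# Assumed weights in percent; the article does not publish its own.
ASSUMED_WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores: dict, weights: dict = ASSUMED_WEIGHTS) -> float:
    """Combine per-criterion scores (0-10) into one number using percent weights."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    return sum(scores[name] * pct for name, pct in weights.items()) / 100


# LangChain's per-criterion scores, copied from the table above.
langchain = {
    "core": 9, "ease": 6, "integrations": 9, "security": 6,
    "performance": 8, "support": 8, "value": 8,
}
print(weighted_total(langchain))  # 7.9 under the assumed weights
```

A team that cares most about security could raise that weight and lower another, then recompute the totals to see whether the ranking changes.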

Which Prompt Engineering Tool Is Right for You?

Solo / Freelancer

- Best: OpenAI Playground, AIPRM, PromptPerfect

- Focus on ease and speed

SMB

- Best: Flowise, PromptLayer, Dust

- Balance between usability and features

Mid-Market

- Best: Vellum, Humanloop

- Need scalability and testing

Enterprise

- Best: LangChain, Humanloop

- Focus on integrations, governance, performance

Budget vs Premium

- Budget: OpenAI Playground, Flowise

- Premium: Humanloop, Vellum

Feature Depth vs Ease of Use

- Deep features: LangChain

- Easy use: AIPRM, PromptPerfect

Integrations & Scalability

- Strong: LangChain, Humanloop

- Moderate: PromptLayer

Security & Compliance Needs

- Enterprise focus: Humanloop, Vellum

- Basic: AIPRM

Frequently Asked Questions (FAQs)

What are prompt engineering tools?

They help design, test, and manage prompts for AI systems to improve output quality and consistency.

Are these tools expensive?

Pricing varies widely; many offer free tiers, while enterprise tools can be costly.

Do I need coding skills?

Some tools, such as LangChain, require coding, while others, such as Flowise and AIPRM, are no-code.

Can I use multiple tools together?

Yes. Many tools expose APIs and can be combined; Flowise, for example, integrates with LangChain.

Are these tools secure?

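Security varies. Enterprise-focused platforms generally offer stronger controls, but most tools listed here do not publicly state their compliance posture, so verify it with the vendor.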

Do they support all AI models?

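Most support major LLM providers, but some are tied to a single ecosystem: AIPRM works with ChatGPT, and OpenAI Playground works with OpenAI models.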

How long does implementation take?

Anywhere from minutes for simple tools to weeks for an enterprise deployment.

Can I switch tools easily?

It depends on your architecture and on how deeply the tool is integrated into your workflows.

What is the biggest mistake users make?

Not testing prompts properly before deployment.

Are prompt tools replacing developers?

No, they enhance productivity but still require expertise.

Conclusion

Prompt engineering tools have become essential for anyone building or scaling AI-powered applications. The ecosystem is evolving rapidly and ranges from simple experimentation platforms to enterprise-grade systems with governance, testing, and observability. The right choice depends on your use case, technical expertise, and scale. Beginners may benefit from simple tools like OpenAI Playground or AIPRM, while enterprises should prioritize platforms like Humanloop or LangChain for advanced workflows.