Introduction

LLM orchestration frameworks are tools that help you design, manage, and scale applications powered by large language models (LLMs). Instead of leaving you to write raw prompts and glue code, these frameworks provide structured ways to connect models, tools, APIs, memory, and workflows into reliable systems.

LLM usage has shifted from simple chatbots to complex, multi-step AI systems such as agents, copilots, and automation pipelines. That shift makes orchestration essential for managing prompts, handling failures, integrating tools, and keeping behavior consistent across deployments.
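
To make "handling failures" concrete, here is a minimal, framework-agnostic sketch of retrying a model call and falling back to a second model. The names `call_with_fallback`, `flaky_model`, and `backup_model` are hypothetical stand-ins, not part of any framework listed in this article.

```python
from typing import Callable, Optional, Sequence

# Hypothetical model client: any callable that takes a prompt and returns text.
ModelFn = Callable[[str], str]


def call_with_fallback(prompt: str, models: Sequence[ModelFn], retries: int = 2) -> str:
    """Try each model in order, retrying each one before moving to the next."""
    last_error: Optional[Exception] = None
    for model in models:
        for _ in range(retries):
            try:
                return model(prompt)
            except Exception as exc:  # production code would catch narrower errors
                last_error = exc
    raise RuntimeError("all models failed") from last_error


def flaky_model(prompt: str) -> str:
    # Simulates a provider that always times out.
    raise TimeoutError("simulated provider timeout")


def backup_model(prompt: str) -> str:
    # Simulates a healthy secondary provider.
    return f"[backup] {prompt.upper()}"


result = call_with_fallback("summarize the report", [flaky_model, backup_model])
print(result)
```

Orchestration frameworks typically wrap this kind of logic so that it does not have to be rewritten for every call site.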

Common use cases include:

- AI copilots for developers, analysts, or customer support

- Automated workflows using agents (data extraction, summarization, reporting)

- Retrieval-augmented generation (RAG) systems for enterprise knowledge

- Multi-model pipelines combining text, code, and structured data

- AI-driven SaaS features like recommendations, search, and personalization

What buyers should evaluate:

- Workflow orchestration and agent capabilities

- Model compatibility (OpenAI, open-source, multi-provider support)

- Observability and debugging tools

- Scalability and performance handling

- Security and compliance features

- Integration ecosystem (databases, APIs, tools)

- Ease of development and learning curve

- Deployment flexibility (cloud vs self-hosted)

Best for: Developers, AI engineers, product teams, startups, and enterprises building production-grade AI applications.

Not ideal for: Small teams using simple prompts or standalone chatbots where orchestration overhead adds unnecessary complexity.

Key Trends in LLM Orchestration Frameworks

- Agent-first architecture: Increasing focus on autonomous AI agents performing multi-step tasks

- RAG standardization: Retrieval pipelines becoming a core component of most frameworks

- Multi-model orchestration: Support for combining proprietary and open-source LLMs

- Observability tools: Built-in tracing, logging, and evaluation systems for debugging LLM outputs

- Security-first design: Growing emphasis on data privacy, prompt injection protection, and governance

- Low-code interfaces: Visual workflow builders for non-developers

- Edge and on-prem deployments: Demand for private deployments due to compliance needs

- Cost optimization layers: Token usage tracking and optimization strategies

- Composable architecture: Modular building blocks for flexibility

- Integration ecosystems: Tight integration with vector databases, APIs, and enterprise tools

How We Selected These Tools (Methodology)

- Evaluated market adoption and developer mindshare across ecosystems

- Assessed feature completeness including agents, memory, RAG, and pipelines

- Considered performance reliability in production environments

- Reviewed security posture signals where publicly available

- Analyzed integration capabilities with tools, APIs, and data systems

- Checked community strength and documentation quality

- Evaluated fit across company sizes (startup to enterprise)

- Balanced open-source and commercial solutions

- Considered ease of onboarding vs advanced capabilities

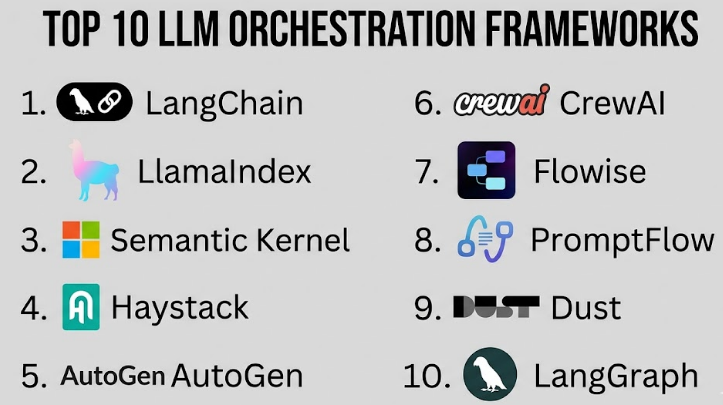

Top 10 LLM Orchestration Frameworks

#1 — LangChain

A widely used open-source framework for building LLM-powered applications. It provides modular components for chains, agents, memory, and integrations, making it suitable for developers building complex workflows.

Key Features

- Chain-based workflow orchestration

- Agent frameworks for tool usage

- Built-in memory handling

- RAG pipeline support

- Extensive integrations (vector DBs, APIs)

- Multi-model compatibility
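
The idea behind chain-based orchestration can be sketched in plain Python: each step takes a state dictionary and returns an updated one, and a chain is just the steps composed in order. This is an illustration of the pattern only, not LangChain's API; `chain`, `build_prompt`, `fake_llm`, and `parse_output` are hypothetical names, and `fake_llm` stands in for a real model call.

```python
from functools import reduce
from typing import Callable

Step = Callable[[dict], dict]


def chain(*steps: Step) -> Step:
    """Compose steps left to right; each receives the previous step's output."""
    return lambda state: reduce(lambda acc, step: step(acc), steps, state)


def build_prompt(state: dict) -> dict:
    return {**state, "prompt": f"Summarize in one line: {state['text']}"}


def fake_llm(state: dict) -> dict:
    # Stand-in for a model call: keeps the first five words of the input text.
    words = state["text"].split()[:5]
    return {**state, "completion": " ".join(words)}


def parse_output(state: dict) -> dict:
    return {**state, "summary": state["completion"].strip().capitalize()}


summarize = chain(build_prompt, fake_llm, parse_output)
out = summarize({"text": "orchestration frameworks connect models tools and memory"})
print(out["summary"])
```

Because every step shares the same signature, steps can be reordered, swapped, or tested in isolation, which is the main appeal of the chain abstraction.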

Pros

- Strong community and ecosystem

- Highly flexible and extensible

Cons

- Steep learning curve for beginners

- Can become complex for large systems

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Basic RBAC and integrations; formal certifications not publicly stated

Integrations & Ecosystem

LangChain integrates with most major LLM providers and data tools.

- Vector databases (Pinecone, Weaviate)

- APIs and SaaS tools

- Open-source model providers

Support & Community

Very active community, strong documentation, frequent updates

#2 — LlamaIndex

A data-centric framework focused on connecting LLMs with external data sources, especially useful for building RAG-based systems.

Key Features

- Data indexing and retrieval pipelines

- Native RAG support

- Query engines and data connectors

- Structured data handling

- Multi-source ingestion
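
As a rough illustration of what an indexing and retrieval pipeline does, the sketch below indexes three made-up documents, ranks them against a question, and builds a prompt from the best match. It deliberately uses bag-of-words cosine similarity instead of learned embeddings, and none of the names (`DOCS`, `retrieve`, `build_prompt`) come from LlamaIndex.

```python
import math
import re
from collections import Counter
from typing import Dict, List

# Made-up documents standing in for an enterprise knowledge base.
DOCS: Dict[str, str] = {
    "vacation": "Employees accrue vacation days monthly and may carry over five days.",
    "expenses": "Submit expense reports with receipts within thirty days of purchase.",
    "security": "Rotate passwords every ninety days and enable two factor authentication.",
}


def vectorize(text: str) -> Counter:
    """Bag-of-words term counts; a real system would use learned embeddings."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Indexing step: vectorize every document once, up front.
INDEX = {doc_id: vectorize(text) for doc_id, text in DOCS.items()}


def retrieve(question: str, k: int = 1) -> List[str]:
    """Return the ids of the k documents most similar to the question."""
    q = vectorize(question)
    ranked = sorted(INDEX, key=lambda doc_id: cosine(q, INDEX[doc_id]), reverse=True)
    return ranked[:k]


def build_prompt(question: str) -> str:
    """Augment the question with retrieved context before calling a model."""
    context = "\n".join(DOCS[doc_id] for doc_id in retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


print(build_prompt("How many vacation days can I carry over?"))
```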

Pros

- Strong for data-heavy applications

- Easy integration with structured/unstructured data

Cons

- Less focus on full orchestration compared to others

- Limited agent features

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Focuses heavily on data integrations.

- Databases and document stores

- Vector DBs

- APIs and connectors

Support & Community

Growing community, good documentation

#3 — Semantic Kernel

Microsoft's open-source SDK for enterprise AI orchestration, with strong support for integrating LLMs into existing applications.

Key Features

- Plugin-based architecture

- AI skill orchestration

- Memory and context handling

- Multi-language SDKs

- Enterprise integration focus
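
A plugin-based architecture boils down to a registry of named functions that an orchestrator (or a model choosing tools) can invoke by name. The toy `Kernel` class below is an assumption-laden sketch of that idea and is not Semantic Kernel's actual API; the plugin and function names are invented for the example.

```python
from typing import Callable, Dict


class Kernel:
    """Toy plugin registry; not Semantic Kernel's actual API."""

    def __init__(self) -> None:
        self._functions: Dict[str, Callable[..., str]] = {}

    def register(self, plugin: str, name: str) -> Callable:
        """Decorator that stores a function under the key 'plugin.name'."""
        def decorator(fn: Callable[..., str]) -> Callable[..., str]:
            self._functions[f"{plugin}.{name}"] = fn
            return fn
        return decorator

    def invoke(self, qualified_name: str, **kwargs) -> str:
        """Look up a registered function by its qualified name and call it."""
        if qualified_name not in self._functions:
            raise KeyError(f"unknown function: {qualified_name}")
        return self._functions[qualified_name](**kwargs)


kernel = Kernel()


@kernel.register("time", "format_date")
def format_date(year: int, month: int, day: int) -> str:
    return f"{year:04d}-{month:02d}-{day:02d}"


@kernel.register("text", "shout")
def shout(text: str) -> str:
    return text.upper() + "!"


print(kernel.invoke("time.format_date", year=2024, month=3, day=9))
print(kernel.invoke("text.shout", text="deploy complete"))
```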

Pros

- Strong enterprise capabilities

- Structured development approach

Cons

- Smaller ecosystem compared to LangChain

- Learning curve for non-enterprise developers

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Enterprise-ready features; certifications not publicly stated

Integrations & Ecosystem

Designed for enterprise ecosystems.

- APIs and enterprise services

- Microsoft ecosystem tools

- Custom plugins

Support & Community

Good documentation, enterprise support options

#4 — Haystack

An open-source framework for building search and question-answering systems powered by LLMs.

Key Features

- RAG pipelines

- Document retrieval systems

- Modular pipeline design

- Integration with search tools

- Scalable architecture

Pros

- Strong for search-based use cases

- Mature open-source ecosystem

Cons

- Less focus on agent workflows

- Requires setup for scaling

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Strong in search and retrieval integrations.

- Elasticsearch

- Vector databases

- APIs

Support & Community

Active open-source community

#5 — AutoGen

A framework focused on building multi-agent systems where AI agents collaborate to complete tasks.

Key Features

- Multi-agent orchestration

- Conversation-based workflows

- Task automation

- Custom agent roles

- Code execution capabilities
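
A conversation-based multi-agent workflow can be pictured as agents taking turns over a shared message history until a termination condition is met. The sketch below assumes two hypothetical rule-based agents (`writer` and `reviewer`) in place of LLM-backed ones, and it does not use AutoGen's API.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (speaker, content)
Agent = Callable[[List[Message]], str]


def writer(history: List[Message]) -> str:
    """Hypothetical agent: drafts, then revises once it has received feedback."""
    got_feedback = any(speaker == "reviewer" for speaker, _ in history)
    return "draft v2 with tests" if got_feedback else "draft v1"


def reviewer(history: List[Message]) -> str:
    """Hypothetical agent: approves only drafts that mention tests."""
    last_draft = history[-1][1]
    return "APPROVED" if "tests" in last_draft else "please add tests"


def run_conversation(task: str, max_turns: int = 6) -> List[Message]:
    """Alternate between agents until one approves or max_turns is reached."""
    history: List[Message] = [("user", task)]
    agents = [("writer", writer), ("reviewer", reviewer)]
    for turn in range(max_turns):
        name, agent = agents[turn % len(agents)]
        reply = agent(history)
        history.append((name, reply))
        if reply == "APPROVED":  # termination condition
            break
    return history


transcript = run_conversation("write a parser")
for speaker, content in transcript:
    print(f"{speaker}: {content}")
```

The `max_turns` cap is the part that "requires careful design" in practice: without a bound or a clear stop signal, agents can loop indefinitely.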

Pros

- Powerful for agent-based workflows

- Flexible collaboration models

Cons

- Experimental in some areas

- Requires careful design

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports integration with tools and APIs.

- Code execution environments

- APIs

- LLM providers

Support & Community

Growing rapidly, active research community

#6 — CrewAI

A lightweight framework for building structured multi-agent workflows with clear task assignments.

Key Features

- Role-based agent orchestration

- Task assignment workflows

- Simple configuration

- Collaboration logic

- Lightweight design
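
Role-based orchestration with task assignment can be sketched as a list of tasks, each tagged with the role that should handle it, executed in order with each result passed along as context. The `Task` dataclass, the `ROLES` table, and `run_crew` are hypothetical and do not mirror CrewAI's API; the lambdas stand in for LLM-backed agents.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Task:
    description: str
    role: str  # which agent role should handle this task


# Hypothetical role handlers taking (task description, prior context).
ROLES: Dict[str, Callable[[str, str], str]] = {
    "researcher": lambda task, context: f"notes on {task}",
    "writer": lambda task, context: f"article based on [{context}]",
    "editor": lambda task, context: context.replace("article", "edited article"),
}


def run_crew(tasks: List[Task]) -> str:
    """Run tasks in order, passing each result to the next task as context."""
    context = ""
    for task in tasks:
        handler = ROLES[task.role]
        context = handler(task.description, context)
    return context


plan = [
    Task("vector databases", role="researcher"),
    Task("write it up", role="writer"),
    Task("polish", role="editor"),
]
print(run_crew(plan))
```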

Pros

- Easy to get started

- Good for structured workflows

Cons

- Limited advanced features

- Smaller ecosystem

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Basic integrations with APIs and tools.

- APIs

- LLM providers

- Custom tools

Support & Community

Smaller but active community

#7 — Flowise

A low-code visual tool for building LLM workflows using a drag-and-drop interface.

Key Features

- Visual workflow builder

- Node-based architecture

- LangChain integration

- Easy deployment

- RAG support

Pros

- Beginner-friendly

- Fast prototyping

Cons

- Limited customization compared to code-first tools

- Not ideal for very complex systems

Platforms / Deployment

Web / Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Integrates via nodes and APIs.

- LangChain components

- APIs

- Databases

Support & Community

Good documentation, growing user base

#8 — PromptFlow

A tool focused on designing, testing, and optimizing LLM workflows with strong evaluation capabilities.

Key Features

- Workflow design tools

- Prompt testing and evaluation

- Debugging tools

- Experiment tracking

- Integration with AI pipelines
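
Prompt testing and evaluation usually means running several prompt variants against the same test cases and scoring the outputs. The sketch below assumes a hypothetical `fake_llm` that obeys an "uppercase" instruction only when the template contains it, and scores by exact match; it is not PromptFlow's API, and the templates and cases are invented.

```python
from typing import Callable, Dict, List, Tuple


def fake_llm(prompt: str) -> str:
    """Hypothetical model: uppercases the input only if the prompt asks for it."""
    text = prompt.split("INPUT:", 1)[1].strip()
    return text.upper() if "uppercase" in prompt.lower() else text


# Two prompt variants under test.
TEMPLATES: Dict[str, str] = {
    "v1": "Repeat the text. INPUT: {text}",
    "v2": "Repeat the text in uppercase. INPUT: {text}",
}

# (input, expected output) pairs.
CASES: List[Tuple[str, str]] = [("hello", "HELLO"), ("ok", "OK"), ("LLM", "LLM")]


def evaluate(template: str, model: Callable[[str], str]) -> float:
    """Return the fraction of test cases where the output matches exactly."""
    hits = sum(model(template.format(text=inp)) == expected for inp, expected in CASES)
    return hits / len(CASES)


scores = {name: evaluate(tpl, fake_llm) for name, tpl in TEMPLATES.items()}
best = max(scores, key=scores.get)
print(scores, "best:", best)
```

Exact match is the simplest possible scorer; real evaluations often swap in similarity metrics or a model-based grader while keeping the same loop.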

Pros

- Strong observability

- Good for optimization workflows

Cons

- Limited orchestration flexibility

- Ecosystem still evolving

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Focus on AI workflow integration.

- AI pipelines

- APIs

- LLM providers

Support & Community

Moderate documentation and support

#9 — Dust

A platform designed for building and deploying AI assistants with orchestration and workflow capabilities.

Key Features

- AI assistant builder

- Workflow orchestration

- Collaboration tools

- Data integration

- Deployment tools

Pros

- Good for internal tools

- Easy deployment

Cons

- Limited flexibility compared to open frameworks

- Less developer control

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Integrates with internal tools and APIs.

- APIs

- Data sources

- SaaS tools

Support & Community

Smaller ecosystem

#10 — LangGraph

A framework built on top of LangChain for creating stateful, graph-based workflows and agents.

Key Features

- Graph-based orchestration

- Stateful workflows

- Agent coordination

- Event-driven execution

- Integration with LangChain
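
What distinguishes a graph-based, stateful workflow from a linear chain is that routing depends on the current state, so the flow can loop back. The sketch below assumes two hypothetical nodes (`draft` and `check`) and a router table; it illustrates the pattern only and is not LangGraph's API.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]
Node = Callable[[State], State]
END = "END"


def draft(state: State) -> State:
    attempts = state.get("attempts", 0) + 1
    return {**state, "attempts": attempts, "draft": f"answer attempt {attempts}"}


def check(state: State) -> State:
    # Hypothetical quality gate: accept on the second attempt.
    return {**state, "ok": state["attempts"] >= 2}


NODES: Dict[str, Node] = {"draft": draft, "check": check}

# Each router inspects the state and names the next node, which allows cycles.
ROUTERS: Dict[str, Callable[[State], str]] = {
    "draft": lambda s: "check",
    "check": lambda s: END if s["ok"] else "draft",
}


def run_graph(start: str, state: State, max_steps: int = 10) -> State:
    """Execute nodes until a router returns END or max_steps is exhausted."""
    current = start
    for _ in range(max_steps):
        state = NODES[current](state)
        current = ROUTERS[current](state)
        if current == END:
            return state
    raise RuntimeError("graph did not finish within max_steps")


final = run_graph("draft", {"question": "What is RAG?"})
print(final["draft"], final["attempts"])
```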

Pros

- Advanced workflow control

- Ideal for complex systems

Cons

- Requires deep understanding

- Still evolving

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Built on LangChain ecosystem.

- LangChain tools

- APIs

- LLM providers

Support & Community

Growing community, tied to LangChain

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LangChain | Developers | Web / Linux / macOS | Hybrid | Modular orchestration | N/A |
| LlamaIndex | Data pipelines | Web / Linux | Cloud / Self-hosted | Data indexing | N/A |
| Semantic Kernel | Enterprise apps | Web / Windows | Hybrid | Plugin architecture | N/A |
| Haystack | Search systems | Linux / Cloud | Self-hosted | RAG pipelines | N/A |
| AutoGen | Multi-agent workflows | Web / Linux | Hybrid | Agent collaboration | N/A |
| CrewAI | Task automation | Web | Hybrid | Role-based agents | N/A |
| Flowise | Low-code workflows | Web | Cloud / Self-hosted | Visual builder | N/A |
| PromptFlow | Workflow optimization | Web | Cloud | Evaluation tools | N/A |
| Dust | AI assistants | Web | Cloud | Assistant builder | N/A |
| LangGraph | Complex orchestration | Web / Linux | Hybrid | Graph workflows | N/A |

Evaluation & Scoring of LLM Orchestration Frameworks

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 6 | 9 | 6 | 8 | 9 | 8 | 8.00 |
| LlamaIndex | 8 | 7 | 8 | 6 | 8 | 8 | 8 | 7.65 |
| Semantic Kernel | 8 | 6 | 8 | 7 | 8 | 7 | 7 | 7.35 |
| Haystack | 8 | 6 | 7 | 6 | 8 | 7 | 7 | 7.10 |
| AutoGen | 8 | 6 | 7 | 6 | 7 | 7 | 7 | 7.00 |
| CrewAI | 7 | 8 | 6 | 5 | 7 | 6 | 8 | 6.85 |
| Flowise | 7 | 9 | 6 | 5 | 7 | 6 | 8 | 7.00 |
| PromptFlow | 7 | 7 | 7 | 6 | 7 | 6 | 7 | 6.80 |
| Dust | 7 | 8 | 6 | 6 | 7 | 6 | 7 | 6.80 |
| LangGraph | 9 | 5 | 8 | 6 | 8 | 7 | 7 | 7.35 |

How to interpret scores:

- Scores are relative across this category, not absolute benchmarks

- “Core” reflects orchestration power and features

- “Ease” reflects onboarding and developer experience

- Weighted total is the sum of each score multiplied by its column weight, which helps compare overall balance

- Higher scores indicate a better fit for production-grade systems

- Lower scores may still be ideal for specific use cases
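
For readers who want to re-weight the criteria for their own priorities, here is a minimal sketch of the weighted-total arithmetic. The weights come from the column headers of the scoring table; the `example` row is hypothetical and is not one of the tools scored above.

```python
# Column weights from the scoring table; they sum to 1.0.
WEIGHTS = {
    "core": 0.25, "ease": 0.15, "integrations": 0.15, "security": 0.10,
    "performance": 0.10, "support": 0.10, "value": 0.15,
}


def weighted_total(scores: dict) -> float:
    """Sum of score * weight over all criteria, rounded to two decimals."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted criteria")
    return round(sum(scores[name] * weight for name, weight in WEIGHTS.items()), 2)


# Hypothetical row, not taken from the table.
example = {
    "core": 8, "ease": 7, "integrations": 6, "security": 5,
    "performance": 7, "support": 6, "value": 9,
}
print(weighted_total(example))
```

Changing the values in `WEIGHTS` (while keeping their sum at 1.0) is a quick way to see how a different emphasis, for example on security, would shift a tool's total.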

Which LLM Orchestration Framework Is Right for You?

Solo / Freelancer

- Best choices: Flowise, CrewAI

- Focus on ease of use and quick setup

SMB

- Best choices: LangChain, LlamaIndex

- Balance between flexibility and scalability

Mid-Market

- Best choices: LangGraph, AutoGen

- More complex workflows and agent systems

Enterprise

- Best choices: Semantic Kernel, LangChain

- Strong integration and governance requirements

Budget vs Premium

- Open-source tools (LangChain, Haystack) offer cost control

- Managed platforms (Dust, PromptFlow) simplify operations

Feature Depth vs Ease of Use

- Deep features: LangChain, LangGraph

- Ease of use: Flowise, CrewAI

Integrations & Scalability

- Best integration ecosystems: LangChain, LlamaIndex

- Best for scaling: Semantic Kernel

Security & Compliance Needs

- Enterprise-focused: Semantic Kernel

- Others: evaluate case-by-case

Frequently Asked Questions (FAQs)

What is an LLM orchestration framework?

A system that manages workflows, prompts, tools, and integrations around large language models to build scalable AI applications.

Do I need orchestration for simple AI apps?

Not always. Simple chatbots may not need it, but complex workflows benefit significantly.

Are these tools free?

Many are open-source, but enterprise features or managed versions may cost money.

How long does implementation take?

Basic setups can take hours, while production systems may take weeks.

Can I switch frameworks later?

Yes, but it may require refactoring depending on architecture.

Are these tools secure?

Security varies. Enterprise-focused tools tend to offer more built-in controls, while many open-source frameworks leave access control, data privacy, and prompt-injection defenses for you to implement.

Do they support multiple LLM providers?

Most modern frameworks support multiple providers.

What is RAG in this context?

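Retrieval-augmented generation (RAG) retrieves relevant passages from external data sources at query time and adds them to the prompt, so the model answers from that context rather than from its training data alone.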

Which tool is easiest to start with?

Flowise and CrewAI are the easiest starting points for beginners.

Which tool is best overall?

It depends on your needs. LangChain is the most versatile, but not always the simplest.

Conclusion

LLM orchestration frameworks have become a core part of building modern AI applications. As organizations move from simple experiments to production-grade systems, these tools help manage complexity, improve reliability, and scale AI capabilities effectively. There is no single “best” framework for everyone. Some tools excel in flexibility and deep customization, while others focus on ease of use and fast deployment. Enterprise teams may prioritize security and integrations, while startups may focus on speed and cost efficiency.