Introduction

Batch processing frameworks are systems that process large volumes of data in discrete chunks (batches) rather than continuously in real time. They collect data over a period, process it in bulk, and produce results at scheduled intervals. This approach suits workloads that can tolerate delayed results in exchange for high throughput, scalability, and cost efficiency.
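The collect-then-process pattern can be illustrated with a minimal, single-process sketch. The names `batched` and `process_batch` and the sample records are assumptions made for illustration; a real framework would distribute this work across many machines and run it on a schedule.

```python
from itertools import islice
from typing import Iterable, Iterator, List


def batched(records: Iterable[int], batch_size: int) -> Iterator[List[int]]:
    """Yield successive lists of up to batch_size records."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(records)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


def process_batch(batch: List[int]) -> int:
    """Hypothetical bulk operation: sum every record in the batch."""
    return sum(batch)


# Seven records accumulated over some period, processed three at a time.
collected = [10, 20, 30, 40, 50, 60, 70]
results = [process_batch(b) for b in batched(collected, 3)]
print(results)  # [60, 150, 70]
```

The last batch is smaller than the others, which is typical: a batch job processes whatever has accumulated when it runs.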

Even in a real-time-first world, batch processing remains critical. Organizations still rely on it for large-scale analytics, reporting, machine learning training, and ETL pipelines. Batch frameworks now integrate with cloud services, AI pipelines, and hybrid architectures, which extends the range of workloads they can handle.

Common use cases include:

- Data warehousing and ETL pipelines

- Financial reporting and reconciliation

- Machine learning model training

- Log processing and historical analysis

- Data migration and transformation

What buyers should evaluate:

- Scalability and distributed processing capability

- Fault tolerance and data reliability

- Ease of development and APIs

- Integration with data lakes and warehouses

- Scheduling and orchestration features

- Cost efficiency and resource utilization

- Security and compliance readiness

- Community and enterprise support

- Compatibility with existing infrastructure

Best for: Data engineers, analytics teams, enterprises with large datasets, and organizations handling scheduled workloads such as reporting, ML pipelines, and historical data processing.

Not ideal for: Real-time use cases like fraud detection or live dashboards. In such cases, stream processing frameworks are more suitable.

Key Trends in Batch Processing Frameworks

- AI/ML integration: Batch frameworks are increasingly used for training large machine learning models

- Hybrid processing models: Unified platforms supporting both batch and streaming

- Cloud-native architecture: Shift toward managed services and serverless batch processing

- Cost optimization: Better resource scheduling and auto-scaling features

- Data lake integration: Tight integration with modern lakehouse architectures

- Workflow automation: Advanced orchestration and scheduling capabilities

- Security enhancements: Encryption, RBAC, and compliance-driven features

- Containerization: Kubernetes-based deployments becoming standard

- Open-source dominance: Strong adoption of open frameworks with enterprise support

- Low-code interfaces: Simplified pipeline creation for non-developers

How We Selected These Tools (Methodology)

- Evaluated market adoption and enterprise usage

- Assessed core batch processing capabilities

- Reviewed performance benchmarks and scalability signals

- Considered security and compliance readiness

- Analyzed integration ecosystems with modern data tools

- Reviewed community strength and enterprise support availability

- Included a mix of open-source and managed services

- Evaluated ease of development and operational complexity

- Considered cost efficiency and deployment flexibility

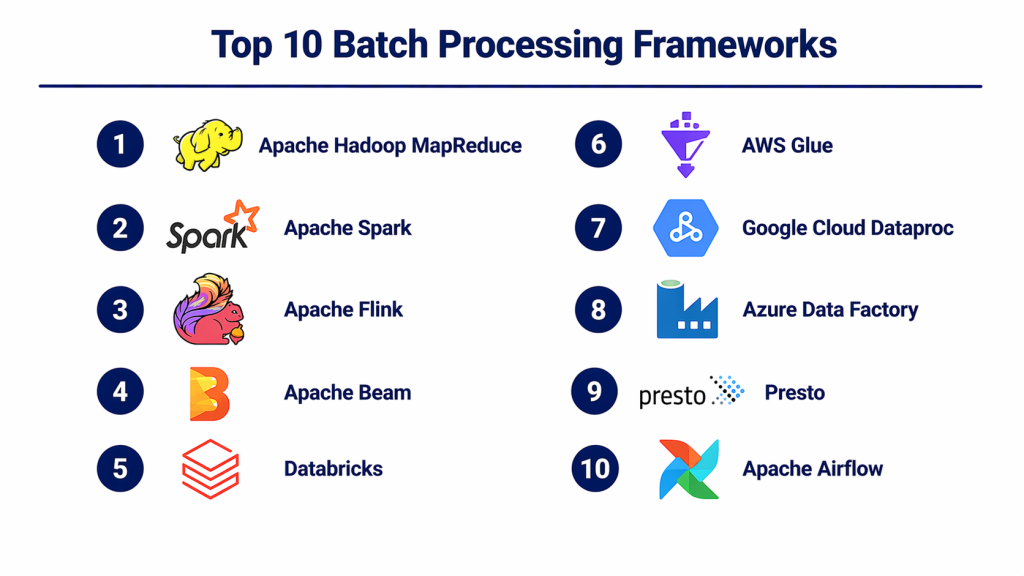

Top 10 Batch Processing Frameworks

#1 — Apache Hadoop MapReduce

Short description: A foundational batch processing framework designed for distributed processing of large datasets across clusters.
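The programming model behind MapReduce can be sketched in a few lines of plain Python. This is not the Hadoop API (which is Java-based); it is a single-process illustration of the map, shuffle, and reduce phases using a word count, and the function names are assumptions chosen for this example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Map: emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle: group all emitted values by key."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reduce: collapse the values for one key into a single count."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run the three phases in sequence over all input lines."""
    pairs = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle(pairs)
    return dict(reduce_phase(k, v) for k, v in grouped.items())


counts = word_count(["big data big jobs", "data jobs run in batch"])
print(counts["big"], counts["data"], counts["batch"])  # 2 2 1
```

In a cluster, map tasks run in parallel on the nodes that hold the input blocks, and the shuffle moves data over the network so that each reducer sees every value for its keys.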

Key Features

- Distributed data processing

- Fault tolerance via HDFS block replication and automatic re-execution of failed tasks

- Scalable cluster architecture

- Data locality optimization

- Integration with Hadoop ecosystem

Pros

- Highly scalable

- Proven reliability

Cons

- Complex setup

- Slower than in-memory frameworks such as Spark, because intermediate results are written to disk

Platforms / Deployment

Self-hosted

Security & Compliance

Supports Kerberos authentication and encryption

Integrations & Ecosystem

Deep integration with Hadoop tools

- HDFS

- Hive

- Pig

Support & Community

Large and mature open-source community

#2 — Apache Spark

Short description: A fast, unified analytics engine supporting batch and stream processing with in-memory computation.

Key Features

- In-memory processing

- Distributed computing

- SQL and DataFrame APIs

- Machine learning libraries

- Fault tolerance

Pros

- High performance

- Rich ecosystem

Cons

- Memory intensive

- Requires tuning

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Supports encryption and authentication

Integrations & Ecosystem

- Hadoop

- Kafka

- Databases

Support & Community

Very strong community and enterprise backing

#3 — Apache Flink (Batch Mode)

Short description: A unified processing framework that supports both batch and streaming with high efficiency.

Key Features

- Unified processing engine

- High throughput

- Fault tolerance

- SQL support

- Event-driven architecture

Pros

- Flexible processing

- High performance

Cons

- Complex setup

- Learning curve

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

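Supports TLS/SSL encryption and Kerberos authentication; role-based access control typically comes from the deployment platform (for example, Kubernetes)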

Integrations & Ecosystem

- Kafka

- Hadoop

- Cloud platforms

Support & Community

Growing enterprise adoption

#4 — Apache Beam

Short description: A unified programming model for defining batch and streaming data pipelines.

Key Features

- Unified API

- Portable pipelines

- Multiple runners

- SDK support

- Scalability

Pros

- Flexible execution

- Multi-platform support

Cons

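- Requires a separate runner (such as Dataflow, Spark, or Flink) to execute pipelines

- The extra abstraction layer adds complexity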

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

Depends on the chosen runner; Beam itself is a programming model, not an execution service

Integrations & Ecosystem

- Dataflow

- Spark

- Flink

Support & Community

Active community

#5 — Databricks (Batch Workloads)

Short description: A cloud-based data platform built on Spark for large-scale batch analytics and AI workloads.

Key Features

- Unified analytics platform

- Auto-scaling clusters

- ML integration

- Collaborative notebooks

- Delta Lake support

Pros

- Easy to use

- Strong AI integration

Cons

- Cost can be high

- Cloud dependency

Platforms / Deployment

Cloud

Security & Compliance

Supports enterprise-grade security features

Integrations & Ecosystem

- Cloud storage

- BI tools

- ML frameworks

Support & Community

Strong enterprise support

#6 — AWS Glue

Short description: Fully managed ETL service designed for batch data processing in AWS.

Key Features

- Serverless ETL

- Auto scaling

- Data catalog

- Job scheduling

- Integration with AWS

Pros

- No infrastructure management

- Easy integration

Cons

- AWS dependency

- Limited customization

Platforms / Deployment

Cloud

Security & Compliance

Supports IAM and encryption

Integrations & Ecosystem

- S3

- Redshift

- Athena

Support & Community

Enterprise support

#7 — Google Cloud Dataproc

Short description: Managed service for running Hadoop and Spark workloads in the cloud.

Key Features

- Managed clusters

- Auto scaling

- Integration with Google Cloud

- Cost optimization

- Fast cluster setup

Pros

- Easy cluster management

- Flexible workloads

Cons

- Cloud dependency

- Requires knowledge of underlying tools

Platforms / Deployment

Cloud

Security & Compliance

Supports IAM and encryption

Integrations & Ecosystem

- BigQuery

- Cloud Storage

- AI tools

Support & Community

Strong enterprise support

#8 — Azure Data Factory

Short description: Cloud-based data integration service for building batch data pipelines.

Key Features

- Pipeline orchestration

- Data integration

- Scheduling

- Monitoring

- ETL capabilities

Pros

- Easy to use

- Strong Azure integration

Cons

- Limited advanced processing

- Azure dependency

Platforms / Deployment

Cloud

Security & Compliance

Supports Azure security features

Integrations & Ecosystem

- Azure storage

- SQL databases

- Power BI

Support & Community

Enterprise support

#9 — Presto (Batch Query Engine)

Short description: Distributed SQL query engine designed for fast batch analytics on large datasets.
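The kind of aggregate query such an engine runs can be shown with a small local example. The sketch below uses Python's built-in `sqlite3` module purely as a stand-in, not a Presto client, and the `orders` table and its rows are made up for illustration. Presto would execute comparable SQL across a cluster against data lake or database sources.

```python
import sqlite3

# sqlite3 is a local stand-in; the table and its rows are illustrative only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("eu", 120.0), ("us", 80.0), ("eu", 30.0), ("us", 20.0), ("apac", 50.0)],
)

# A typical batch analytics query: scan everything, then aggregate per group.
rows = conn.execute(
    "SELECT region, COUNT(*) AS order_count, SUM(amount) AS revenue "
    "FROM orders GROUP BY region ORDER BY region"
).fetchall()
conn.close()

print(rows)  # [('apac', 1, 50.0), ('eu', 2, 150.0), ('us', 2, 100.0)]
```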

Key Features

- SQL-based querying

- Distributed execution

- High performance

- Integration with data lakes

- Scalability

Pros

- Fast query execution

- Easy SQL interface

Cons

- Limited ETL features

- Requires setup

Platforms / Deployment

Self-hosted

Security & Compliance

Supports TLS encryption plus LDAP and Kerberos authentication

Integrations & Ecosystem

- Hive

- S3

- Databases

Support & Community

Active open-source community

#10 — Apache Airflow

Short description: Workflow orchestration platform used for scheduling and managing batch processing pipelines.
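The core idea of DAG-based orchestration is that each task runs only after the tasks it depends on have finished. The sketch below shows that ordering with Python's standard `graphlib` module. It does not use the Airflow API, and the task names and the `make_task` helper are hypothetical.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Callable, Dict, List, Set

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies: Dict[str, Set[str]] = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}

run_log: List[str] = []


def make_task(name: str) -> Callable[[], None]:
    """Build a placeholder task that only records that it ran."""
    def task() -> None:
        run_log.append(name)
    return task


tasks = {name: make_task(name) for name in dependencies}

# static_order() yields each task only after all of its dependencies.
for name in TopologicalSorter(dependencies).static_order():
    tasks[name]()

print(run_log)  # ['extract', 'transform', 'load', 'report']
```

A real orchestrator adds what this sketch leaves out: schedules, retries, parallel execution of independent tasks, and monitoring.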

Key Features

- DAG-based workflows

- Scheduling

- Monitoring

- Extensible architecture

- API support

Pros

- Highly flexible

- Strong ecosystem

Cons

- Not a processing engine itself

- Requires setup

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Supports authentication and RBAC

Integrations & Ecosystem

- Spark

- Databases

- Cloud services

Support & Community

Large and active community

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Hadoop MapReduce | Legacy batch jobs | Linux | Self-hosted | Distributed processing | N/A |
| Apache Spark | High-speed batch | Multi-platform | Hybrid | In-memory processing | N/A |
| Apache Flink | Unified workloads | Multi-platform | Hybrid | Stream + batch | N/A |
| Apache Beam | Portable pipelines | Multi-platform | Hybrid | Multi-runner support | N/A |
| Databricks | Enterprise analytics | Cloud | Cloud | Unified AI platform | N/A |
| AWS Glue | Serverless ETL | Cloud | Cloud | Managed ETL | N/A |
| Dataproc | Managed clusters | Cloud | Cloud | Hadoop/Spark service | N/A |
| Azure Data Factory | Data pipelines | Cloud | Cloud | Workflow orchestration | N/A |
| Presto | SQL analytics | Multi-platform | Self-hosted | Fast SQL queries | N/A |
| Apache Airflow | Workflow orchestration | Multi-platform | Hybrid | DAG workflows | N/A |

Evaluation & Scoring of Batch Processing Frameworks

| Tool Name | Core | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Hadoop | 8 | 5 | 8 | 7 | 7 | 8 | 8 | 7.3 |
| Spark | 9 | 7 | 9 | 8 | 9 | 9 | 8 | 8.6 |
| Flink | 9 | 6 | 9 | 8 | 9 | 8 | 8 | 8.4 |
| Beam | 8 | 6 | 9 | 7 | 8 | 7 | 8 | 7.9 |
| Databricks | 9 | 8 | 9 | 9 | 9 | 9 | 7 | 8.7 |
| AWS Glue | 8 | 8 | 9 | 9 | 8 | 9 | 7 | 8.4 |
| Dataproc | 8 | 7 | 9 | 8 | 8 | 8 | 8 | 8.1 |
| Azure Data Factory | 7 | 9 | 8 | 9 | 7 | 9 | 7 | 8.0 |
| Presto | 8 | 7 | 8 | 6 | 9 | 7 | 8 | 7.8 |
| Airflow | 7 | 7 | 9 | 8 | 7 | 9 | 8 | 7.9 |

How to interpret scores:

- Scores are comparative and reflect typical use cases

- A higher score does not always mean a better fit for your needs

- Managed enterprise tools tend to score higher because of their broader features and support

- Simpler tools may be easier to adopt despite lower scores

- Evaluate based on workload type and team capability
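Because priorities differ between teams, it can help to recompute a weighted total with your own weights. The sketch below shows the arithmetic. The weights are assumptions made for illustration (the article does not state the ones behind its Weighted Total column), so the result will not necessarily match the table. The `weighted_total` helper is hypothetical; the Spark scores are taken from the table above.

```python
from typing import Dict

# Assumed weights for illustration only; the article does not state its own.
WEIGHTS: Dict[str, float] = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Return the sum of score * weight over all criteria."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(scores[name] * weights[name] for name in weights)


# Per-criterion scores for Spark, copied from the scoring table.
spark = {
    "core": 9, "ease": 7, "integrations": 9, "security": 8,
    "performance": 9, "support": 9, "value": 8,
}
print(round(weighted_total(spark), 2))  # 8.45 under the assumed weights
```

Raising the weight on the criteria that matter most to your team, such as ease of use for a small team or security for a regulated one, can change the ranking.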

Which Batch Processing Framework Is Right for You?

Solo / Freelancer

- Choose AWS Glue or Azure Data Factory

- Avoid complex cluster-based systems

SMB

- Use Spark or Dataproc

- Balance performance and cost

Mid-Market

- Consider Flink or Beam

- Focus on flexibility and scalability

Enterprise

- Choose Databricks or Spark

- Prioritize security, performance, and integration

Budget vs Premium

- Open-source = lower cost, higher effort

- Managed services = higher cost, lower effort

Feature Depth vs Ease of Use

- Spark = deep features

- Azure Data Factory = ease of use

Integrations & Scalability

- Cloud platforms offer best scalability

- Open-source offers flexibility

Security & Compliance Needs

- Enterprises should prefer cloud-managed services

Frequently Asked Questions (FAQs)

What is batch processing?

It processes large datasets in chunks at scheduled intervals.

Is batch processing still relevant?

Yes, especially for analytics and reporting workloads.

Which tool is best for beginners?

Managed tools like AWS Glue are easier to start with.

Are these tools expensive?

Cost depends on scale and deployment model.

Can batch and streaming work together?

Yes, many tools support hybrid processing.

Do I need coding skills?

Most tools require some programming knowledge.

Are they secure?

Most provide encryption and access control features.

Can I migrate between tools?

Yes, but it requires planning and effort.

What industries use batch processing?

Finance, healthcare, retail, and telecom.

Is open-source reliable?

Yes, widely used in production environments.

Conclusion

Batch processing frameworks remain a core part of modern data architectures. While real-time processing gets a lot of attention, batch processing is still essential for large-scale analytics, ETL pipelines, and machine learning workloads. The right framework depends on your specific requirements: high performance, ease of use, or seamless cloud integration.