Introduction

Experiment tracking tools are platforms that help data scientists and machine learning teams log, monitor, compare, and reproduce experiments. They capture key details such as model parameters, datasets, metrics, code versions, and outputs, so that experiments stay organized and reproducible instead of scattered across notebooks and spreadsheets.

As teams run hundreds or thousands of experiments across different models, hyperparameters, and datasets, experiment tracking has become essential. Without structured tracking, it is nearly impossible to tell what worked, what failed, and why. These tools enable faster iteration, better collaboration, and more reliable production deployment.
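
To make the idea concrete, here is a minimal sketch of what a single tracked run might record, using only the Python standard library. The `RunRecord` fields, the `log_run` and `load_runs` helpers, and the `runs.jsonl` file name are illustrative assumptions, not the storage format of any tool covered in this article.

```python
import json
import tempfile
import time
import uuid
from dataclasses import dataclass, field, asdict
from pathlib import Path


@dataclass
class RunRecord:
    """One experiment run: the details a tracking tool typically captures."""
    params: dict        # hyperparameters, e.g. learning rate
    metrics: dict       # results, e.g. validation accuracy
    dataset: str        # dataset name or version label
    code_version: str   # e.g. a Git commit hash
    run_id: str = field(default_factory=lambda: uuid.uuid4().hex[:8])
    started_at: float = field(default_factory=time.time)


def log_run(record: RunRecord, log_path: Path) -> None:
    """Append the run as one JSON line so earlier runs are never overwritten."""
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")


def load_runs(log_path: Path) -> list[dict]:
    """Read every logged run back for comparison."""
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


if __name__ == "__main__":
    # Hypothetical values, used only to show the round trip.
    log_path = Path(tempfile.mkdtemp()) / "runs.jsonl"
    log_run(RunRecord({"lr": 0.01}, {"val_acc": 0.91}, "reviews-v2", "abc1234"), log_path)
    log_run(RunRecord({"lr": 0.001}, {"val_acc": 0.94}, "reviews-v2", "abc1234"), log_path)
    best = max(load_runs(log_path), key=lambda r: r["metrics"]["val_acc"])
    print(best["params"], best["metrics"])
```

An append-only log like this is roughly the point where spreadsheets stop scaling. Dedicated tools add a UI, artifact storage, and team access on top of the same basic record.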

Real-world use cases

- Hyperparameter tuning for deep learning models

- A/B testing of ML models in production

- Tracking NLP model performance across datasets

- Comparing feature engineering strategies

- Monitoring model drift experiments
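
As an illustration of the first use case above, the sketch below runs a small grid search and keeps one record per configuration so the runs can be ranked afterwards. The `train_and_evaluate` function is a hypothetical stand-in that returns an invented score; a real project would train and validate a model there.

```python
import itertools


def train_and_evaluate(learning_rate: float, batch_size: int) -> float:
    """Hypothetical stand-in for a training job; returns a toy validation score.

    The formula is invented for illustration and peaks at
    learning_rate=0.01, batch_size=64.
    """
    return 1.0 - abs(learning_rate - 0.01) * 10 - abs(batch_size - 64) / 1000


def grid_sweep(grid: dict) -> list[dict]:
    """Run every combination in the grid and record params plus the score."""
    names = list(grid)
    runs = []
    for values in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        runs.append({"params": params, "score": train_and_evaluate(**params)})
    return runs


if __name__ == "__main__":
    grid = {"learning_rate": [0.1, 0.01, 0.001], "batch_size": [32, 64]}
    runs = grid_sweep(grid)
    # Rank runs from best to worst so the comparison is explicit.
    for run in sorted(runs, key=lambda r: r["score"], reverse=True):
        print(f"{run['score']:.3f}  {run['params']}")
```

Keeping the parameters next to the score for every run, not just the winner, is what makes it possible to see later why one configuration beat another.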

What buyers should evaluate

- Experiment logging capabilities (metrics, parameters, artifacts)

- Visualization and comparison tools

- Collaboration features and team workflows

- Integration with ML frameworks

- Version control and reproducibility

- Scalability for large experiments

- Deployment flexibility (cloud vs self-hosted)

- Security and access control

- Cost and pricing model

- Ease of onboarding

Best for: Data scientists, ML engineers, AI teams, research labs, and organizations building scalable ML workflows.

Not ideal for: Small teams doing simple analytics or single-model workflows where manual tracking (e.g., spreadsheets) is sufficient.

Key Trends in Experiment Tracking Tools

- AI-assisted experiment analysis to suggest optimal configurations

- Tighter integration with MLOps platforms and CI/CD pipelines

- Unified ML lifecycle platforms combining tracking, deployment, and monitoring

- Real-time experiment dashboards for faster insights

- Collaboration-first design with shared workspaces and versioning

- Cloud-native adoption with managed experiment tracking services

- Open-source ecosystem growth for flexibility and cost control

- Improved reproducibility standards with metadata tracking

- Security and governance enhancements for enterprise adoption

- Integration with data versioning tools for end-to-end traceability

How We Selected These Tools (Methodology)

- Evaluated industry adoption and developer mindshare

- Assessed experiment tracking depth and usability

- Reviewed performance with large-scale ML workloads

- Considered security and compliance capabilities

- Analyzed integration with ML frameworks and data tools

- Evaluated deployment flexibility (cloud, hybrid, on-prem)

- Reviewed community support and documentation quality

- Considered fit for different organization sizes

- Assessed innovation in AI and automation features

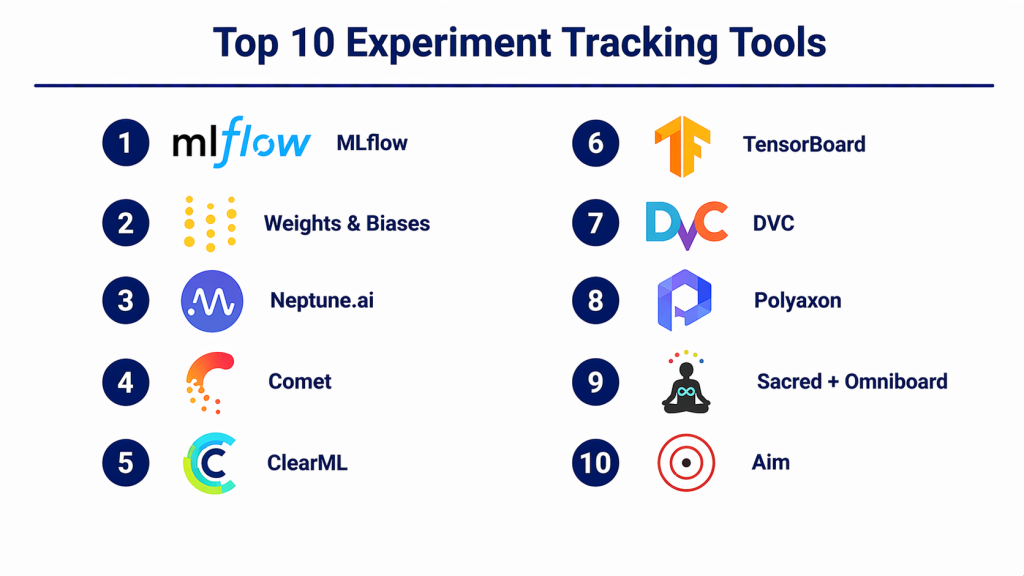

Top 10 Experiment Tracking Tools

#1 — MLflow

Short description: A widely adopted open-source platform for managing the ML lifecycle, including experiment tracking, model packaging, and deployment.

Key Features

- Experiment logging (parameters, metrics, artifacts)

- Model registry

- REST API support

- UI dashboard for experiments

- Multi-language support

- Integration with multiple ML frameworks

Pros

- Strong open-source ecosystem

- Flexible and widely supported

Cons

- Requires setup for production use

- UI can feel basic

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Highly extensible and integrates with major ML tools.

- TensorFlow

- PyTorch

- Spark

- Databricks

Support & Community

Very strong community support and extensive documentation.

#2 — Weights & Biases (W&B)

Short description: A popular experiment tracking platform with advanced visualization and collaboration features for ML teams.

Key Features

- Real-time experiment tracking

- Interactive dashboards

- Hyperparameter sweeps

- Artifact versioning

- Team collaboration tools

- Automated reports

Pros

- Excellent visualization tools

- Easy to use

Cons

- Pricing can scale quickly

- Requires internet connectivity for cloud features

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC (others not publicly stated)

Integrations & Ecosystem

Integrates seamlessly with ML workflows.

- PyTorch

- TensorFlow

- Hugging Face

Support & Community

Strong documentation and enterprise support.

#3 — Neptune.ai

Short description: A metadata store for experiments, designed for tracking complex ML workflows and large-scale experiments.

Key Features

- Experiment metadata tracking

- Model versioning

- Collaboration tools

- Custom dashboards

- API-based logging

- Large dataset handling

Pros

- Flexible metadata tracking

- Good scalability

Cons

- Learning curve

- Limited offline capabilities

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports a wide range of ML tools.

- PyTorch

- TensorFlow

- Scikit-learn

Support & Community

Growing community and support.

#4 — Comet

Short description: An experiment tracking and model management platform designed for deep learning and production ML teams.

Key Features

- Experiment tracking

- Model registry

- Visualization tools

- Hyperparameter optimization

- Collaboration features

- API integrations

Pros

- Strong visualization capabilities

- Good model management

Cons

- Pricing complexity

- UI can be overwhelming

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, encryption (others not publicly stated)

Integrations & Ecosystem

Integrates with ML pipelines.

- Keras

- PyTorch

- TensorFlow

Support & Community

Commercial support with documentation.

#5 — ClearML

Short description: An open-source MLOps platform with experiment tracking, pipeline orchestration, and automation features.

Key Features

- Experiment tracking

- Pipeline automation

- Dataset versioning

- Model management

- Resource orchestration

- Open-source flexibility

Pros

- End-to-end ML platform

- Cost-effective

Cons

- Setup complexity

- UI improvements needed

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports various ML tools and frameworks.

- PyTorch

- TensorFlow

- Kubernetes

Support & Community

Active community with growing adoption.

#6 — TensorBoard

Short description: A visualization toolkit for inspecting training runs, primarily used with TensorFlow but usable from other frameworks.

Key Features

- Metrics visualization

- Graph visualization

- Embedding visualization

- Performance tracking

- Real-time updates

- Plugin support

Pros

- Free and widely used

- Strong visualization

Cons

- Limited to visualization

- Not a full experiment tracking platform

Platforms / Deployment

Web / Local

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Primarily integrates with TensorFlow ecosystem.

- TensorFlow

- PyTorch (via torch.utils.tensorboard)

Support & Community

Strong open-source community.

#7 — DVC (Data Version Control)

Short description: A data and experiment versioning tool that integrates with Git for reproducible ML workflows.

Key Features

- Data versioning

- Experiment tracking

- Pipeline management

- Reproducibility

- Git integration

- CLI-based workflows

Pros

- Strong reproducibility

- Works well with Git

Cons

- CLI-heavy

- Limited UI

Platforms / Deployment

Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Works with version control systems.

- Git

- CI/CD tools

Support & Community

Strong open-source support.
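
Data versioning rests on the idea that a dataset can be identified by a hash of its contents, so any change to the data yields a new version identifier. The sketch below illustrates that idea with the Python standard library only. It is not DVC's implementation, and the `file_fingerprint` helper and the file name are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def file_fingerprint(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks to bound memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


if __name__ == "__main__":
    data_file = Path(tempfile.mkdtemp()) / "train.csv"
    data_file.write_text("id,label\n1,0\n2,1\n", encoding="utf-8")
    v1 = file_fingerprint(data_file)

    # Appending a row changes the contents, so the fingerprint changes too.
    with data_file.open("a", encoding="utf-8") as f:
        f.write("3,1\n")
    v2 = file_fingerprint(data_file)

    print("version changed:", v1 != v2)
```

Storing a fingerprint like this alongside each run's parameters and metrics ties a result to the exact data that produced it.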

#8 — Polyaxon

Short description: A platform for managing ML experiments, pipelines, and deployments in Kubernetes environments.

Key Features

- Experiment tracking

- Pipeline orchestration

- Resource management

- Kubernetes-native

- Model lifecycle support

- Visualization dashboards

Pros

- Scalable architecture

- Kubernetes integration

Cons

- Complex setup

- Requires Kubernetes knowledge

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

RBAC (others not publicly stated)

Integrations & Ecosystem

Designed for cloud-native ML.

- Kubernetes

- Docker

Support & Community

Moderate community support.

#9 — Sacred + Omniboard

Short description: A lightweight experiment tracking framework (Sacred) combined with a web dashboard (Omniboard) for visualizing runs.

Key Features

- Experiment configuration tracking

- Logging system

- Lightweight architecture

- Extensible design

- Visualization dashboard

- Open-source

Pros

- Simple and lightweight

- Highly customizable

Cons

- Limited enterprise features

- Smaller ecosystem

Platforms / Deployment

Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Flexible integration via Python.

- Python frameworks

- Custom scripts

Support & Community

Small but dedicated community.

#10 — Aim

Short description: An open-source experiment tracking tool focused on performance and scalability.

Key Features

- Fast experiment tracking

- Scalable storage

- Visualization dashboards

- Query system

- Lightweight integration

- CLI and UI

Pros

- High performance

- Easy integration

Cons

- Limited enterprise features

- Smaller ecosystem

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Works with ML pipelines and frameworks.

- PyTorch

- TensorFlow

Support & Community

Growing open-source community.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| MLflow | Developers | Web, Linux | Cloud / Self-hosted | Open-source flexibility | N/A |
| W&B | Teams | Web | Cloud / Hybrid | Visualization dashboards | N/A |
| Neptune.ai | Enterprises | Web | Cloud | Metadata tracking | N/A |
| Comet | ML teams | Web | Cloud / Hybrid | Visualization tools | N/A |
| ClearML | Full ML workflows | Web | Cloud / Self-hosted | Pipeline automation | N/A |
| TensorBoard | TensorFlow users | Web | Local | Visualization | N/A |
| DVC | Dev teams | CLI | Self-hosted | Git integration | N/A |
| Polyaxon | Cloud-native teams | Web | Cloud / Self-hosted | Kubernetes-native | N/A |
| Sacred + Omniboard | Lightweight use | Local | Self-hosted | Simplicity | N/A |
| Aim | Open-source users | Web | Cloud / Self-hosted | High performance | N/A |

Evaluation & Scoring of Experiment Tracking Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| MLflow | 9 | 7 | 9 | 6 | 8 | 9 | 9 | 8.30 |
| W&B | 9 | 9 | 8 | 8 | 9 | 9 | 7 | 8.45 |
| Neptune.ai | 8 | 7 | 8 | 7 | 8 | 8 | 7 | 7.60 |
| Comet | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 7.75 |
| ClearML | 9 | 7 | 8 | 6 | 8 | 7 | 9 | 7.95 |
| TensorBoard | 7 | 8 | 6 | 5 | 7 | 9 | 10 | 7.45 |
| DVC | 8 | 6 | 7 | 6 | 7 | 8 | 9 | 7.40 |
| Polyaxon | 8 | 6 | 7 | 7 | 8 | 7 | 7 | 7.20 |
| Sacred + Omniboard | 6 | 7 | 6 | 5 | 6 | 6 | 8 | 6.35 |
| Aim | 7 | 8 | 7 | 5 | 9 | 7 | 8 | 7.30 |

How to interpret:

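Each weighted total is the sum of a tool's criterion scores multiplied by the column weights shown in the table header. The scores compare tools across dimensions relevant to ML teams; a higher total indicates stronger overall capabilities, but the right choice depends on your team size, infrastructure, and use case.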
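
A weighted total can be checked by recomputing it from the per-criterion scores and the header weights. The snippet below is a small sketch of that calculation; the `WEIGHTS` mapping and the `weighted_total` function are names introduced here for illustration, and the example row uses the MLflow scores from the table.

```python
# Column weights in percent, taken from the table header; they sum to 100.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores: dict) -> float:
    """Weighted average of per-criterion scores, on the same scale as the inputs."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted criteria")
    # Integer weights keep the sum exact until the final division.
    return sum(scores[name] * WEIGHTS[name] for name in WEIGHTS) / 100


if __name__ == "__main__":
    mlflow_scores = {
        "core": 9, "ease": 7, "integrations": 9, "security": 6,
        "performance": 8, "support": 9, "value": 9,
    }
    print(f"MLflow weighted total: {weighted_total(mlflow_scores):.2f}")  # prints 8.30
```

Changing the weights to reflect your own priorities (for example, raising Security for a regulated environment) and recomputing is often more useful than reading the published totals as a fixed ranking.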

Which Experiment Tracking Tool Is Right for You?

Solo / Freelancer

Aim, DVC, or MLflow are ideal due to simplicity and cost-effectiveness.

SMB

ClearML and Comet provide a good balance of features and usability.

Mid-Market

Neptune.ai and W&B offer strong collaboration and scalability.

Enterprise

W&B, Comet, and MLflow with enterprise support are strong candidates.

Budget vs Premium

- Budget: MLflow, DVC, Aim

- Premium: W&B, Comet

Feature Depth vs Ease of Use

- Deep features: ClearML, MLflow

- Easy use: W&B

Integrations & Scalability

- Best integrations: MLflow, W&B

- Highly scalable: Neptune

Security & Compliance Needs

- Enterprise security: W&B, Comet

Frequently Asked Questions (FAQs)

What are experiment tracking tools?

They track ML experiments, including parameters, metrics, and results for reproducibility.

Why are they important?

They help teams understand which experiments work and ensure consistency.

Are they expensive?

Some are free (open-source), while others use subscription pricing.

Can I use them with any ML framework?

Most tools support major frameworks like TensorFlow and PyTorch.

How long does implementation take?

Basic setup can take hours; full integration may take weeks.

Do they support collaboration?

Yes, most modern tools offer team collaboration features.

Are they secure?

Enterprise tools offer security features; details vary.

Can I switch tools later?

Yes, but migration requires effort.

What mistakes should I avoid?

Logging too little metadata (for example, omitting the dataset version or random seed) and treating reproducibility as an afterthought.
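
As a rough sketch of the metadata worth capturing with every run, the helper below collects the interpreter version, platform, Git commit, random seed, and dataset version. The `run_metadata` and `git_commit` functions and the field names are hypothetical, and the Git lookup falls back to "unknown" when Git or a repository is not available.

```python
import platform
import subprocess
import sys


def git_commit() -> str:
    """Return the current Git commit hash, or 'unknown' if it cannot be read."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True, text=True, check=True,
        )
        return result.stdout.strip()
    except (subprocess.CalledProcessError, OSError):
        # Not inside a repository, or Git is not installed.
        return "unknown"


def run_metadata(seed: int, dataset_version: str) -> dict:
    """Collect the context needed to rerun an experiment later."""
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "git_commit": git_commit(),
        "seed": seed,
        "dataset_version": dataset_version,
    }


if __name__ == "__main__":
    # Hypothetical seed and dataset label.
    for key, value in run_metadata(seed=42, dataset_version="reviews-v2").items():
        print(f"{key}: {value}")
```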

What are alternatives?

Manual tracking, spreadsheets, or simple logging systems.

Conclusion

Experiment tracking tools are no longer optional for serious machine learning workflows; they are a foundational requirement for building reliable, scalable, and reproducible AI systems. As experimentation becomes more complex, these tools help teams move faster while maintaining clarity and control over their work.

There is no single “best” tool. Open-source solutions like MLflow and DVC provide flexibility and cost advantages, while platforms like Weights & Biases and Comet offer advanced collaboration and visualization for larger teams. Your choice should depend on your team’s size, infrastructure, and MLOps maturity.