Introduction

Model monitoring and drift detection tools are platforms that help teams track how machine learning models behave after deployment. In simple terms, they help keep a model performing well under real-world conditions by detecting data drift, concept drift, performance degradation, and anomalies early enough to act on them.

This matters more than ever in modern AI-driven systems. Businesses rely heavily on models for decisions such as fraud detection, recommendations, pricing, and healthcare diagnostics, so unnoticed drift can lead to poor outcomes, financial loss, or compliance risk.

Real-world use cases:

- Detecting fraud model degradation in banking systems

- Monitoring recommendation systems in e-commerce

- Ensuring fairness in hiring or credit scoring models

- Tracking predictive maintenance models in manufacturing

- Validating NLP models in customer support automation

What buyers should evaluate:

- Drift detection accuracy and methods

- Real-time vs batch monitoring capabilities

- Alerting and observability features

- Integration with ML pipelines and data platforms

- Explainability and root cause analysis

- Scalability and performance

- Security and compliance readiness

- Cost structure and pricing transparency

- Ease of setup and usability
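To make "drift detection methods" concrete when comparing vendors, it helps to know what one such method computes. The sketch below is a minimal, standard-library implementation of the two-sample Kolmogorov-Smirnov statistic, one commonly used test for drift in a numeric feature. The function name and sample values are illustrative assumptions and do not reflect how any tool listed here implements it.

```python
from bisect import bisect_right


def ks_statistic(reference, current):
    """Two-sample Kolmogorov-Smirnov statistic.

    Returns the largest gap between the empirical CDFs of the two samples:
    0.0 means the samples look identical, 1.0 means they do not overlap.
    """
    ref = sorted(reference)
    cur = sorted(current)
    if not ref or not cur:
        raise ValueError("both samples must be non-empty")

    max_gap = 0.0
    # The empirical CDFs only change at observed values, so it is enough
    # to compare them at every value seen in either sample.
    for x in ref + cur:
        cdf_ref = bisect_right(ref, x) / len(ref)
        cdf_cur = bisect_right(cur, x) / len(cur)
        max_gap = max(max_gap, abs(cdf_ref - cdf_cur))
    return max_gap


# Illustrative values: a feature as seen at training time vs. in production.
training_ages = [22, 25, 31, 38, 40, 44, 52, 57]
production_ages = [41, 45, 49, 53, 58, 61, 66, 70]
print(ks_statistic(training_ages, production_ages))
```

Production tools typically convert a statistic like this into a p-value or compare it against a configurable threshold, and they may choose a different test depending on feature type and sample size.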

Best for: ML engineers, data scientists, AI platform teams, and enterprises deploying production ML systems across industries like fintech, healthcare, retail, and SaaS.

Not ideal for: Teams in early experimentation stages with no production models, or those running simple rule-based systems where drift monitoring is not required.

Key Trends in Model Monitoring & Drift Detection Tools

- AI-driven anomaly detection: Platforms are embedding advanced ML to detect subtle drift patterns automatically.

- Real-time observability: Streaming-based monitoring is becoming standard for high-frequency use cases.

- Explainability integration: Tools now combine monitoring with root cause analysis and feature attribution.

- Unified MLOps platforms: Monitoring is increasingly part of end-to-end ML lifecycle tools.

- Data-centric monitoring: Focus is shifting from model metrics to data quality and lineage.

- Compliance-first design: GDPR, HIPAA, and audit readiness are influencing product features.

- Auto-remediation workflows: Drift alerts increasingly trigger retraining pipelines automatically.

- Multi-cloud and hybrid deployments: Flexibility across infrastructure is now expected.

- Cost optimization features: Monitoring pipelines are becoming more efficient to reduce overhead.

How We Selected These Tools (Methodology)

- Evaluated market adoption and industry usage across enterprises and startups

- Assessed feature completeness in monitoring, drift detection, alerting, and explainability

- Reviewed performance reliability for large-scale production environments

- Considered security posture signals such as enterprise readiness and compliance support

- Analyzed integration capabilities with ML frameworks, data platforms, and pipelines

- Ensured coverage across segments (open-source, enterprise, developer-first tools)

- Evaluated community and ecosystem strength

- Focused on real-world usability and scalability

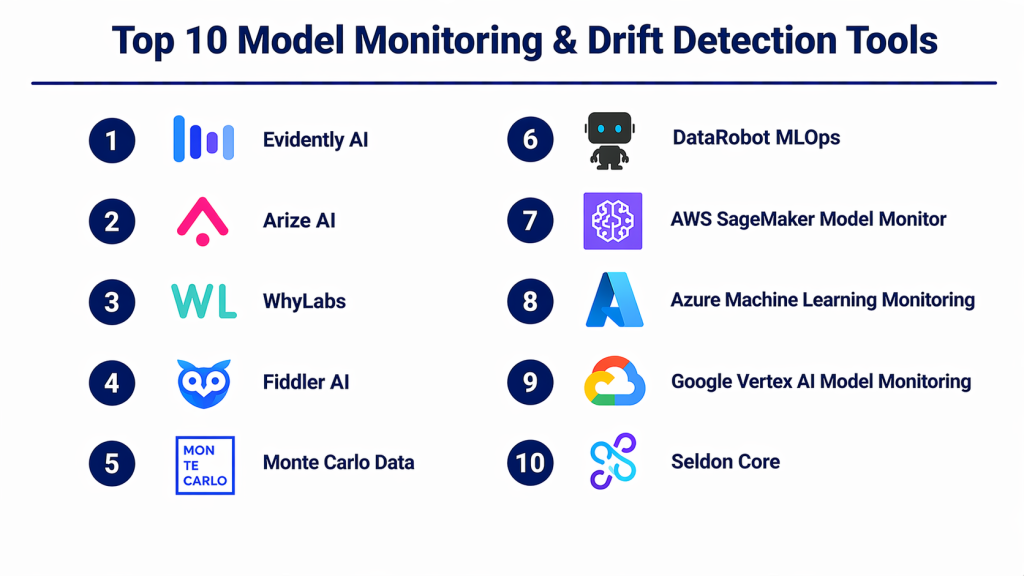

Top 10 Model Monitoring & Drift Detection Tools

#1 — Evidently AI

Evidently AI is an open-source and commercial solution focused on monitoring data and model drift. It is popular among data scientists for its flexibility and transparency.

Key Features

- Data and concept drift detection

- Customizable reports and dashboards

- Feature-level monitoring

- Open-source library with extensibility

- Model performance tracking

- Integration with notebooks and pipelines

Pros

- Highly customizable and transparent

- Strong community adoption

Cons

- Requires setup effort

- Limited out-of-the-box enterprise features

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Integrates with Python workflows, ML pipelines, and visualization tools.

- Jupyter

- Pandas

- MLflow

- Airflow

Support & Community

Strong open-source community; enterprise support available.

#2 — Arize AI

Arize AI provides advanced observability for ML models with strong visualization and explainability features.

Key Features

- Real-time monitoring

- Drift detection (data and concept)

- Model explainability tools

- Root cause analysis

- Performance tracking dashboards

Pros

- Excellent visualization capabilities

- Strong enterprise-grade features

Cons

- Premium pricing

- Learning curve for new users

Platforms / Deployment

Cloud

Security & Compliance

RBAC, encryption; others not publicly stated

Integrations & Ecosystem

Works with modern ML stacks and cloud platforms.

- AWS

- GCP

- Databricks

- Snowflake

Support & Community

Strong enterprise support; growing user base.

#3 — WhyLabs (whylogs)

WhyLabs focuses on data logging and monitoring, using its open-source whylogs library for lightweight instrumentation.

Key Features

- Data profiling at scale

- Drift detection

- Privacy-preserving monitoring

- Observability dashboards

- Lightweight logging

Pros

- Efficient and scalable

- Strong data observability

Cons

- Requires integration effort

- Limited UI compared to competitors

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Designed for flexible pipeline integration.

- Python SDK

- Data pipelines

- Cloud storage

Support & Community

Active community; enterprise support available.

#4 — Fiddler AI

Fiddler AI focuses on explainable AI with strong monitoring and governance capabilities.

Key Features

- Model monitoring

- Explainability dashboards

- Bias detection

- Data drift detection

- Model debugging tools

Pros

- Strong explainability features

- Enterprise-ready governance

Cons

- Premium pricing

- Complex setup

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SOC 2, RBAC, audit logs

Integrations & Ecosystem

Supports enterprise ML environments.

- Kubernetes

- ML pipelines

- Data warehouses

Support & Community

Enterprise-grade support.

#5 — Monte Carlo Data

Monte Carlo focuses on data observability with indirect model monitoring through data quality tracking.

Key Features

- Data quality monitoring

- Anomaly detection

- Data lineage tracking

- Incident alerting

- Pipeline monitoring

Pros

- Strong data-first approach

- Easy integration with data stacks

Cons

- Not ML-specific

- Limited model-level insights

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Strong data ecosystem integrations.

- Snowflake

- BigQuery

- Redshift

Support & Community

Enterprise support available.

#6 — DataRobot MLOps

DataRobot MLOps offers full lifecycle monitoring with enterprise-grade automation and governance.

Key Features

- Automated drift detection

- Performance monitoring

- Model governance

- Retraining triggers

- Centralized dashboards

Pros

- End-to-end platform

- Strong automation

Cons

- Expensive

- Less flexible for custom workflows

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

Enterprise-grade; specifics not publicly stated

Integrations & Ecosystem

Works with enterprise ML stacks.

- APIs

- Cloud platforms

- CI/CD tools

Support & Community

Strong enterprise support.

#7 — AWS SageMaker Model Monitor

A native AWS solution for monitoring deployed models in SageMaker environments.

Key Features

- Data drift detection

- Model quality monitoring

- Scheduled monitoring jobs

- Alerting via CloudWatch

- Integration with AWS ecosystem

Pros

- Deep AWS integration

- Scalable

Cons

- AWS lock-in

- Limited flexibility outside AWS

Platforms / Deployment

Cloud

Security & Compliance

AWS security standards

Integrations & Ecosystem

Tightly integrated with AWS services.

- S3

- CloudWatch

- Lambda

Support & Community

Strong AWS ecosystem support.

#8 — Azure Machine Learning Monitoring

Microsoft’s monitoring solution integrated into Azure ML for tracking model performance and drift.

Key Features

- Drift detection

- Model performance tracking

- Data monitoring

- Alerts and automation

- Integration with Azure ecosystem

Pros

- Strong enterprise integration

- Easy for Azure users

Cons

- Azure dependency

- Limited customization

Platforms / Deployment

Cloud

Security & Compliance

Enterprise-grade Azure compliance

Integrations & Ecosystem

Works within Azure ecosystem.

- Azure Data Factory

- Power BI

- Azure DevOps

Support & Community

Strong enterprise support.

#9 — Google Vertex AI Model Monitoring

Google’s solution for monitoring ML models deployed via Vertex AI.

Key Features

- Drift detection

- Feature attribution

- Monitoring dashboards

- Alerting

- Integration with GCP

Pros

- Scalable

- Strong AI ecosystem

Cons

- GCP dependency

- Limited flexibility

Platforms / Deployment

Cloud

Security & Compliance

Google Cloud standards

Integrations & Ecosystem

Deep GCP integration.

- BigQuery

- Dataflow

- AI pipelines

Support & Community

Strong enterprise support.

#10 — Seldon Core (Seldon Deploy)

Seldon provides Kubernetes-native model deployment and monitoring capabilities.

Key Features

- Drift detection

- Model performance tracking

- Kubernetes-native deployment

- Custom metrics

- Explainability tools

Pros

- Highly flexible

- Open-source friendly

Cons

- Requires Kubernetes expertise

- Complex setup

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Works well in cloud-native environments.

- Kubernetes

- Prometheus

- Grafana
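For teams wiring custom drift metrics into a Prometheus and Grafana stack, the sketch below renders per-feature drift scores in the Prometheus text exposition format. It is a generic illustration, not Seldon's API; the function name and the metric name `feature_drift_score` are hypothetical.

```python
def render_drift_gauges(scores, metric="feature_drift_score"):
    """Render {feature_name: drift_score} as Prometheus text exposition lines."""
    lines = [
        f"# HELP {metric} Drift score per input feature.",
        f"# TYPE {metric} gauge",
    ]
    for feature, score in sorted(scores.items()):
        # Label values must escape backslashes, double quotes, and newlines.
        label = (
            feature.replace("\\", "\\\\")
            .replace('"', '\\"')
            .replace("\n", "\\n")
        )
        lines.append(f'{metric}{{feature="{label}"}} {float(score)}')
    return "\n".join(lines) + "\n"


# Illustrative scores; in practice these would come from a drift detector.
print(render_drift_gauges({"age": 0.31, "income": 0.05}))
```

Serving this text from an HTTP endpoint that Prometheus scrapes would make the scores available for Grafana dashboards and alert rules. In practice, an official Prometheus client library handles the formatting and the endpoint.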

Support & Community

Strong open-source community.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Evidently AI | Data scientists | Web | Cloud/Self-hosted | Open-source flexibility | N/A |
| Arize AI | Enterprises | Web | Cloud | Advanced observability | N/A |
| WhyLabs | Data teams | Web | Cloud/Self-hosted | Data profiling | N/A |
| Fiddler AI | Regulated industries | Web | Cloud/Hybrid | Explainability | N/A |
| Monte Carlo | Data teams | Web | Cloud | Data observability | N/A |
| DataRobot MLOps | Enterprises | Web | Cloud/Hybrid | Automation | N/A |
| AWS Model Monitor | AWS users | Web | Cloud | Native AWS integration | N/A |
| Azure ML Monitoring | Azure users | Web | Cloud | Enterprise integration | N/A |
| Vertex AI Monitoring | GCP users | Web | Cloud | GCP-native monitoring | N/A |
| Seldon | DevOps teams | Web | Self-hosted/Hybrid | Kubernetes-native | N/A |

Evaluation & Scoring of Model Monitoring & Drift Detection Tools

| Tool Name | Core | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Evidently AI | 8 | 7 | 7 | 6 | 7 | 7 | 8 | 7.3 |
| Arize AI | 9 | 8 | 9 | 8 | 9 | 8 | 7 | 8.4 |
| WhyLabs | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.8 |
| Fiddler AI | 9 | 7 | 8 | 9 | 8 | 8 | 7 | 8.2 |
| Monte Carlo | 7 | 8 | 9 | 7 | 8 | 8 | 7 | 7.9 |
| DataRobot MLOps | 9 | 7 | 8 | 8 | 9 | 9 | 6 | 8.2 |
| AWS Model Monitor | 8 | 7 | 9 | 8 | 9 | 8 | 7 | 8.1 |
| Azure ML Monitoring | 8 | 8 | 9 | 8 | 8 | 8 | 7 | 8.1 |
| Vertex AI Monitoring | 8 | 8 | 9 | 8 | 9 | 8 | 7 | 8.2 |
| Seldon | 8 | 6 | 8 | 7 | 8 | 7 | 8 | 7.6 |

How to interpret scores:

- Scores are comparative within this category, not absolute benchmarks

- Higher scores indicate stronger overall capability across weighted criteria

- Enterprise tools score higher in security and integrations

- Open-source tools score better in value and flexibility

- Choose based on your specific environment and priorities
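If your priorities differ from ours, you can re-weight the seven criteria yourself. The sketch below computes a weighted total from per-criterion scores. The weights are hypothetical, since this article does not publish the ones behind its totals, so the output should not be expected to match the table above.

```python
# Hypothetical weights for illustration only; adjust to your own priorities.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores, weights=WEIGHTS):
    """Combine per-criterion scores (0-10) into one weighted total."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return round(sum(scores[name] * w for name, w in weights.items()), 2)


# Example scores a team might assign after its own evaluation.
my_scores = {
    "core": 9, "ease": 6, "integrations": 8, "security": 7,
    "performance": 8, "support": 7, "value": 9,
}
print(weighted_total(my_scores))
```

A team in a regulated industry might shift weight from value to security, which can change the ranking even when the underlying scores stay the same.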

Which Model Monitoring & Drift Detection Tool Is Right for You?

Solo / Freelancer

Evidently AI or WhyLabs are ideal due to simplicity and cost efficiency.

SMB

WhyLabs or Monte Carlo provide a good balance between features and usability.

Mid-Market

Arize AI or Seldon offer scalability with reasonable flexibility.

Enterprise

DataRobot, Fiddler, or cloud-native tools (AWS, Azure, GCP) are best for governance and scale.

Budget vs Premium

- Budget: Evidently AI, Seldon

- Premium: Arize AI, DataRobot

Feature Depth vs Ease of Use

- Feature-rich: Arize, Fiddler

- Easy-to-use: Evidently, Monte Carlo

Integrations & Scalability

- Best integrations: AWS, Azure, GCP

- Most scalable: Arize, DataRobot

Security & Compliance Needs

- High compliance: Fiddler, DataRobot

- Moderate: Cloud-native tools

Frequently Asked Questions (FAQs)

What is model drift?

Model drift occurs when the input data, or the relationship between inputs and the target, changes over time and reduces model accuracy.

How often should models be monitored?

Continuously for real-time systems, or periodically for batch models.
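For continuous monitoring, one simple pattern is to track accuracy over a sliding window of the most recent labeled predictions. The sketch below is a minimal illustration using only the standard library. The class name, window size, and accuracy floor are assumptions, not defaults from any tool in this list.

```python
from collections import deque


class RollingAccuracy:
    """Tracks accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window=500, min_accuracy=0.9):
        self.outcomes = deque(maxlen=window)  # oldest entries drop off automatically
        self.min_accuracy = min_accuracy

    def record(self, prediction, actual):
        self.outcomes.append(prediction == actual)

    @property
    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def is_degraded(self):
        # Only judge once the window is full, to avoid noisy early alerts.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy < self.min_accuracy


monitor = RollingAccuracy(window=4, min_accuracy=0.75)
for prediction, actual in [(1, 1), (0, 0), (1, 0), (0, 1)]:
    monitor.record(prediction, actual)
print(monitor.accuracy, monitor.is_degraded())
```

Batch models can apply the same check once per scoring run instead of per prediction. Either way, this approach depends on ground-truth labels arriving, which is often delayed in practice.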

Are these tools expensive?

Pricing varies widely; open-source options are cheaper, enterprise tools are premium.

Do I need monitoring for all models?

Not always. Prioritize production-critical models, where an undetected failure has real business or compliance cost.

Can drift detection trigger retraining?

Yes, many tools support automated retraining workflows.
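The trigger logic behind such a workflow can be quite small. The sketch below fires only after several consecutive drift scores exceed a threshold, so a single noisy batch does not start a retraining run. The function name, the threshold of 0.25, and the three-in-a-row rule are illustrative assumptions.

```python
def should_retrain(drift_scores, threshold=0.25, consecutive=3):
    """Return True when the last `consecutive` drift scores all exceed `threshold`."""
    if consecutive <= 0:
        raise ValueError("consecutive must be positive")
    recent = drift_scores[-consecutive:]
    # Require a full run of breaches; too little history never triggers.
    return len(recent) == consecutive and all(s > threshold for s in recent)


# Illustrative history of per-batch drift scores, oldest first.
history = [0.08, 0.12, 0.31, 0.40, 0.37]
if should_retrain(history):
    # A real setup would call its orchestrator here (hypothetical hook).
    print("drift sustained: trigger retraining pipeline")
```

Many teams put a human approval step between this signal and the actual retraining job, since retraining on drifted data is not always the right fix.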

What is data drift vs concept drift?

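Data drift is a change in the distribution of the input data. Concept drift is a change in the relationship between inputs and the target, so the same inputs now lead to different outcomes.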
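Data drift can be measured from inputs alone, which is why it is usually the first signal teams monitor. The sketch below computes the Population Stability Index (PSI), one common drift score, for a single numeric feature. The equal-width binning, the `eps` floor, and the sample data are simplifying assumptions. Concept drift, by contrast, generally cannot be confirmed without ground-truth labels.

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples.

    Bin edges are equal-width over the reference range; `eps` replaces
    empty-bin proportions so the logarithm stays defined.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back when all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


baseline = list(range(100))            # illustrative training-time values
shifted = [v + 50 for v in baseline]   # same feature after a large shift
print(psi(baseline, baseline), psi(baseline, shifted))
```

A widely quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but these cutoffs are conventions rather than guarantees and should be tuned per feature.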

Are these tools cloud-only?

Many support hybrid or self-hosted deployments.

How long does implementation take?

From a few days to several weeks depending on complexity.

Can I switch tools later?

Yes, but migration effort depends on integrations and data pipelines.

What are alternatives?

Basic logging, dashboards, or custom-built monitoring solutions.

Conclusion

Model monitoring and drift detection tools are no longer optional. They are critical for maintaining reliable, trustworthy AI systems. As models move from experimentation to production, continuous visibility into performance, data quality, and anomalies becomes essential.

There is no single “best” tool. The right choice depends on your environment, scale, budget, and technical maturity. Open-source tools provide flexibility and cost efficiency, while enterprise platforms deliver automation, governance, and scalability.