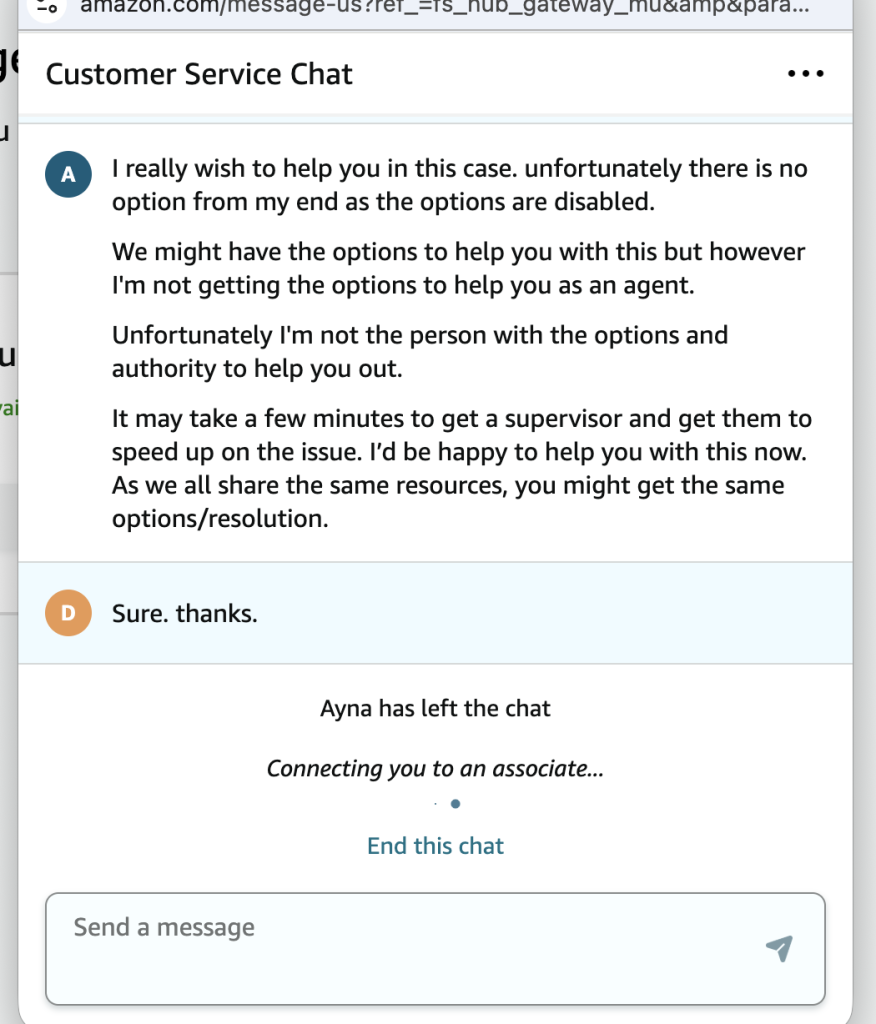

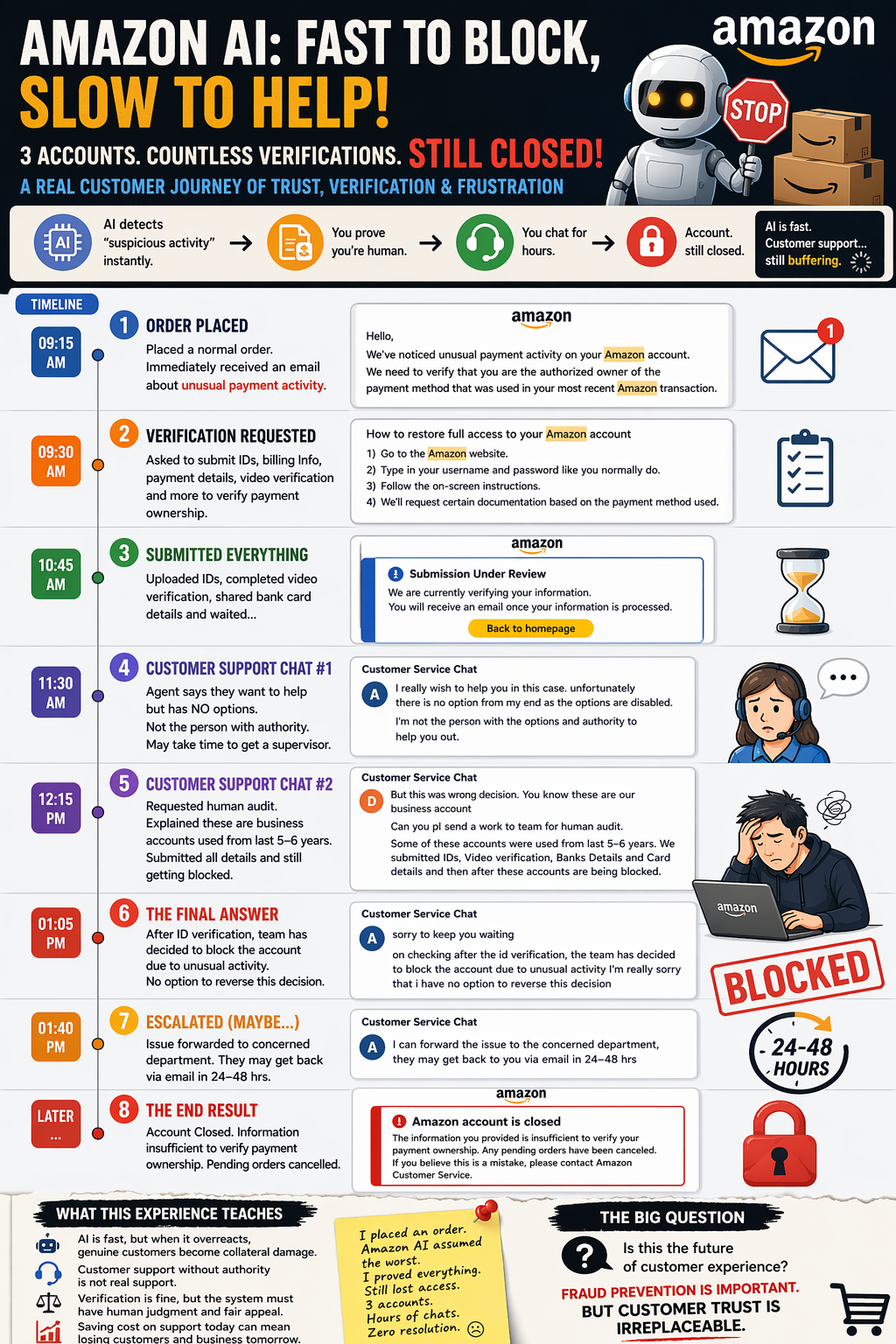

A real customer timeline of how over-automation can quietly damage trust, loyalty, and business

There is a big difference between using AI to protect customers and using AI in a way that makes real customers feel like criminals.

That is exactly what my recent Amazon experience felt like.

This was not about one random new account. These were older accounts, around five years old, with multiple payment methods and more than 300 orders delivered in the past. That is why the experience felt even more shocking. I was not dealing with the platform as a suspicious first-time buyer. I was dealing with it as an established customer who had already built years of purchase history.

And yet, the moment I placed an order, the system moved fast. Very fast.

First came the alert. Then the verification steps. Then the documents. Then the waiting. Then the customer support chats. Then the escalation. And in the end, after all the IDs, video verification, payment-related details, and hours of effort, the accounts were still closed.

That is why this is not just a complaint. It is a warning about what happens when AI-driven trust systems move faster than human judgment.

The one-line version of my experience

Amazon’s AI was fast enough to block the accounts instantly.

Amazon’s support was slow enough to keep asking for patience.

And somewhere in the middle, customer trust disappeared.

Or, in the funny version I shared earlier:

Amazon AI is so advanced that it blocked 3 of my accounts instantly the moment I placed an order. Then came the real challenge — proving I’m human by sharing documents and chatting for hours… still no resolution. AI is fast. Customer support is still thinking.

Funny line. Painful reality.

Timeline of what happened

Phase 1: A normal order suddenly became “unusual payment activity”

Everything started with what should have been a completely routine purchase.

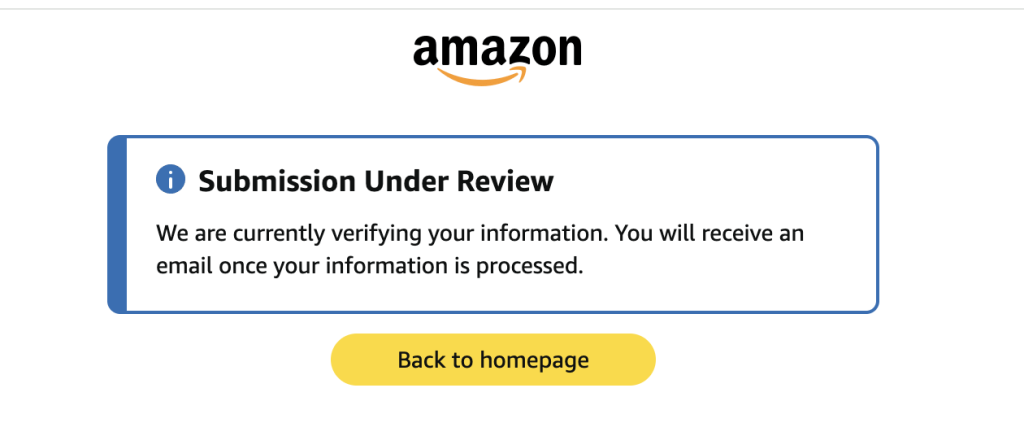

I placed a normal order. Instead of a smooth checkout experience, I received a message saying Amazon had noticed unusual payment activity and needed to verify that I was the authorized owner of the payment method used in the transaction.

That was the moment the shopping experience turned into a defensive process. I was no longer simply placing an order. I was being pulled into a system that had already become suspicious.

Image 1: Email screenshot mentioning unusual payment activity and asking for account/payment verification.

This kind of message instantly changes the emotional state of the customer. A normal purchase becomes a risk event. Convenience turns into anxiety.

And once that shift happens, the platform is no longer acting like a marketplace. It starts feeling like an interrogation workflow.

Phase 2: Amazon asked for proof — IDs, billing validation, and payment ownership

Next came the verification instructions.

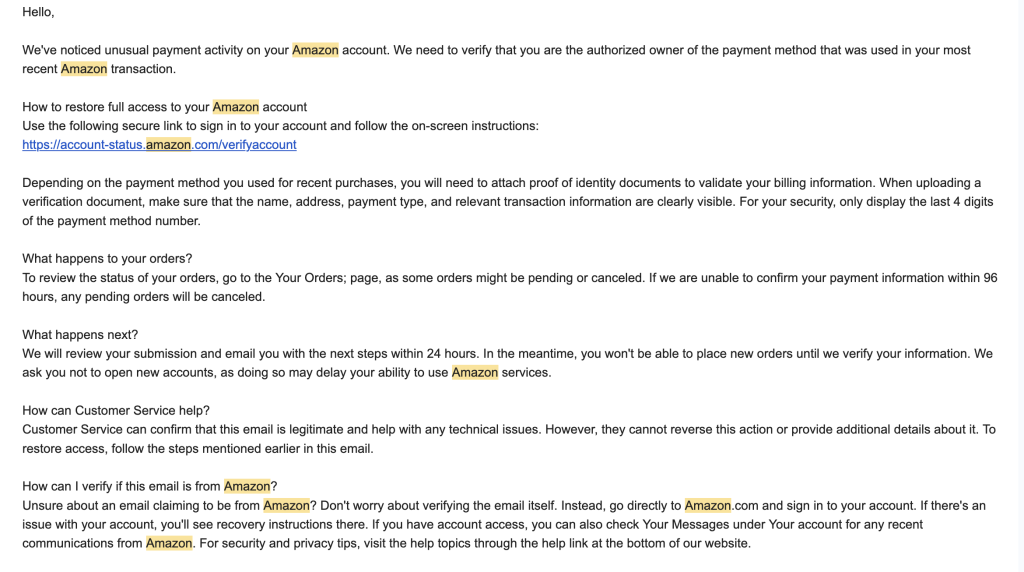

The process required proof that I was the real owner of the payment method. The instructions pointed toward identity and billing validation, asking for documentation that clearly showed details such as name, address, payment type, and relevant transaction information. It also indicated that only the last four digits of the payment method should be visible.

Then there was another message explaining how to restore access to the account by signing in, following the on-screen steps, and submitting the required documentation based on the payment method used.

Image 2: Screenshot showing “How to restore full access to your Amazon account.”

At this point, most genuine customers cooperate. That is the normal reaction. You assume the company is just being careful. You assume that once you provide the requested information, the matter will be resolved.

That assumption is exactly where trust begins — and exactly where trust gets damaged when the process fails.

Phase 3: Submission under review — the waiting room with no transparency

After the details were submitted, the account status changed to a review stage.

The screen said:

Submission Under Review

We are currently verifying your information. You will receive an email once your information is processed.

On paper, that sounds reassuring. It suggests progress. It suggests that the evidence is being examined and that a decision will follow.

Image 3: Blue status page showing “Submission Under Review.”

But the reality of such a page is very different when you are the customer.

You wait, but you do not know what exactly is being reviewed.

You wait, but you do not know whether a human is actually looking at it.

You wait, but you do not know how the decision will be made.

You wait, but you do not know whether the outcome was already predetermined.

That is one of the biggest problems with over-automated trust systems: they create the appearance of a process without giving the customer any visibility into whether the process is meaningful.

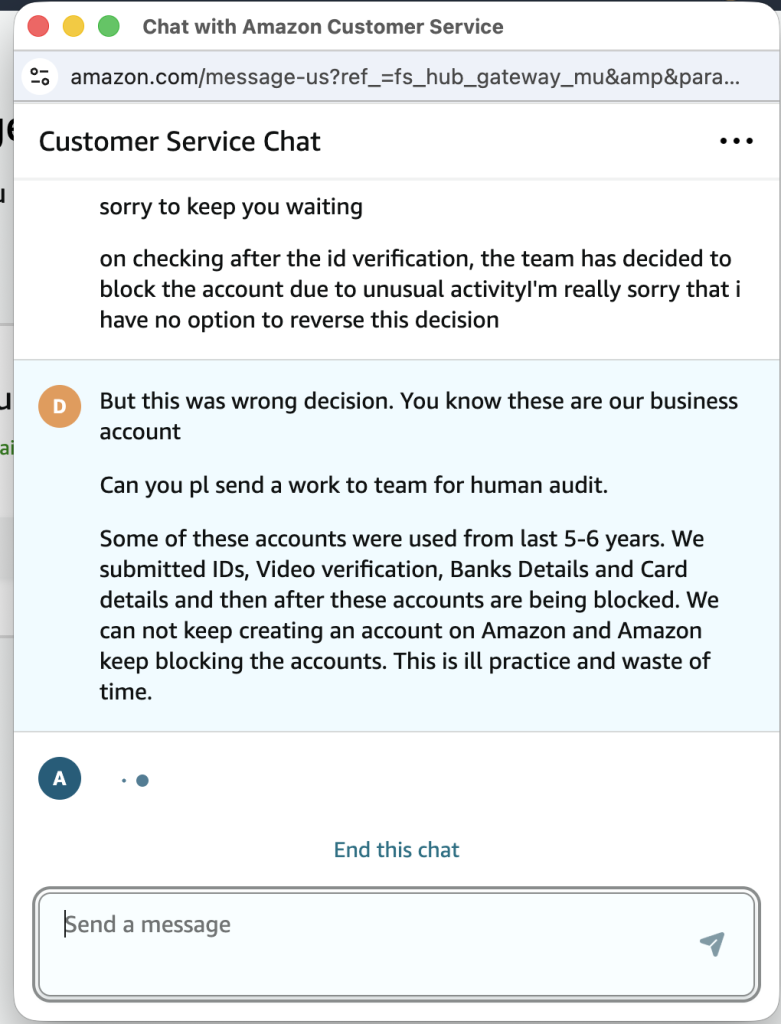

Phase 4: Customer support admitted they had little or no real control

When the review process did not solve the problem, I contacted Amazon customer support.

This was the stage where the issue became bigger than account verification. It became a customer experience problem.

One support associate clearly said they wanted to help, but the options on their end were disabled. They indicated that they were not the person with the authority to resolve the issue and that even if escalation happened, I might still get the same options or the same resolution.

Image 4: Customer service chat where the agent says they want to help, but their options are disabled and they may not have the authority.

That one moment explains almost everything.

Because once frontline support cannot act, cannot override, cannot interpret context, and cannot meaningfully escalate, the human layer stops being support. It becomes a communication layer sitting on top of a machine-led decision.

And that is dangerous.

A customer does not care whether the limitation is policy-based, tool-based, team-based, or system-based. From the customer’s point of view, the message is simple:

The platform can block me immediately, but no one can actually help me.

That is a very damaging message for any company.

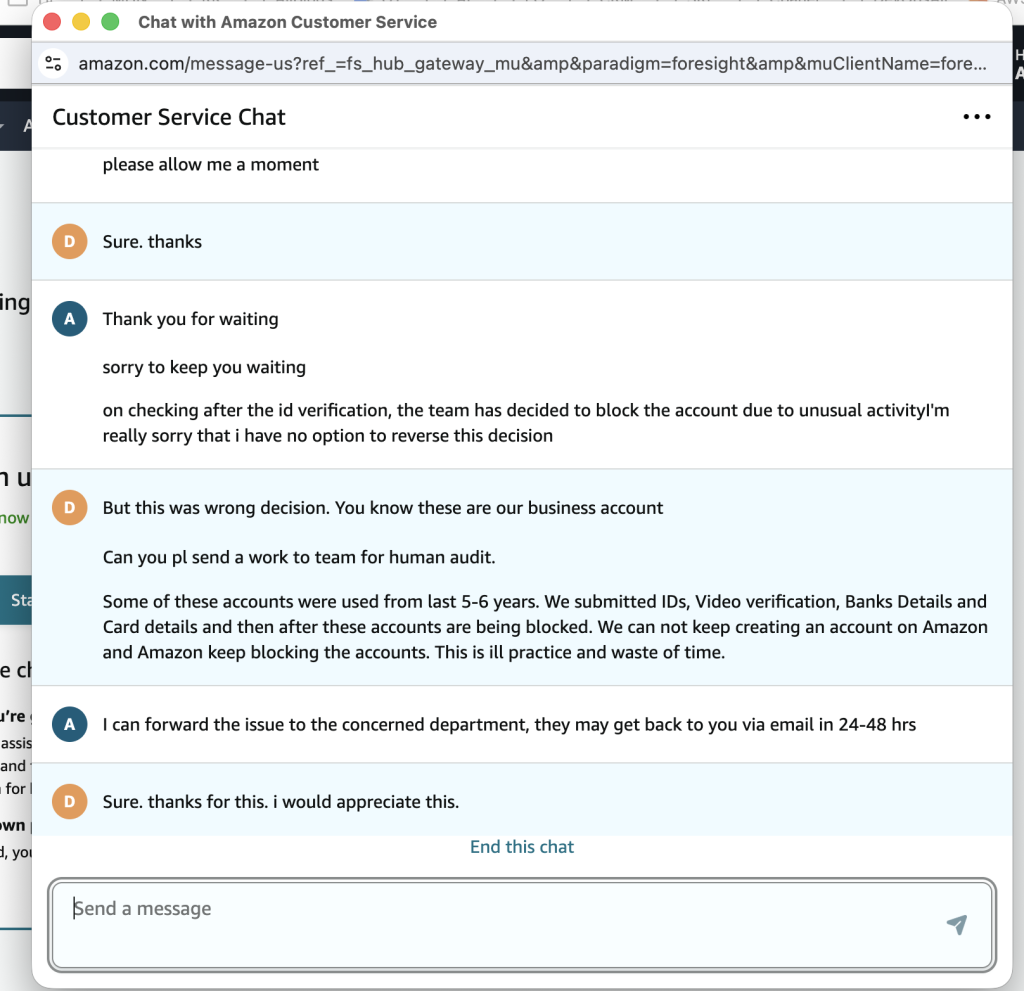

Phase 5: I requested a human audit because these were old business-related accounts

At that point, I explicitly asked for the issue to be sent for human audit.

I explained that these were business accounts. I explained that some of them had been used for five to six years. I explained that we had already submitted IDs, completed video verification, and shared the required bank and card-related information. And still, despite all of that, the accounts were being blocked.

Image 5: Chat message requesting a human audit and explaining that long-used business accounts were still being blocked even after all verification steps.

This is where the experience became not just frustrating, but unreasonable.

Because if long-standing accounts with years of purchase history can still be treated like instant threats even after extensive verification, then the trust engine is either too aggressive, too rigid, or too disconnected from context.

A smart AI system should not only detect anomalies. It should also understand history, patterns, loyalty, account maturity, and the difference between genuine edge cases and actual fraud.

Without that, “AI-powered trust” quickly becomes “AI-powered collateral damage.”

Phase 6: After all the verification, the final human message was still: blocked

Then came the most damaging support message of all.

The response stated that, after reviewing the case following the ID verification, the team had decided to block the account due to unusual activity, and that the support representative had no option to reverse the decision.

Image 6: Chat screenshot stating the team decided to block the account and the agent cannot reverse the decision.

This is the exact moment where a customer relationship breaks.

Not because fraud checks happened. Fraud checks are understandable.

Not because documents were requested. Verification can be justified.

Not because support needed time. Some cases do take time.

The break happens when the customer fully cooperates, shares sensitive details, waits through the process, follows every step, and still receives a final answer that feels absolute, unexplained, and unchallengeable.

That is when the system stops feeling protective and starts feeling unfair.

When a customer does everything asked of them and still loses access, the issue is no longer just fraud prevention. It becomes a trust failure.

Phase 7: The issue was forwarded, but even that felt like delay, not resolution

In another support exchange, I was told that the issue could be forwarded to the concerned department and that they might respond within 24 to 48 hours by email.

Image 7: Chat screenshot saying the issue was forwarded to the concerned department and a response may come in 24–48 hours.

On the surface, this sounds positive. Any escalation is better than an immediate dead end.

But after repeated verification, repeated account blocks, and repeated support interactions, this kind of answer begins to feel less like problem solving and more like time extension.

When the process has already asked for IDs, bank details, payment ownership proof, and video verification, the customer expects clarity — not another holding statement.

And that is where exhaustion starts.

Phase 8: The final outcome — “Amazon account is closed”

Eventually the system reached its final visible answer.

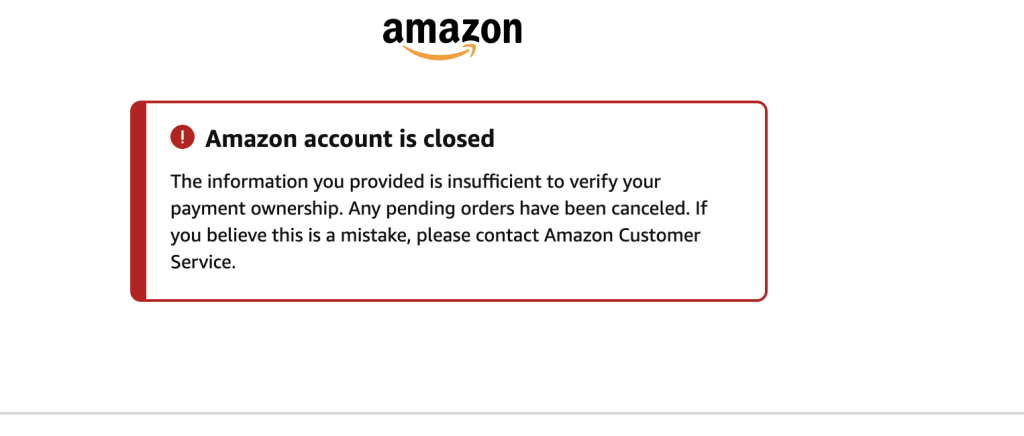

The message on screen said:

Amazon account is closed

The information you provided is insufficient to verify your payment ownership. Any pending orders have been canceled.

Image 8: Red status page showing “Amazon account is closed.”

That message is short, but it carries enormous weight.

It means the system considers the customer’s effort insufficient.

It means the pending orders are gone.

It means the review is over.

It means all the time, documentation, waiting, and support conversations ended at exactly the same point where the customer feared they would.

And that is why this is bigger than one order or one blocked account.

It is about the experience of being treated as guilty by default, then being asked to prove everything, and then still being rejected.

Why this matters for Amazon.com and Amazon.in

Let me be fair.

I am not saying that one personal experience automatically proves a broad business collapse. That would be an exaggeration.

But I am saying something important: if this pattern becomes common, and if AI-driven account risk systems repeatedly overreach without meaningful human correction, then it can seriously hurt both Amazon.com and Amazon.in in the long term. That conclusion follows directly from the experience documented above and the broader trust concerns it raises.

Here is why.

1. False positives are not harmless

Every false positive is more than a technical miss.

It can mean a cancelled order.

It can mean a lost sale.

It can mean a customer who decides never to come back.

It can mean a bad story that gets shared publicly.

Fraud systems are supposed to reduce losses. But if they become too aggressive, they start creating a new kind of loss: self-created business damage.

2. Verification fatigue destroys loyalty

One round of verification is understandable.

But repeated identity checks, payment proofs, video verification, bank details, card-related confirmation, and still getting blocked? That creates fatigue, frustration, and resentment.

At some point the customer starts asking a very basic question:

If I did everything you asked, what was the point of asking at all?

That question is deadly for trust.

3. Support without authority is not real support

The chats reveal a deeper issue than a single bad agent or a single bad response.

They suggest that support may exist largely to relay outcomes rather than change them.

That is a major customer experience weakness. A company can automate risk scoring, but it should never automate away the possibility of fair review.

4. Long-term customer history should matter

If an account has years of history, many successful deliveries, multiple payment methods, and recognizable behavior, then the system should use that context intelligently.

A smart system does not ignore history. It weighs it.

When history seems irrelevant to the final outcome, customers begin to feel that the system is not intelligent at all. It is simply rigid.

5. Saving support cost can quietly cost far more

This is the uncomfortable business truth behind all of this.

Maybe heavy automation reduces staffing effort.

Maybe it speeds up decisions.

Maybe it standardizes workflows.

Maybe it lowers internal operational cost.

But if the result is that real customers feel humiliated, unsupported, and disposable, then those savings may come at the cost of retention, repeat orders, word-of-mouth trust, and long-term brand value.

That is how decline begins in modern digital businesses. Not always with a headline. Often with silent exits.

The emotional side companies often miss

What makes experiences like this so frustrating is not only the action, but the feeling.

Customers come to Amazon expecting speed, simplicity, and reliability.

They do not expect to place a normal order and suddenly feel like they need to defend their identity to a machine. They do not expect to provide personal proof and still feel unseen. They do not expect to reach support only to discover that support cannot truly support them.

And once that happens, the relationship with the brand changes.

The customer stops feeling valued and starts feeling processed.

That emotional shift matters more than many companies realize.

Because when customers begin joking that “robots do not trust humans anymore,” the joke is actually feedback in disguise.

My honest conclusion

AI should be used in fraud prevention. No reasonable customer would argue against that.

But AI without transparency is risky.

AI without proportionality is damaging.

AI without meaningful human escalation is not customer protection. It is automated friction.

From my experience, the real story was not simply that Amazon checked a payment method. The real story was the sequence:

A normal order was placed.

The account was flagged.

Documents were requested.

The case went under review.

Support could not truly act.

Human audit was requested.

The account was still closed.

That is not a trust journey.

That is a trust breakdown.

What Amazon should fix

If Amazon wants AI-based trust systems to work without alienating legitimate customers, it needs a better recovery path.

It needs a real human review channel for disputed closures.

It needs clearer reason codes instead of vague labels like “unusual activity.”

It needs continuity, where one case is owned by one team instead of being pushed through support fragments.

It needs better treatment for older and business-related accounts with long histories.

And it needs verification processes that do not keep restarting from zero every time.

Fraud prevention is necessary.

But customer humiliation should never be part of the design.

Final line

Amazon’s AI moved faster than its customer support, and customer trust got caught in the middle.

